The AI-Native Infrastructure & Data Strategy

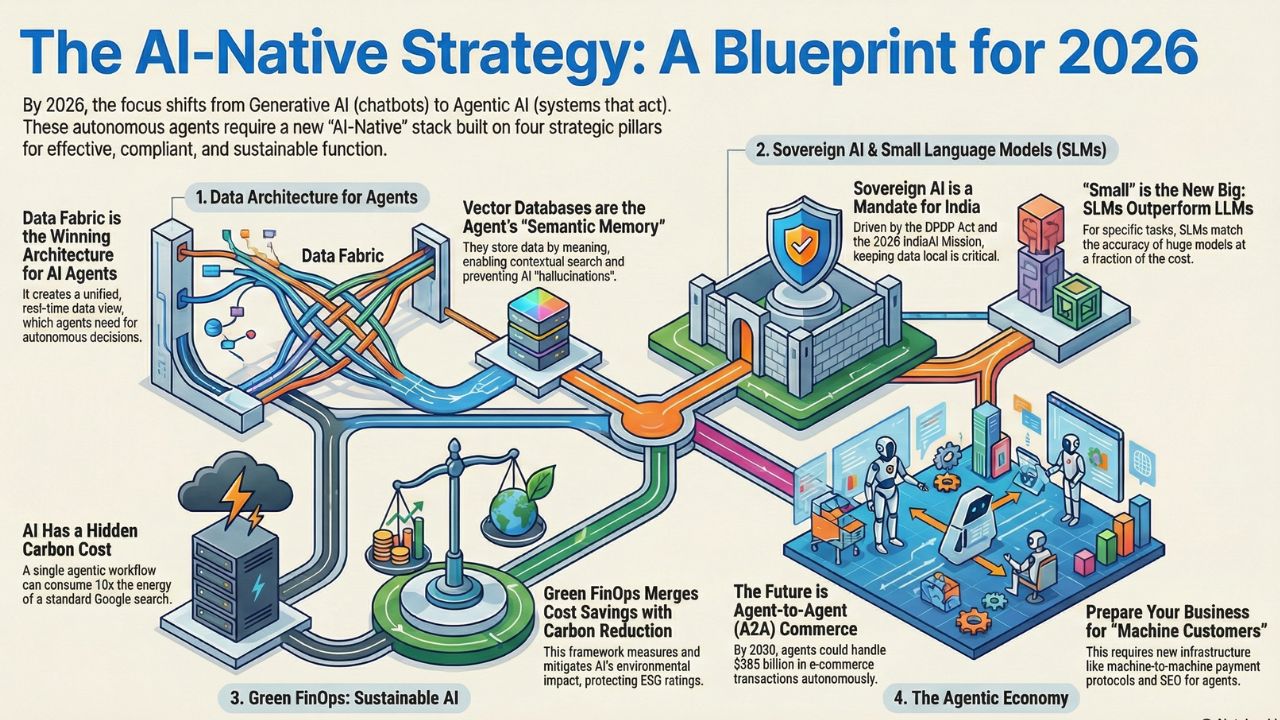

By 2026, the question for Indian technology leaders will no longer be "How do I use ChatGPT?" but "How do I build the infrastructure to support thousands of autonomous AI agents?"

We are moving from the era of Generative AI (chatbots that talk) to Agentic AI (systems that do). These agents will negotiate contracts, optimize cloud spend, and write code autonomously. But they cannot function on legacy infrastructure.

They require a new "AI-Native" stack: vector databases, decentralized data meshes, and sovereign compute layers that comply with India's strict DPDP Act. This hub, The AI-Native Infrastructure & Data Strategy, is the blueprint for CTOs and Architects.

It details the specific architectural decisions—from "Data Fabric" to "Green FinOps"—that will define the successful enterprise of 2026.

Explore the Core Pillars of AI Infrastructure

We have deconstructed the AI-Native stack into four critical strategic areas. Explore the sections below to architect your organization for the agentic future.

1. Data Mesh vs. Data Fabric: Architecting for Agentic AI

AI agents are only as intelligent as the data they can access. Traditional data warehouses are too slow and siloed for agents that need real-time context.

This section explores the battle between Data Mesh (decentralized ownership) and Data Fabric (automated integration) and why "Vector Databases" are the new gold standard for enterprise memory.

Key Topics:

- Vector database comparison (Pinecone vs. Milvus).

- RAG architecture best practices.

- Preparing unstructured data for LLMs.

Why it matters: If your agents can't find the data, they will hallucinate. Build the right foundation.
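To make the retrieval step behind RAG concrete, here is a minimal sketch in plain Python. It is an illustration only: the `embed` function is a toy word-count stand-in for a real embedding model, and the in-memory list stands in for a vector database such as Pinecone or Milvus. All function names and the `min_score` threshold are assumptions made for this example.

```python
import math
import re
from collections import Counter
from typing import Optional


def embed(text: str) -> Counter:
    """Toy 'embedding': word counts. A real system would call an embedding model."""
    return Counter(re.findall(r"\w+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[term] * b[term] for term in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, documents: list[str], k: int = 2,
             min_score: float = 0.1) -> list[str]:
    """Return up to k documents most similar to the query, above min_score."""
    q = embed(query)
    scored = sorted(((cosine(q, embed(d)), d) for d in documents), reverse=True)
    return [doc for score, doc in scored[:k] if score >= min_score]


def build_prompt(query: str, documents: list[str]) -> Optional[str]:
    """Ground the question in retrieved context, or return None if nothing matches."""
    context = retrieve(query, documents)
    if not context:
        return None  # caller should decline to answer rather than guess
    joined = "\n".join(f"- {c}" for c in context)
    return f"Answer using only this context:\n{joined}\n\nQuestion: {query}"


DOCS = [
    "Refund policy: refunds are issued within 7 days",
    "Office hours are 9 to 5",
    "Cloud spend is reviewed monthly",
]
```

Returning `None` when nothing relevant is retrieved is the design choice that matters here: an agent that is told there is no supporting context can refuse or escalate instead of inventing an answer.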

Read More: Data Mesh vs. Data Fabric for AI

Discover the data architecture required to feed your autonomous agents.

2. Sovereign AI & Small Language Models (SLMs): The India Strategy

With India's Digital Personal Data Protection (DPDP) Act moving into enforcement and the IndiaAI Mission (approved in 2024) under way, relying solely on public US-based models (like GPT-4) is a governance risk.

This section guides leaders on deploying Sovereign AI using open-weight Small Language Models (SLMs) such as Llama 3 8B or Mistral 7B running on your own private infrastructure.

Key Topics:

- Sovereign AI India strategy.

- Private AI infrastructure for banks.

- On-premise hardware requirements.

Why it matters: Reduce latency, cut API costs, and ensure 100% data residency compliance.
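One way to picture the residency idea is a router that keeps anything resembling personal data on private infrastructure. The sketch below is a simplified assumption, not a compliance control: the target names `on_prem` and `external` are hypothetical, and the regular expressions only catch a few obvious identifier formats (PAN, 12-digit Aadhaar-style numbers, 10-digit mobile numbers). A production system would need proper data classification and legal review.

```python
import re

# Illustrative patterns only; they will miss many kinds of personal data.
PERSONAL_DATA_PATTERNS = [
    re.compile(r"\b[A-Z]{5}[0-9]{4}[A-Z]\b"),     # PAN format: 5 letters, 4 digits, 1 letter
    re.compile(r"\b\d{4}\s?\d{4}\s?\d{4}\b"),     # 12-digit Aadhaar-style number
    re.compile(r"\b[6-9]\d{9}\b"),                # 10-digit Indian mobile number
]


def contains_personal_data(text: str) -> bool:
    """Return True if any illustrative pattern matches the text."""
    return any(pattern.search(text) for pattern in PERSONAL_DATA_PATTERNS)


def route(prompt: str) -> str:
    """Pick a hypothetical deployment target for a prompt.

    'on_prem'  -> privately hosted SLM inside the organisation's own infrastructure
    'external' -> third-party hosted model API
    """
    return "on_prem" if contains_personal_data(prompt) else "external"
```

A stricter variant would default to `on_prem` and only send a prompt out when it is positively classified as non-personal, which fails safe when the classifier is unsure.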

Read More: Sovereign AI & India Strategy

Learn how to build secure, compliant AI systems within Indian borders.

3. Green FinOps: Measuring the Carbon Cost of AI Agents

AI is energy-hungry. By some estimates, a single agentic workflow can consume around 10x the energy of a standard Google search. As sustainability becomes a board-level KPI, CTOs must measure and mitigate the carbon impact of their AI fleet.

This section merges FinOps (Cost) with GreenOps (Carbon) to create a sustainable AI strategy.
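As a rough sketch of what reporting cost and carbon side by side could look like, the example below multiplies each workflow's energy use by a grid carbon-intensity factor and pairs it with the billed cost. The `WorkflowRun` type, the field names, and every number you pass in are assumptions for illustration; real figures would come from metering, your cloud provider's reports, and the grid intensity of the hosting region.

```python
from dataclasses import dataclass


@dataclass
class WorkflowRun:
    name: str
    energy_kwh: float   # metered or estimated energy for the run
    cloud_cost: float   # billed cost, in any single currency


def carbon_kg(run: WorkflowRun, grid_kg_per_kwh: float) -> float:
    """Estimated emissions: energy used times grid carbon intensity."""
    return run.energy_kwh * grid_kg_per_kwh


def fleet_report(runs: list[WorkflowRun], grid_kg_per_kwh: float) -> dict:
    """Aggregate cost (FinOps) and carbon (GreenOps) for a set of runs."""
    total_cost = sum(r.cloud_cost for r in runs)
    total_carbon = sum(carbon_kg(r, grid_kg_per_kwh) for r in runs)
    worst = max(runs, key=lambda r: carbon_kg(r, grid_kg_per_kwh), default=None)
    return {
        "total_cost": total_cost,
        "total_carbon_kg": total_carbon,
        "highest_carbon_workflow": worst.name if worst else None,
    }
```

Tracking both totals per workflow makes trade-offs visible, for example a cheaper region that runs on a more carbon-intensive grid.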

Key Topics:

- Sustainable AI computing.

- Measuring AI carbon footprint.

- Eco-friendly data center strategies.

Why it matters: Don't let your AI innovation ruin your ESG rating.

Read More: Green FinOps & Sustainable AI

Frameworks for reporting and reducing the environmental cost of your AI.

4. The Agentic Economy: Preparing for Bot-to-Bot Commerce

What happens when your customer isn't a human, but an AI agent acting on their behalf? The "Agentic Economy" is the next frontier of digital commerce, predicted to hit $385 billion.

This forward-looking section explores how to optimize your digital presence for "Machine Customers", from SEO for agents to automated negotiation protocols.

Key Topics:

- Agent-to-agent economy.

- Marketing to AI agents.

- Algorithmic commerce business models.

Why it matters: Prepare your business for a future where sales happen machine-to-machine.

Read More: The Agentic Economy & Bot Commerce

Understand the future business models of the automated economy.

Frequently Asked Questions (FAQ)

A: Generative AI (like ChatGPT) mostly requires inference APIs. Agentic AI requires a "stateful" infrastructure—memory (Vector DBs), tools (APIs), and permissioning layers—so the agent can remember past actions and execute tasks across systems.
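The three stateful pieces named above (memory, tools, permissioning) can be sketched in a few lines. This is a toy model under stated assumptions: the `Agent` class, the tool names, and the in-memory list used as "memory" are all hypothetical, and a real deployment would back memory with a vector database and enforce permissions in a dedicated policy layer.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Agent:
    name: str
    tools: dict[str, Callable[[str], str]]      # tool name -> callable API wrapper
    allowed_tools: set[str]                     # permissioning layer
    memory: list[tuple[str, str, str]] = field(default_factory=list)  # action log

    def act(self, tool_name: str, argument: str) -> str:
        """Run a tool if permitted, and record the attempt in memory either way."""
        if tool_name not in self.allowed_tools:
            self.memory.append((tool_name, argument, "DENIED"))
            raise PermissionError(f"{self.name} may not call {tool_name}")
        result = self.tools[tool_name](argument)
        self.memory.append((tool_name, argument, result))
        return result


# Hypothetical tools standing in for real enterprise APIs.
def lookup_invoice(invoice_id: str) -> str:
    return f"{invoice_id}: paid"


def issue_refund(invoice_id: str) -> str:
    return f"{invoice_id}: refunded"


support_agent = Agent(
    name="support-agent",
    tools={"lookup_invoice": lookup_invoice, "issue_refund": issue_refund},
    allowed_tools={"lookup_invoice"},  # read-only by default
)
```

Logging denied attempts alongside successful ones gives the agent (and its auditors) a full record of what it tried to do, not just what it was allowed to do.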

A: Agents need to connect data from CRM, ERP, and Slack instantly. A Data Fabric uses metadata to weave these disparate sources together, allowing the agent to "see" the whole enterprise without manual integration.
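A heavily simplified sketch of the metadata idea: a catalog records which systems hold data about an entity, and a resolver uses that catalog to assemble one view. The connector functions, the `CATALOG` layout, and the returned values are invented for illustration; commercial Data Fabric products do far more (lineage, policy, caching, schema mapping).

```python
from typing import Callable


# Hypothetical connectors; real ones would call the CRM, ERP, and chat APIs.
def crm_fetch(customer_id: str) -> dict:
    return {"account_owner": "Asha", "tier": "gold"}


def erp_fetch(customer_id: str) -> dict:
    return {"open_invoices": 2}


def chat_fetch(customer_id: str) -> dict:
    return {"last_message": "Please resend the invoice"}


# Metadata catalog: which systems know about which business entity.
CATALOG: dict[str, list[dict]] = {
    "customer": [
        {"system": "crm", "fetch": crm_fetch},
        {"system": "erp", "fetch": erp_fetch},
        {"system": "chat", "fetch": chat_fetch},
    ],
}


def resolve(entity: str, key: str) -> dict:
    """Build a unified view of an entity, prefixing each field with its source system."""
    view: dict = {"entity": entity, "key": key, "sources": []}
    for entry in CATALOG.get(entity, []):
        record = entry["fetch"](key)
        for field_name, value in record.items():
            view[f'{entry["system"]}.{field_name}'] = value
        view["sources"].append(entry["system"])
    return view
```

Adding a new source then means registering one more catalog entry rather than writing a new point-to-point integration for every agent.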

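A: Yes. By using open-weight Small Language Models (SLMs) and local hardware (GPUs/NPUs), you can build a fully sovereign, air-gapped agent system that never sends data to the public cloud. This keeps personal data within your own infrastructure and supports DPDP compliance, although residency alone does not cover every obligation under the Act.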

A: We recommend the "Cost per Outcome" metric. Instead of measuring token costs (technological), measure the cost to resolve a customer ticket or generate a report (business outcome) using your new stack.
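The arithmetic behind "Cost per Outcome" is simple enough to sketch. The cost categories below (tokens, infrastructure, human review) are an assumed breakdown for illustration; use whichever cost lines your own finance model tracks.

```python
def cost_per_outcome(token_cost: float, infra_cost: float,
                     human_review_cost: float, outcomes: int) -> float:
    """Total spend for a period divided by business outcomes delivered in it.

    An 'outcome' is whatever the business counts as done,
    for example a resolved customer ticket or a generated report.
    """
    if outcomes <= 0:
        raise ValueError("outcomes must be a positive count")
    return (token_cost + infra_cost + human_review_cost) / outcomes
```

For example, 100 in token spend, 300 in infrastructure, and 100 in human review across 250 resolved tickets works out to 2.0 per ticket, a figure that can be compared directly with the cost of resolving the same ticket without the agent.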