LLM-as-a-Judge: How to Automate Your QA Pipeline (Python Tutorial)

You cannot manually inspect 10,000 interactions a day. If you are building AI Agents in 2026, you are likely facing the "Evaluation Bottleneck." Your developers are pushing code faster than your QA team can verify it.

The solution is LLM-as-a-Judge: a design pattern where a stronger model (like GPT-4o) acts as an impartial grader for your production model. This guide provides the exact Python code to build your own automated grading harness.

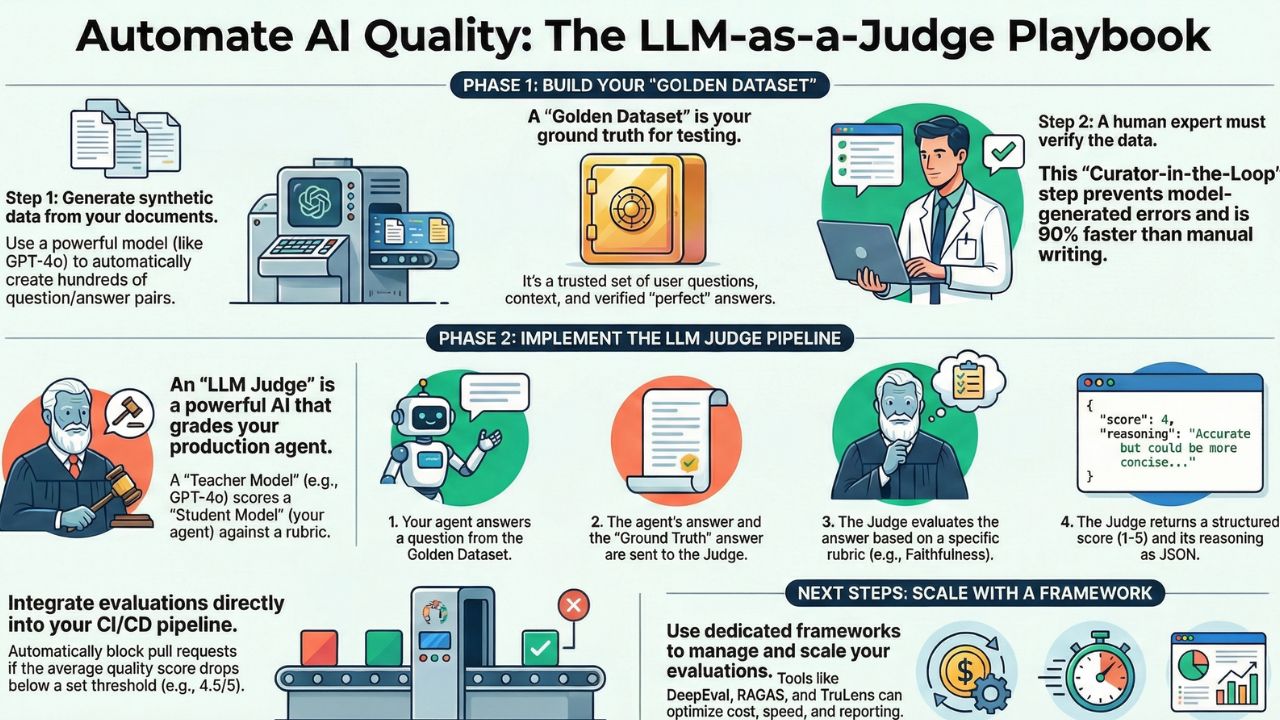

Prerequisite: Do you have a Golden Dataset? You need test data before you can build a judge. Read our guide on generating synthetic test cases.1. The Concept: Agents Grading Agents

The workflow is simple:

- You feed a question from your Golden Dataset to your Agent.

- The Agent generates a response.

- You pass both the Agent's Response and the Ground Truth to the "Judge Model".

- The Judge returns a Score (1-5) and a Reasoning string.

2. The Rubric (The Constitution)

An LLM is only as good as its instructions. We don't just ask "Is this good?"; we define specific metrics. Here is a standard Faithfulness Rubric.

3. The Implementation (Python)

We will use the OpenAI SDK with Structured Outputs (Pydantic) to ensure our judge always returns valid JSON. This is critical for CI/CD pipelines.

4. Scaling to Production

Once you have this function, you simply loop it over your CSV file of test cases. In a typical CI/CD pipeline, you would fail the build if the average_score drops below 4.5/5.

Note on Cost: Running GPT-4o on 500 test cases can cost $10-$20 per run. If this is too high for every commit, check our Comparison of RAGAS vs DeepEval to see tools that optimize these costs using "Cascading Eval" strategies.

Frequently Asked Questions

A: Yes, but Llama 3 70B is recommended over the 8B version for grading. Smaller models struggle with nuanced reasoning required to distinguish between 'Plausible' and 'Correct' answers.

A: LLMs often favor verbose answers (Length Bias). To counter this, force the judge to output a score first, or use a 'Reference-Based' rubric where the judge compares the output strictly to a Golden Answer.

A: It costs approximately $0.01 per evaluation run if you use optimized prompts. For large regression suites (1000+ tests), we recommend using GPT-4o-mini for the first pass and GPT-4o only for ambiguous cases.

References & Resources

- Judging LLM-as-a-Judge (arXiv) - The foundational paper on the reliability of AI graders.

- OpenAI Evals Cookbook - Official patterns for building evaluation harnesses.