How to Build a "Golden Dataset" for Agent Testing (Step-by-Step Guide)

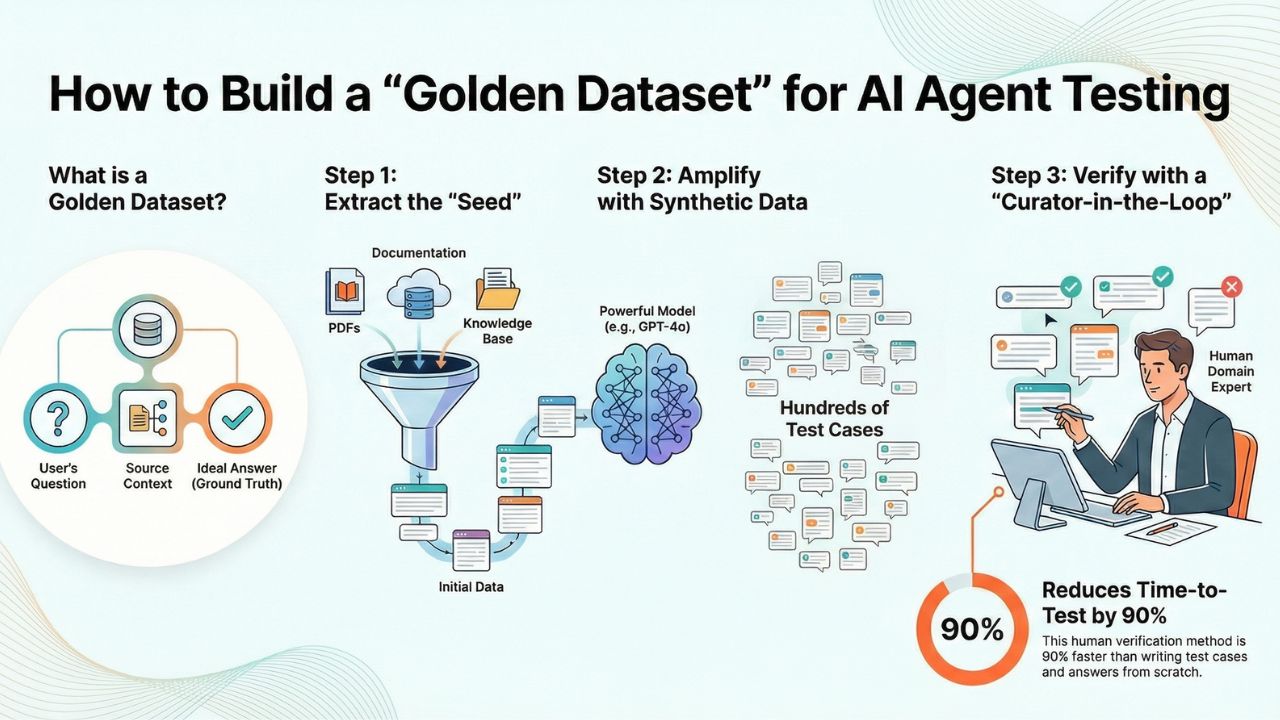

The biggest bottleneck in deploying Generative AI isn't the model—it's the Evaluation Gap. You cannot improve what you cannot measure. And to measure an AI Agent, you need a "Golden Dataset": a trusted set of Questions and Verified Answers (Ground Truth).

But manually writing 100+ QA pairs based on your documentation is tedious, unscalable, and prone to human error. In this guide, we will automate this using Synthetic Data Amplification.

What is a Golden Dataset?

In the context of RAG (Retrieval-Augmented Generation), a Golden Dataset usually consists of three columns:

- Input: The user's query (e.g., "How do I reset my API key?").

- Context (Optional): The specific document chunk containing the answer.

- Ground Truth: The ideal, factually correct answer your agent should output.
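To make the three columns concrete, here is a minimal sketch of one record as a Python dataclass. The class name `GoldenRecord` and the field names are illustrative assumptions, not a required schema, and the answer text is a placeholder.

```python
from dataclasses import dataclass, asdict
from typing import Optional


@dataclass
class GoldenRecord:
    """One row of the dataset (hypothetical schema mirroring the three columns)."""
    input: str                     # the user's query
    ground_truth: str              # the verified, ideal answer
    context: Optional[str] = None  # optional source chunk containing the answer


record = GoldenRecord(
    input="How do I reset my API key?",
    ground_truth="(verified answer goes here)",  # placeholder, not a real answer
)
row = asdict(record)  # plain dict, ready to be written as a CSV row
```

Keeping `context` optional matches the list above: a record is still usable for end-to-end checks when the source chunk is unknown.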

The 3-Step Pipeline

Extract the "Seed"

Don't start from a blank page. Extract "Seed Data" from your existing knowledge base. This could be PDF chunks, Notion pages, or JSON exports of your product documentation.
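One way to turn exported documentation into seed chunks is to pack paragraphs greedily up to a size limit. The sketch below assumes the knowledge base has already been converted to plain text; the function name `split_into_chunks` and the 1000-character default are assumptions chosen for illustration.

```python
def split_into_chunks(text: str, max_chars: int = 1000) -> list[str]:
    """Pack paragraphs into chunks of roughly max_chars characters.

    A single paragraph longer than max_chars is kept whole as its own chunk.
    """
    chunks: list[str] = []
    current = ""
    for paragraph in text.split("\n\n"):
        paragraph = paragraph.strip()
        if not paragraph:
            continue
        candidate = f"{current}\n\n{paragraph}" if current else paragraph
        if len(candidate) <= max_chars:
            current = candidate
        else:
            if current:
                chunks.append(current)
            current = paragraph  # start a new chunk with this paragraph
    if current:
        chunks.append(current)
    return chunks
```

Splitting on paragraph boundaries keeps each chunk self-contained, which matters because the generated question must be answerable from that chunk alone.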

Synthetic Amplification (The Code)

We will use a stronger model (like GPT-4o) to "read" your content and generate test cases for you. This helps your tests cover edge cases you might miss.

Here is a Python snippet using the OpenAI Python SDK (v1 or later) to generate a QA pair from a text chunk:

from openai import OpenAI

# The client reads the OPENAI_API_KEY environment variable by default
client = OpenAI()

# The prompt that turns content into test cases
GENERATION_PROMPT = """
You are an expert QA Engineer.
Given the following context from a technical document, generate
a complex user question and the correct answer based ONLY on this text.

Context: {context_chunk}

Output Format:
Question: [Insert Question]
Ground_Truth: [Insert Answer]
"""

def generate_synthetic_data(context_chunk):
    """Ask the model for one QA pair grounded in the given chunk."""
    response = client.chat.completions.create(
        model="gpt-4o",
        messages=[{"role": "user", "content": GENERATION_PROMPT.format(context_chunk=context_chunk)}],
    )
    # The reply is raw text in the "Question: ... Ground_Truth: ..." format
    return response.choices[0].message.content
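The model returns free text, so it still has to be parsed before it can become a CSV row. The following is a minimal sketch, assuming the model followed the "Question: / Ground_Truth:" format requested in the prompt. The helper names `parse_qa` and `write_candidates` and the column names are hypothetical.

```python
import csv
import re
from typing import Optional

# Matches the "Question: ... Ground_Truth: ..." layout requested by the prompt
QA_PATTERN = re.compile(r"Question:\s*(.+?)\s*Ground_Truth:\s*(.+)", re.DOTALL)


def parse_qa(raw: str) -> Optional[dict]:
    """Return the parsed pair, or None if the model ignored the format."""
    match = QA_PATTERN.search(raw)
    if match is None:
        return None
    return {"input": match.group(1).strip(), "ground_truth": match.group(2).strip()}


def write_candidates(rows: list, path: str) -> None:
    """Write parsed rows to a CSV file for later human review."""
    with open(path, "w", newline="", encoding="utf-8") as f:
        # Rows without a "context" key get an empty cell in that column
        writer = csv.DictWriter(f, fieldnames=["input", "context", "ground_truth"])
        writer.writeheader()
        writer.writerows(rows)
```

Returning `None` on a format mismatch lets the caller skip or retry a chunk instead of writing a malformed row into the dataset.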

The "Curator-in-the-Loop"

Crucial Step: Synthetic data can hallucinate. You must have a human (a Domain Expert) review the generated CSV file.

They don't need to write the answers; they just need to verify them. This can reduce the time-to-test by as much as 90% compared to writing tests from scratch.
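One simple way to record the reviewer's decision is an extra column in the CSV. The sketch below assumes a hypothetical `review_status` column that the domain expert fills in with "approved" or "rejected"; only approved rows are promoted to the Golden Dataset.

```python
import csv


def load_verified(path: str) -> list[dict]:
    """Return only the rows a reviewer has explicitly marked as approved."""
    with open(path, newline="", encoding="utf-8") as f:
        return [
            row
            for row in csv.DictReader(f)
            # Missing or empty status counts as "not yet reviewed"
            if (row.get("review_status") or "").strip().lower() == "approved"
        ]
```

Treating an empty status as unreviewed means nothing enters the dataset by default, so an unverified synthetic answer cannot slip through.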

Next Steps: Running the Evals

Once you have this CSV file (your Golden Dataset), you are ready to feed it into an evaluation framework to automate your testing.
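Before adopting a full framework, the shape of an evaluation run can be sketched in a few lines. This example assumes a CSV with hypothetical `input` and `ground_truth` columns and an `agent` callable that maps a question to an answer. The token-overlap F1 score is a crude stand-in for the richer metrics that evaluation frameworks provide.

```python
import csv
import re
from typing import Callable


def token_f1(prediction: str, reference: str) -> float:
    """Crude lexical overlap between two answers, in the range [0, 1]."""
    pred = re.findall(r"\w+", prediction.lower())
    ref = re.findall(r"\w+", reference.lower())
    if not pred or not ref:
        return 0.0
    common = sum(min(pred.count(t), ref.count(t)) for t in set(pred))
    if common == 0:
        return 0.0
    precision, recall = common / len(pred), common / len(ref)
    return 2 * precision * recall / (precision + recall)


def run_eval(path: str, agent: Callable[[str], str], threshold: float = 0.5) -> float:
    """Return the fraction of rows whose answer scores at or above threshold."""
    with open(path, newline="", encoding="utf-8") as f:
        rows = list(csv.DictReader(f))
    if not rows:
        return 0.0
    passed = sum(
        token_f1(agent(row["input"]), row["ground_truth"]) >= threshold
        for row in rows
    )
    return passed / len(rows)
```

Lexical overlap penalizes correct answers that are phrased differently, so treat the resulting pass rate as a smoke test rather than a final quality measure.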

Next: Choose Your Evaluation Framework. Read our comparison of RAGAS, DeepEval, and TruLens to see where to plug in your new dataset.

Frequently Asked Questions

Q: How often should I update my Golden Dataset?

A: Every time your underlying documentation or product features change significantly. Treat your Golden Dataset like software code: it requires versioning and maintenance.
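One lightweight way to notice when a record needs maintenance is to store a fingerprint of the source chunk alongside it and compare on each documentation update. This is a sketch under that assumption; the helper names are hypothetical, and it complements rather than replaces keeping the dataset file under version control.

```python
import hashlib


def chunk_fingerprint(text: str) -> str:
    """SHA-256 fingerprint of a source chunk, ignoring whitespace differences."""
    normalized = " ".join(text.split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def is_stale(stored_fingerprint: str, current_chunk: str) -> bool:
    """True when the chunk no longer matches what the record was built from."""
    return stored_fingerprint != chunk_fingerprint(current_chunk)
```

Records flagged as stale can be sent back through generation and human review instead of regenerating the whole dataset.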

Q: Can I use an LLM to grade my agent's answers instead?

A: You can (this is called "LLM-as-a-Judge"), but you still need a baseline of truth to compare against. Without a Golden Dataset, the judge has no reference point for factual accuracy.
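To illustrate why the judge needs a reference, here is a minimal sketch of a judge prompt that includes the ground truth, plus a strict parser for the reply. The prompt wording and the helper names are assumptions, and sending the prompt to a model is left out.

```python
JUDGE_PROMPT = """You are grading an AI agent's answer.

Question: {question}
Reference answer (ground truth): {ground_truth}
Agent answer: {answer}

Reply with exactly one word: CORRECT or INCORRECT."""


def build_judge_prompt(question: str, ground_truth: str, answer: str) -> str:
    """Fill the template so the judge compares against the verified answer."""
    return JUDGE_PROMPT.format(
        question=question, ground_truth=ground_truth, answer=answer
    )


def parse_verdict(judge_reply: str) -> bool:
    """True only for a clear CORRECT; anything else counts as a failure."""
    return judge_reply.strip().upper().rstrip(".") == "CORRECT"
```

Counting any unexpected reply as a failure keeps an off-format judge response from inflating the pass rate.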

Q: Can I automate the verification step as well?

A: Risky. If you automate the verification, you risk a feedback loop, similar in spirit to "Model Collapse", where errors verify errors. We recommend keeping a human in the loop for the Ground Truth creation, even if you automate the subsequent testing.
