Why Selenium is Dead: The Chief Quality Officer’s Guide to Testing AI Agents

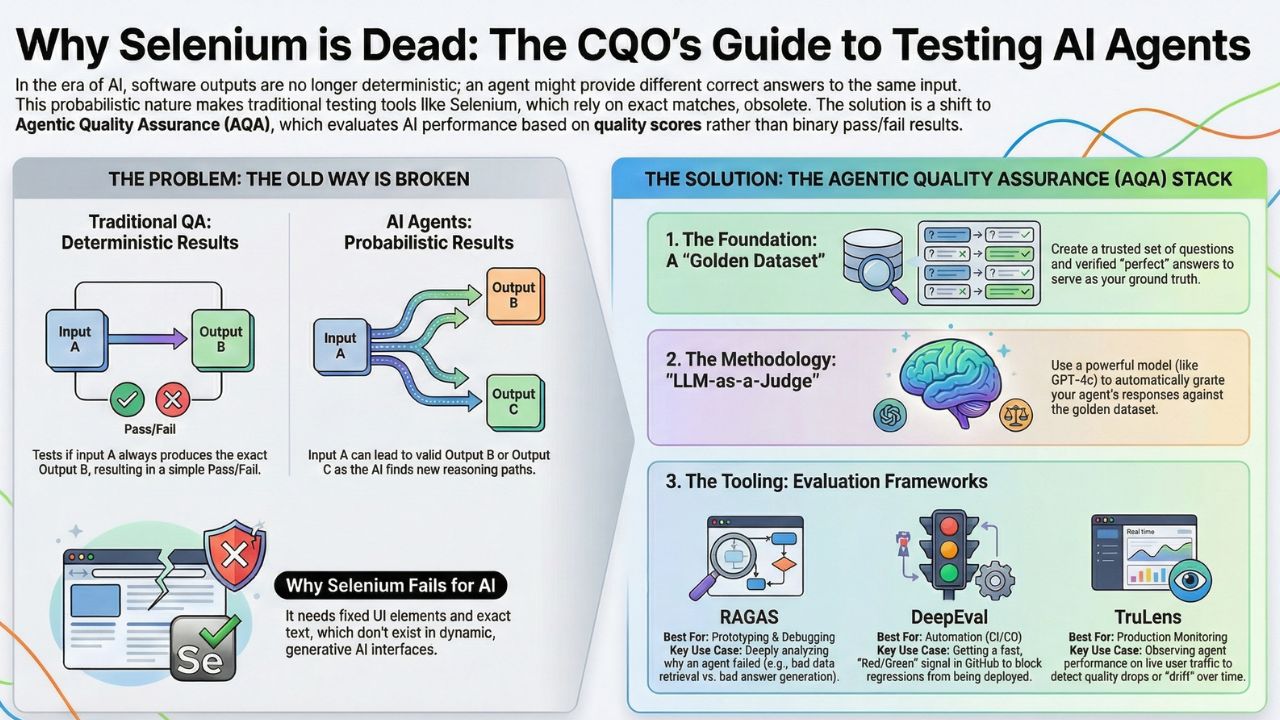

In the last era of software (Web 2.0), testing was simple: Input A always led to Output B.

If it didn't, the test failed.

In the Agentic AI era (2026), this logic is broken.

Input A might lead to Output B today, and Output C tomorrow—not because of a bug, but because the agent chose a different reasoning path.

This guide introduces Agentic Quality Assurance (AQA) and the shift to Eval-Driven Development (EDD).

Instead of writing binary "Tests" (Pass/Fail), we write "Evals"—scored assessments (0.0 to 1.0) that measure:

- Faithfulness: Did the agent hallucinate facts not present in the context?

- Answer Relevance: Did the agent actually answer the user's question, or just ramble politely?

- Agent Loop Efficiency: Did it solve the problem in 3 steps (cheap) or 30 steps (expensive)?

2. The New Stack: RAGAS, DeepEval, and TruLens

You cannot manually review 10,000 agent conversations in Excel. You need automated metrics that run in your CI/CD pipeline.

The market has consolidated around three major frameworks:

- RAGAS (Retrieval Augmented Generation Assessment): The industry standard for RAG. It mathematically scores your retrieval precision and generation faithfulness.

- DeepEval: The "PyTest for LLMs." It integrates directly into GitHub Actions to block "hallucinating" pull requests before they merge.

- TruLens: The Observability leader. Best for tracking your agent's performance in production to detect "drift" over time.

Strategic Advice: Don't just pick a tool; pick a metric.

- If your agent is customer-facing, optimize for Answer Relevance.

- If it is an internal legal bot, optimize for Faithfulness to prevent liability.

3. Methodology: Implementing "LLM-as-a-Judge"

The bottleneck in AI testing is the human. Humans are slow, expensive ($50/hour), and inconsistent.

The solution is LLM-as-a-Judge: using a highly capable "Teacher Model" (like GPT-4o) to grade the homework of a "Student Model" (like Llama-3).

The Workflow:

- The Student: Your specialized agent generates an answer.

- The Judge: A frontier model (GPT-4o) reviews the answer against a strict "Rubric" you define.

- The Score: The Judge assigns a score (1-5) and provides a reasoning for the grade.

(Insert Diagram: Visualizing the Student-Judge-Rubric Loop)

Get the Code: LLM-as-a-Judge Automation Guide A Python tutorial on automating your QA pipeline and replacing manual testing with AI agents.4. The Foundation: Building a "Golden Dataset"

You cannot evaluate an agent if you don't know what "Good" looks like.

A Golden Dataset is your "Ground Truth"—a collection of 50-100 high-quality Q&A pairs that represent perfect behavior.

The Trap: Do not write these manually. It takes too long.

The Fix: Use a Synthetic Data Generator to create 1,000 variations of user questions, then have your senior experts verify a sample subset. This becomes your regression test suite that runs every night.

Step-by-Step Guide: How to Build a "Golden Dataset" Learn to generate "Ground Truth" data using synthetic tools and human-in-the-loop verification.

5. Frequently Asked Questions (FAQ)

A: Selenium relies on "Selectors" (CSS/XPath) and exact text matches. AI interfaces are dynamic (Generative UI), and the text output changes every time. Selenium tests would be "flaky" 90% of the time. Agentic QA uses semantic similarity, not string matching.

A: Traditional QA tests for correctness (Pass/Fail) on static inputs. Agentic QA tests for quality (0.0 to 1.0 scores) on dynamic inputs, measuring probabilistic factors like tone, relevance, and reasoning capabilities.

A: EDD is a methodology where developers write the "Evaluation Metric" (e.g., "The response must contain a citation from the PDF") before they write the agent's prompt. It ensures the agent is optimized for the specific business goal from Day 1.

A: You use a "Faithfulness" metric. This measures if the information in the agent's answer can be found solely in the retrieved context. If the agent claims a fact that is not in the source documents, it is flagged as a hallucination.