Algorithmic Management Ethics: A CIO’s Checklist for "Human-in-the-Loop" Governance

Target Audience: CIOs, CROs (Chief Risk Officers), and Legal Heads in India

In traditional management, if a human manager discriminates against a job applicant, you fire the manager. In agentic AI management, if your autonomous hiring agent discriminates against 5,000 applicants because of a biased dataset, you don’t just have a personnel issue. You have a potential class-action lawsuit and a possible violation of India’s Digital Personal Data Protection (DPDP) Act.

As we shift to 2026, managing AI risks in decision-making is no longer optional. It is the primary responsibility of the Indian CIO.

This guide provides a practical, printable framework for human-in-the-loop governance. It ensures your digital workers operate with the same ethical standards as your human ones.

Back to the Hub: To see how these ethical agents fit into your team structure, read the Org Chart Guide.

2. The Core Principle: "Human-in-the-Loop" (HITL)

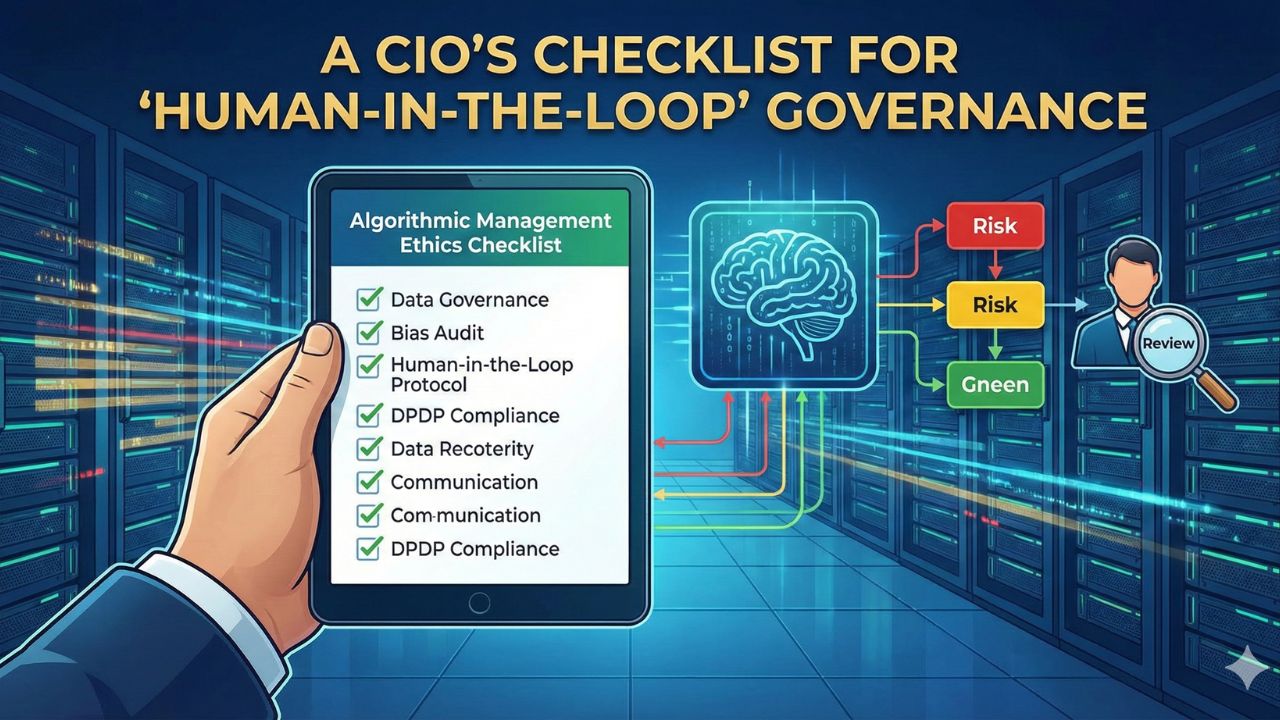

Human-in-the-Loop (HITL) is a governance protocol where AI models cannot finalize high-stakes decisions without human review.

When is HITL Mandatory?

Not every task needs a human. Use this "Traffic Light" system to decide:

- Red Zone (Mandatory HITL): Decisions affecting life, livelihood, or liberty. Examples: Loan rejections, hiring/firing recommendations, medical diagnosis, insurance claim denials.

- Yellow Zone (Audit Required): Decisions affecting customer experience or minor financial allocations. Examples: Dynamic pricing, marketing segmentation, L1 support responses.

- Green Zone (Fully Autonomous): Low-risk, reversible operational tasks. Examples: Scheduling meetings, data entry, summarizing emails.
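One way to make the "Traffic Light" system enforceable is to encode it as a gate in front of every agent decision. The sketch below is illustrative only: the task names, the `Decision` class, and the `finalize` function are hypothetical, and defaulting unknown tasks to the Red Zone is an assumption rather than a rule stated above.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Zone(Enum):
    RED = "mandatory_hitl"
    YELLOW = "audit_required"
    GREEN = "fully_autonomous"


# Hypothetical task names, mapped to zones using the examples in the list above.
ZONE_BY_TASK = {
    "loan_rejection": Zone.RED,
    "hiring_recommendation": Zone.RED,
    "medical_diagnosis": Zone.RED,
    "insurance_claim_denial": Zone.RED,
    "dynamic_pricing": Zone.YELLOW,
    "marketing_segmentation": Zone.YELLOW,
    "l1_support_response": Zone.YELLOW,
    "meeting_scheduling": Zone.GREEN,
    "data_entry": Zone.GREEN,
    "email_summary": Zone.GREEN,
}


def classify(task_type: str) -> Zone:
    # Assumption: unknown task types are treated as Red Zone until someone classifies them.
    return ZONE_BY_TASK.get(task_type, Zone.RED)


@dataclass
class Decision:
    task_type: str
    proposed_outcome: str
    reviewer: Optional[str] = None  # filled in once a human has approved the decision


def finalize(decision: Decision, audit_log: list) -> str:
    """Block Red Zone decisions without a reviewer; log Yellow Zone ones for audit."""
    zone = classify(decision.task_type)
    if zone is Zone.RED and decision.reviewer is None:
        return "pending_human_review"
    if zone is Zone.YELLOW:
        audit_log.append((decision.task_type, decision.proposed_outcome))
    return "finalized"
```

With this gate, a call such as `finalize(Decision("loan_rejection", "reject"), log)` stays in `pending_human_review` until a reviewer is recorded on the decision.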

3. The CIO’s Checklist for 2026

Indian executives can use this algorithmic accountability checklist before deploying any Agentic AI workflow.

Phase 1: Data & Input Governance

Phase 2: The "Decision Logic" Audit

Phase 3: The Human Handoff Protocol

4. Specific Use Cases: Algorithmic Bias in HR & Finance

Case A: Algorithmic Bias in HR (Hiring Agents)

The Risk: An AI agent trained on 10 years of resumes filters out candidates with career gaps (often women returning to work) or candidates from non-premium colleges.

The Fix:

- Blind Resume Processing: Program the agent to ignore names, genders, and locations during the initial screening.

- Outcome Monitoring: Weekly reports comparing the demographic mix of applicants vs. shortlists.
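The two fixes above can be sketched in a few lines. This is a minimal illustration, not a production screening pipeline: the field names (`name`, `gender`, `location`) and the helper functions are assumptions, and the monitoring step presumes that demographic attributes are stored separately from what the screening agent sees.

```python
from collections import Counter

# Hypothetical field names; adapt them to your applicant-tracking schema.
BLIND_FIELDS = {"name", "gender", "location"}


def blind(resume: dict) -> dict:
    """Return a copy of the resume without identity fields, for initial screening."""
    return {k: v for k, v in resume.items() if k not in BLIND_FIELDS}


def group_shares(records: list, attribute: str) -> dict:
    """Share of each group (for example, each gender) within a list of records."""
    counts = Counter(r[attribute] for r in records)
    total = sum(counts.values())
    return {group: n / total for group, n in counts.items()}


def mix_gap(applicants: list, shortlist: list, attribute: str) -> dict:
    """Shortlist share minus applicant share per group; negative means under-represented."""
    applied = group_shares(applicants, attribute)
    selected = group_shares(shortlist, attribute)
    return {g: selected.get(g, 0.0) - share for g, share in applied.items()}
```

A weekly report could call `mix_gap(applicants, shortlist, "gender")` and flag any group whose gap falls below a threshold that the HR and legal teams agree on.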

Case B: Lending Bias (Fintech Agents)

The Risk: An agent charges higher interest rates to pincodes associated with lower-income demographics, creating a "digital redlining" effect.

The Fix:

- Proxy Variable Removal: Ensure the model isn't using "Pincode" as a proxy for "Creditworthiness."

- Fairness Metrics: Use metrics like "Demographic Parity" to audit approval rates across different user segments.
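As a rough illustration of a demographic parity audit, the sketch below compares approval rates across user segments and reports the largest gap. The function names and the input shape (pairs of segment label and approval flag) are assumptions made for this example. What counts as an acceptable gap is a policy decision that this code does not settle.

```python
from collections import defaultdict


def approval_rates(decisions):
    """decisions: iterable of (segment, approved) pairs -> approval rate per segment."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for segment, ok in decisions:
        totals[segment] += 1
        approved[segment] += int(ok)
    return {s: approved[s] / totals[s] for s in totals}


def demographic_parity_gap(decisions):
    """Largest difference in approval rate between any two segments (0.0 means parity)."""
    rates = approval_rates(decisions)
    if not rates:
        return 0.0  # nothing to compare
    return max(rates.values()) - min(rates.values())
```

Running the same audit with pincode clusters as the segment label is one way to check whether "Pincode" is still influencing outcomes after it has been removed as an input.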

5. Governance Structure: The AI Ethics Board

By 2026, mature Indian organizations will establish an internal AI Ethics Board.

- Who sits on it? CIO, Head of HR, Chief Legal Officer, and an external Ethics Consultant.

- What do they do? They review "Red Zone" AI deployments. No high-risk agent goes live without their sign-off.

- Frequency: Quarterly audits of all live AI agents.

6. Frequently Asked Questions (FAQ)

A: Yes. If you are processing the personal data of individuals in India, you (the Data Fiduciary) remain responsible for compliance, regardless of where the AI model (Data Processor) is hosted.

A: Maintain a "Model Card" for every agent. This document lists: Who built it, what data was used, its intended purpose, its known limitations, and the date of its last bias audit.
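A Model Card can be as simple as a structured record kept next to the agent's configuration. The sketch below is one possible shape, with fields taken from the list in the answer above. The class name, the `audit_overdue` helper, and the 90-day default (chosen to mirror a quarterly audit cycle) are assumptions, not a standard format.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class ModelCard:
    agent_name: str
    built_by: str
    training_data: str
    intended_purpose: str
    known_limitations: list = field(default_factory=list)
    last_bias_audit: Optional[date] = None  # None means the agent was never audited

    def audit_overdue(self, today: date, max_age_days: int = 90) -> bool:
        """True if the agent has never been audited or the last audit is too old."""
        if self.last_bias_audit is None:
            return True
        return (today - self.last_bias_audit).days > max_age_days
```

A deployment script could refuse to promote any agent whose card returns `True` from `audit_overdue(date.today())`.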

A: Absolutely. Under Indian law, the legal entity deploying the technology holds liability. "The algorithm did it" is not a valid legal defense.

7. Sources & References

- The 2026 AI Compliance Framework: Copyright, Data Privacy, and Security

- Reserve Bank of India (RBI): "Regulatory Framework for Digital Lending" – Guidelines on algorithmic lending.

- NASSCOM: "Responsible AI for India" – Frameworks for ethical AI adoption in Indian IT.

- IBM Policy Lab: "Precision Regulation for Artificial Intelligence" – Concepts on risk-based regulation (Red/Yellow/Green zones).

- Harvard Business Review: "A Leader’s Guide to AI Ethics" – Best practices for creating AI ethics boards.