The 2026 DPDP Compliance Checklist for AI Agents & Autonomous Swarms

For Program Managers and Compliance Officers, the Digital Personal Data Protection (DPDP) Act is no longer a theoretical policy document—it is a functional requirement. With the Act fully enforceable as of January 2026, the era of "move fast and break data" is over.

This checklist translates the legal text into engineering tasks. Use it to audit your "Agent Swarms" before they go into production.

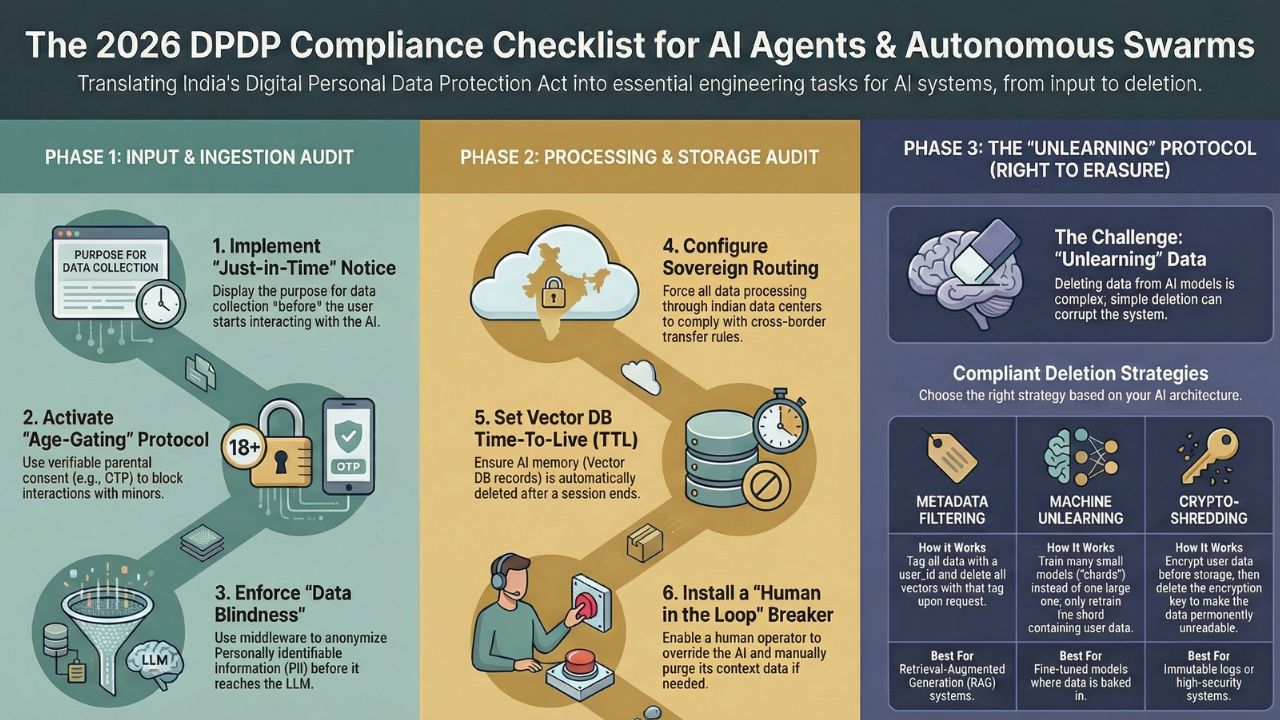

Phase 1: Input & Ingestion Audits

1. The "Data Blind" Test

Can your agent fulfill the user's intent without seeing raw PII? Action: Implement middleware (like Microsoft Presidio) that replaces names/emails with tokens (e.g., `User_123`) before the prompt hits the LLM.
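As a rough illustration of the tokenization step, the sketch below swaps emails and Indian mobile numbers for stable tokens before the prompt leaves your service. It is a regex-only stand-in and not the Presidio API. The `Pseudonymizer` class, its token format, and both patterns are assumptions made for this example, and regexes alone cannot detect names.

```python
import re

# Deliberately simple patterns for illustration; real PII detection needs far more coverage.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"(?<!\d)(?:\+91[\s-]?)?[6-9]\d{9}(?!\d)")


class Pseudonymizer:
    """Hypothetical helper: swaps detected PII for tokens and remembers the mapping."""

    def __init__(self):
        self._token_by_value = {}  # raw value -> token
        self._value_by_token = {}  # token -> raw value

    def _token_for(self, kind, value):
        # Reuse the same token when the same value appears again.
        if value not in self._token_by_value:
            token = f"{kind}_{len(self._token_by_value) + 1}"
            self._token_by_value[value] = token
            self._value_by_token[token] = value
        return self._token_by_value[value]

    def mask(self, text):
        """Returns text that is safe to send to the LLM."""
        text = EMAIL_RE.sub(lambda m: self._token_for("Email", m.group()), text)
        return PHONE_RE.sub(lambda m: self._token_for("Phone", m.group()), text)

    def unmask(self, text):
        """Restores raw values in the model's reply, inside your own boundary."""
        # Longest tokens first, so Email_1 never clobbers part of Email_10.
        for token in sorted(self._value_by_token, key=len, reverse=True):
            text = text.replace(token, self._value_by_token[token])
        return text
```

Keeping the mapping on your side means the model only ever sees tokens, while the reply can still be personalised after `unmask` runs.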

2. The "Age-Gating" Protocol (Section 9)

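Does the agent interact with the public? If yes, it must identify users under 18 and hold off processing their data until a parent or lawful guardian gives verifiable consent. Action: Integrate a "Verifiable Parental Consent" handshake (via DigiLocker or OTP) before the chat session initializes.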
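The gate itself can be a small pure function that runs before any session is created. The sketch below assumes an upstream identity check (DigiLocker, OTP, or similar) has already produced a date of birth and a parental consent flag. `VerificationResult` and `may_start_session` are hypothetical names, and the actual verification calls are out of scope here.

```python
from dataclasses import dataclass
from datetime import date

ADULT_AGE = 18  # the DPDP Act treats anyone who has not completed 18 years as a child


@dataclass
class VerificationResult:
    """Hypothetical output of an upstream identity check."""
    date_of_birth: date
    parental_consent_verified: bool = False


def age_on(dob, today):
    """Completed years between dob and today."""
    years = today.year - dob.year
    if (today.month, today.day) < (dob.month, dob.day):
        years -= 1  # birthday has not happened yet this year
    return years


def may_start_session(result, today):
    """Adults pass; minors pass only with verified parental consent."""
    if age_on(result.date_of_birth, today) >= ADULT_AGE:
        return True
    return result.parental_consent_verified
```

Passing `today` in explicitly keeps the check deterministic, which makes the boundary case (the user's 18th birthday) easy to test.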

3. The "Just-in-Time" Notice

Are you collecting data without warning? Action: The agent must display a "Purpose Notice" (Why we need this data) and a link to the Consent Manager before the user types their first prompt.
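One way to enforce the notice is to make the session object refuse prompts until the notice has been acknowledged. This is a minimal sketch. `ChatSession`, `NoticeNotAcknowledged`, and the example URL used with it are hypothetical, and a real system would also persist the acknowledgement as evidence.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


class NoticeNotAcknowledged(Exception):
    """Raised when a prompt arrives before the purpose notice was acknowledged."""


@dataclass
class ChatSession:
    purpose_notice: str       # why the data is needed
    consent_manager_url: str  # hypothetical link to your Consent Manager
    acknowledged_at: Optional[datetime] = None

    def opening_message(self):
        """Text to show before the user can type anything."""
        return f"{self.purpose_notice}\nManage consent: {self.consent_manager_url}"

    def acknowledge(self, now=None):
        """Records when the user accepted the notice."""
        self.acknowledged_at = now or datetime.now(timezone.utc)

    def submit_prompt(self, prompt):
        if self.acknowledged_at is None:
            raise NoticeNotAcknowledged("show the purpose notice first")
        return prompt  # hand off to the agent from here
```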

Phase 2: Processing & Storage Audits

4. Sovereign Routing (Cross-Border)

Where is the inference running? Action: Pin your LLM endpoints (OpenAI/Azure/AWS) to India regions, for example AWS ap-south-1 (Mumbai) or ap-south-2 (Hyderabad), or Azure Central India (Pune). Block fallback to regions outside India, such as US-East.
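A cheap guard is to validate every configured endpoint against a region allowlist at startup, so a misconfigured fallback fails loudly instead of silently routing abroad. The sketch below assumes AWS-style hostnames of the form `service.region.amazonaws.com` and the AWS region codes `ap-south-1` (Mumbai) and `ap-south-2` (Hyderabad). The function names are hypothetical, and other providers would need their own parsing.

```python
from urllib.parse import urlparse

ALLOWED_REGIONS = {"ap-south-1", "ap-south-2"}  # AWS Mumbai and Hyderabad


class RegionNotAllowed(Exception):
    """Raised when an endpoint is outside the allowlist."""


def region_of(endpoint_url):
    """Extracts the region label from hosts shaped like service.region.amazonaws.com."""
    host = urlparse(endpoint_url).hostname or ""
    parts = host.split(".")
    if len(parts) >= 4 and parts[-2:] == ["amazonaws", "com"]:
        return parts[-3]
    return None  # unknown shape: treated as not allowed by the caller


def require_india_region(endpoint_url):
    """Returns the URL unchanged if its region is allowlisted, else raises."""
    region = region_of(endpoint_url)
    if region not in ALLOWED_REGIONS:
        raise RegionNotAllowed(f"{endpoint_url!r} resolves to region {region!r}")
    return endpoint_url
```

Rejecting unrecognised hostnames by default is the safer choice here: an endpoint whose region cannot be determined is blocked rather than trusted.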

5. Vector Database TTL (Time-To-Live)

Is the agent's memory permanent? Action: Set a strict TTL on Vector DB indexes. Ensure that session history is flushed after the ticket is resolved, unless explicit consent for "Training" is given.
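Many vector databases offer their own expiry or delete-by-filter features, and the details differ per product. The in-memory sketch below only illustrates the policy: every record gets an expiry unless the user consented to retention for training. `SessionMemory` and its parameters are hypothetical.

```python
import time


class SessionMemory:
    """In-memory stand-in for a vector store that enforces a per-record TTL."""

    def __init__(self, ttl_seconds, clock=time.time):
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so expiry can be tested without sleeping
        self._records = {}   # record_id -> (expires_at or None, embedding)

    def add(self, record_id, embedding, keep_for_training=False):
        # Records kept under explicit training consent get no expiry.
        expires_at = None if keep_for_training else self._clock() + self._ttl
        self._records[record_id] = (expires_at, embedding)

    def purge_expired(self):
        """Deletes expired records and returns how many were removed."""
        now = self._clock()
        expired = [rid for rid, (exp, _) in self._records.items()
                   if exp is not None and exp <= now]
        for rid in expired:
            del self._records[rid]
        return len(expired)

    def __contains__(self, record_id):
        return record_id in self._records
```

In practice `purge_expired` would run on a schedule and also when a ticket closes, so that flushing does not depend on the next read.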

6. The "Human in the Loop" Circuit Breaker

Can the agent be stopped? Action: Ensure a human operator can halt the agent mid-run and manually purge its context data if it begins hallucinating or looping.
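A minimal sketch of such a breaker, assuming the agent runs as a sequence of discrete steps and keeps its working context in a dict: the operator calls `trip()`, which purges the context and makes the next step check fail. `CircuitBreaker`, `AgentHalted`, and `run_agent` are hypothetical names, not part of any agent framework.

```python
import threading


class AgentHalted(Exception):
    """Raised when the operator has stopped the agent."""


class CircuitBreaker:
    def __init__(self, context):
        self._context = context           # the agent's working memory (a dict)
        self._halted = threading.Event()  # thread-safe flag an operator can set

    def trip(self):
        """Operator action: stop the agent and purge its context."""
        self._halted.set()
        self._context.clear()

    def check(self):
        if self._halted.is_set():
            raise AgentHalted("operator halted the agent")


def run_agent(steps, breaker):
    """Runs zero-argument step callables in order, checking the breaker before each."""
    for step in steps:
        breaker.check()
        step()
```

The check runs between steps, so a single long-running step is not interrupted. Keeping steps short is what makes the override responsive.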

Phase 3: The "Unlearning" Protocol

Compliance doesn't end when the chat closes. The "Right to Erasure" (Section 12) means you must be able to delete the user's influence on your model.

- Vector Deletion: Tag every vector with a `user_id` metadata field so that all of a user's records can be found and deleted with a single filtered query.

- LoRA Unlearning: If you fine-tuned a model on user data, have a rollback plan to revert to a base checkpoint if a significant erasure request comes in.
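The vector deletion item can be sketched as a store that refuses untagged writes and erases by tag. This is an in-memory illustration with hypothetical names (`TaggedVectorStore`, `erase_user`). A production vector database would expose its own metadata filter for the delete.

```python
from dataclasses import dataclass


@dataclass
class VectorRecord:
    record_id: str
    embedding: list
    metadata: dict


class TaggedVectorStore:
    """In-memory stand-in that makes the user_id tag mandatory."""

    def __init__(self):
        self._records = []

    def upsert(self, record):
        # Reject untagged vectors at write time, so erasure can never miss one.
        if "user_id" not in record.metadata:
            raise ValueError("refusing to store a vector without a user_id tag")
        self._records = [r for r in self._records if r.record_id != record.record_id]
        self._records.append(record)

    def erase_user(self, user_id):
        """Deletes every record tagged with user_id and returns the count removed."""
        before = len(self._records)
        self._records = [r for r in self._records if r.metadata["user_id"] != user_id]
        return before - len(self._records)

    def count(self):
        return len(self._records)
```

Returning the number of deleted records gives you a figure to log against the erasure request for audit purposes.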

Frequently Asked Questions (FAQ)

No. Under the DPDP Act, consent must be "granular, specific, and revocable." For AI agents, this means using a Consent Manager framework where users can view and revoke specific tokens via a dashboard.

Yes. Employees are "Data Principals" too. If an internal HR bot processes employee health or financial data, it requires the same level of security and consent as a customer-facing bot.

The Act places the burden of proof on the Data Fiduciary. You must implement "verifiable" consent mechanisms (e.g., Tokenized Aadhaar or DigiLocker verification) rather than relying on self-declaration.

Sources & References

- MeitY Official Gazette: The Digital Personal Data Protection Act, 2023.

- AWS Compliance: Data Privacy and Protection in India (Availability Zones).

- NITI Aayog: Data Empowerment and Protection Architecture (DEPA) Framework.

- Microsoft Presidio: Open source SDK for PII anonymization and masking.