Architecting Consent Managers for the Agentic Economy (DEPA & AA)

For Enterprise Architects, the challenge of the DPDP Act isn't just compliance—it's interoperability. As we move toward "Bot-to-Bot" commerce (e.g., your Procurement Agent negotiating with a Supplier Agent on ONDC), human-speed "clickwrap" agreements are obsolete.

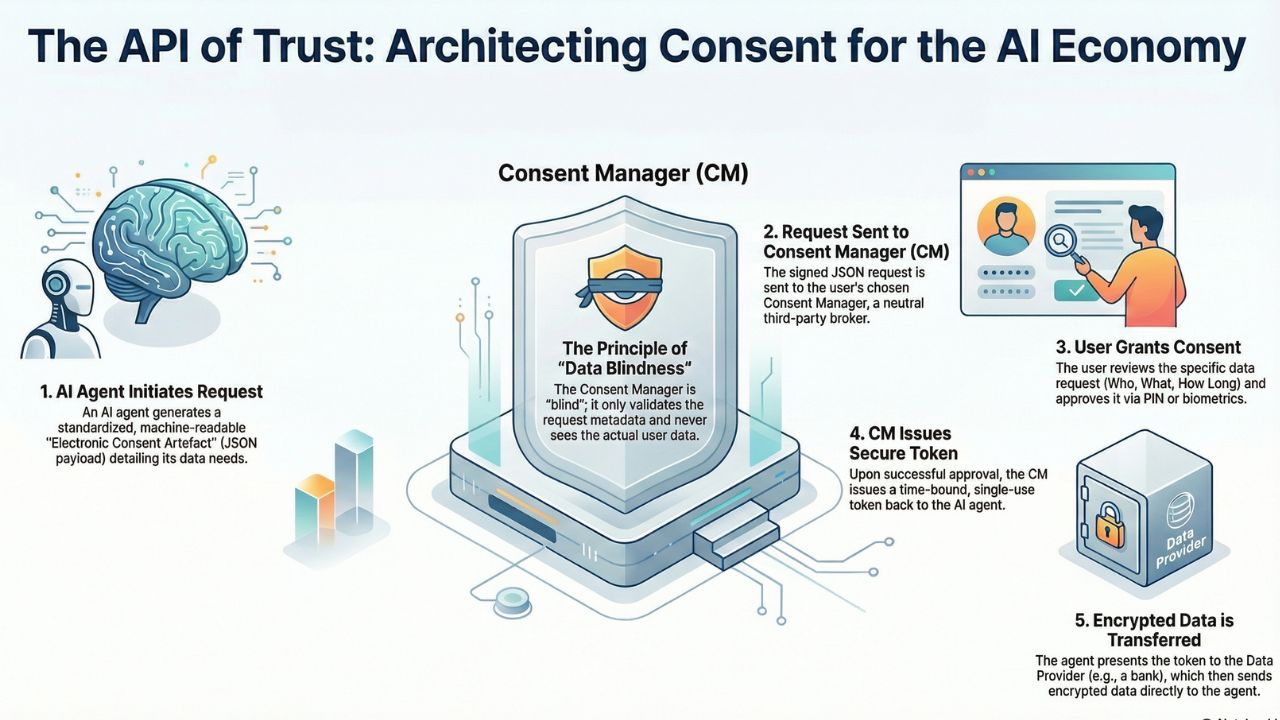

We need an API-driven, machine-readable consent layer. This guide breaks down how to implement the Data Empowerment and Protection Architecture (DEPA) to enable your AI agents to legally request and grant data access.

1. DEPA 101: The "Techno-Legal" Backbone

DEPA inverts the traditional model. Instead of data being "owned" by the app that collected it, the data belongs to the user (Data Principal). Access is brokered by a new class of entity: the Consent Manager (CM).

In an AI context, your Agent acts as the "Information User" (IU). To get the data it needs to process a request (e.g., a credit history or health record), it cannot just scrape a database. It must send a structured, signed consent request to a CM, which records the user's approval as an Electronic Consent Artefact.

2. The "Electronic Consent Artefact" (JSON Schema)

The core of this architecture is a standardized XML/JSON schema defined by MeitY and the Account Aggregator (AA) ecosystem. When your AI Agent requests data, it must generate a signed payload containing these parameters.
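As a rough illustration of how an agent might assemble such a request before signing it, here is a minimal Python sketch. The helper name `build_consent_request`, its arguments, and the fixed `VIEW` / `ONETIME` / `HOUR` values are assumptions made for this example. The field names simply mirror the mock payload used in this guide, not an authoritative schema.

```python
import json
import uuid
from datetime import datetime, timedelta, timezone
from typing import List, Optional


def iso_utc(moment: datetime) -> str:
    """Format a timezone-aware datetime as e.g. 2026-01-03T10:00:00Z."""
    return moment.astimezone(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")


def build_consent_request(
    consumer_id: str,
    consent_types: List[str],
    purpose_code: str,
    purpose_text: str,
    valid_for: timedelta,
    data_life_hours: int,
    now: Optional[datetime] = None,
) -> dict:
    """Assemble the unsigned body of a consent request (hypothetical helper)."""
    now = now or datetime.now(timezone.utc)
    return {
        "ver": "1.1.3",
        "timestamp": iso_utc(now),
        "txnid": str(uuid.uuid4()),  # fresh transaction id per request
        "ConsentDetail": {
            "consentStart": iso_utc(now),
            "consentExpiry": iso_utc(now + valid_for),
            "consentMode": "VIEW",    # assumed fixed for this sketch
            "fetchType": "ONETIME",   # assumed fixed for this sketch
            "consentTypes": list(consent_types),
            "purpose": {"code": purpose_code, "text": purpose_text},
            "DataLife": {"unit": "HOUR", "value": data_life_hours},
            "DataConsumer": {"id": consumer_id},
        },
    }


if __name__ == "__main__":
    request = build_consent_request(
        consumer_id="agent-swarm-alpha",
        consent_types=["PROFILE", "TRANSACTION_HISTORY"],
        purpose_code="101",
        purpose_text="To customize product recommendations based on past purchases.",
        valid_for=timedelta(days=1),
        data_life_hours=1,
    )
    print(json.dumps(request, indent=2))
```

Signing is deliberately left out: the body would be serialized and signed with the agent's key in a separate step, using whatever signature scheme the Consent Manager requires.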

Code Snippet: Mock Consent Request (ONDC Context)

Below is a simplified JSON payload that an AI Sales Agent might send to a User's Consent Manager to access "Purchase History" for personalization.

    {
      "ver": "1.1.3",
      "timestamp": "2026-01-03T10:00:00Z",
      "txnid": "a1b2c3d4-agent-req-001",
      "ConsentDetail": {
        "consentStart": "2026-01-03T10:00:00Z",
        "consentExpiry": "2026-01-04T10:00:00Z",
        "consentMode": "VIEW",
        "fetchType": "ONETIME",
        "consentTypes": [
          "PROFILE",
          "TRANSACTION_HISTORY"
        ],
        "purpose": {
          "code": "101",
          "refUri": "https://api.agilewow.com/purpose/personalization",
          "text": "To customize product recommendations based on past purchases."
        },
        "DataLife": {
          "unit": "HOUR",
          "value": 1
        },
        "DataConsumer": {
          "id": "agent-swarm-alpha"
        }
      },
      "signature": "base64url-signature-made-with-agent-private-key"
    }

Note: The `DataLife` parameter is critical for AI. It caps how long the agent may retain the fetched data, whether in its context window, in memory, or in any cache or log. Once that period elapses (one hour in this example), the agent must purge every copy.
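To make the retention rule concrete, the following Python sketch checks whether a consent is still active and drops fetched data once its `DataLife` has elapsed. It is an illustration under stated assumptions: `ExpiringStore`, `purge_deadline`, and the unit table are hypothetical names for this example, and only the `HOUR` unit comes from the mock payload.

```python
from datetime import datetime, timedelta, timezone

# Assumed mapping from DataLife units to durations. HOUR matches the mock
# payload; DAY is included purely for illustration.
_UNIT_TO_DELTA = {
    "HOUR": timedelta(hours=1),
    "DAY": timedelta(days=1),
}


def parse_utc(stamp: str) -> datetime:
    """Parse timestamps of the form 2026-01-03T10:00:00Z."""
    parsed = datetime.strptime(stamp, "%Y-%m-%dT%H:%M:%SZ")
    return parsed.replace(tzinfo=timezone.utc)


def consent_is_active(detail: dict, now: datetime) -> bool:
    """True while `now` falls inside [consentStart, consentExpiry)."""
    start = parse_utc(detail["consentStart"])
    expiry = parse_utc(detail["consentExpiry"])
    return start <= now < expiry


def purge_deadline(detail: dict, fetched_at: datetime) -> datetime:
    """Latest moment the fetched data may be kept, according to DataLife."""
    life = detail["DataLife"]
    return fetched_at + _UNIT_TO_DELTA[life["unit"]] * life["value"]


class ExpiringStore:
    """Holds fetched records and forgets them once their deadline passes."""

    def __init__(self) -> None:
        self._items = {}

    def put(self, key, value, deadline: datetime) -> None:
        self._items[key] = (value, deadline)

    def get(self, key, now: datetime):
        entry = self._items.get(key)
        if entry is None:
            return None
        value, deadline = entry
        if now >= deadline:
            # DataLife elapsed: delete the record instead of returning it.
            del self._items[key]
            return None
        return value
```

An agent would route every read of consented data through a store like this, so that expired records never reach the prompt. Copies already placed in a model context, log, or vector index need their own purge path, which this sketch does not cover.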

3. Data Blindness: The Security Model

A common misconception is that the Consent Manager "sees" the data. It does not. This is the principle of "Data Blindness."

- The Flow: The CM validates the user's PIN/Biometric. If successful, it issues a "Token."

- The Transfer: Your AI Agent takes this Token and presents it to the Information Provider (e.g., the Bank).

- The Encryption: The Bank encrypts the data using your Agent's Public Key. The encrypted packet flows through the CM (or directly) to the Agent. Only the Agent (with the Private Key) can decrypt it.
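The first two steps above (token issuance and presentation) can be sketched in Python as follows. This is a toy model, not the AA protocol: the HMAC-based token format, the class names, and the in-process `verify_token` call are assumptions chosen so the example runs on the standard library alone. The encryption step is omitted because it needs a public-key library. A real Information Provider would encrypt the payload to the agent's public key before returning it.

```python
import hashlib
import hmac
import json
import time
from base64 import urlsafe_b64decode, urlsafe_b64encode


class ConsentManager:
    """Issues and verifies consent tokens. It never holds user data."""

    def __init__(self, secret: bytes) -> None:
        self._secret = secret

    def _mac(self, body: bytes) -> bytes:
        return hmac.new(self._secret, body, hashlib.sha256).digest()

    def issue_token(self, consent_id, consumer_id, ttl_seconds, now=None) -> str:
        """Called after the user's PIN/biometric check has succeeded."""
        now = time.time() if now is None else now
        claims = {"consent": consent_id, "consumer": consumer_id,
                  "exp": now + ttl_seconds}
        body = json.dumps(claims, sort_keys=True).encode()
        return (urlsafe_b64encode(body).decode() + "."
                + urlsafe_b64encode(self._mac(body)).decode())

    def verify_token(self, token: str, now=None):
        """Return the claims if the token is authentic and unexpired, else None."""
        now = time.time() if now is None else now
        try:
            body_b64, mac_b64 = token.split(".")
            body = urlsafe_b64decode(body_b64)
            mac = urlsafe_b64decode(mac_b64)
        except ValueError:  # malformed token or bad base64
            return None
        if not hmac.compare_digest(mac, self._mac(body)):
            return None
        claims = json.loads(body)
        return claims if now < claims["exp"] else None


class InformationProvider:
    """Holds the data (e.g., the Bank) and releases it only against a valid token."""

    def __init__(self, cm: ConsentManager, records: dict) -> None:
        self._cm = cm
        self._records = records  # keyed by consent id, for this sketch only

    def fetch(self, token: str, consumer_id: str, now=None):
        claims = self._cm.verify_token(token, now)
        if claims is None or claims["consumer"] != consumer_id:
            raise PermissionError("invalid, expired, or mismatched consent token")
        # Real systems encrypt this to the agent's public key before sending.
        return self._records[claims["consent"]]
```

Note that `ConsentManager` only ever handles metadata (consent id, consumer id, expiry), while the records live solely inside `InformationProvider`. That separation is the structural point of Data Blindness.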

Frequently Asked Questions (FAQ)

What is DEPA?

Data Empowerment and Protection Architecture (DEPA) is a techno-legal framework that allows users to securely share their data across providers (like banks or hospitals) through a neutral third-party Consent Manager.

How do AI agents sign consent requests?

AI agents act as "delegates" for the human user. The agent uses a scoped API key or a cryptographic key pair (linked to the user's DigiLocker) to sign the JSON consent request, which is then validated by the Consent Manager.

What is "Data Blindness"?

The Consent Manager (CM) must never see the actual data being transferred. It only sees the metadata (Who, What, How Long). The data itself travels from the Information Provider (IP) to the Information User (IU) encrypted end to end, so even when it is routed through the CM it remains unreadable there.

Sources & References

- Sahamati: The Collective of the Account Aggregator Ecosystem.

- ReBIT (RBI): API Specifications for Account Aggregators.

- NITI Aayog: Data Empowerment and Protection Architecture (DEPA) Strategy Paper.

- ONDC: Open Network for Digital Commerce (Architecture for Agentic Commerce).