Why Bypassing Character AI Age Restrictions is Fatal (March 2026)

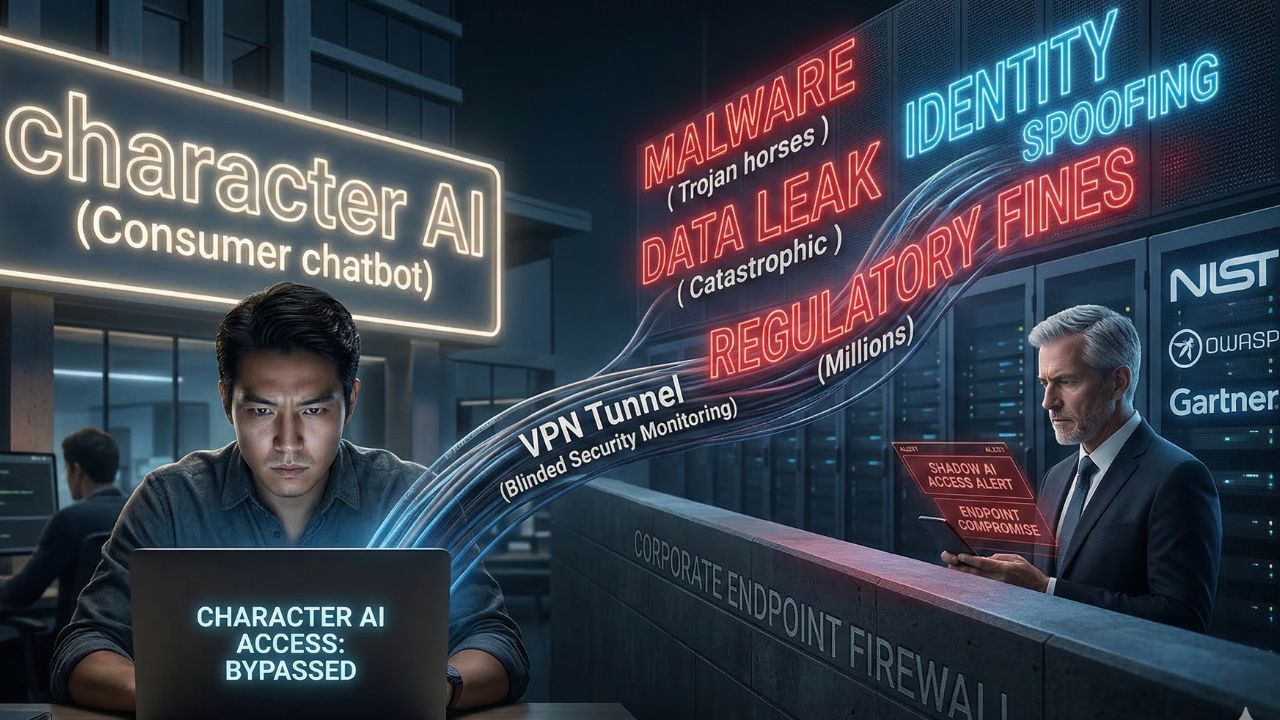

- Attempting a workaround exposes your network to severe malware and privacy risks.

- Unrestricted AI models bypass corporate firewalls, introducing severe data leak threats into agile workflows.

- Relying on consumer AI workarounds destroys your enterprise data sovereignty and compromises endpoint security.

- Employees bypassing AI guardrails introduce catastrophic network vulnerabilities into your system daily.

- Agile leaders must secure enterprise endpoints and lock down shadow IT risk today.

In the fast-paced world of Agile development and AI implementation, teams often seek shortcuts to accelerate their sprints. A surprisingly common and dangerous query echoing through enterprise networks today is how to bypass the Character AI age restriction.

While developers or product managers might view consumer-grade chatbots as harmless brainstorming tools, accessing them outside of approved corporate channels is a critical security failure. When your team circumvents these fundamental safety gates, they bypass essential enterprise security layers.

To properly secure your digital perimeter, organizations must implement a rigorous, standardized framework for Character AI age verification. This deep dive explores why ignoring these protocols is not merely a policy violation, but a fatal flaw that can compromise your entire network infrastructure.

The Hidden Dangers of Searching How to Bypass Character AI Age Restriction

When an employee attempts to skirt age gates on consumer platforms, they are actively engaging in Shadow AI. This term refers to the unsanctioned use of artificial intelligence tools outside of IT's visibility and control.
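One way to regain some of that visibility is to audit outbound proxy logs for traffic to unsanctioned AI services. The sketch below is illustrative only: the log format (`<timestamp> <user> <url>`), the `UNSANCTIONED_AI_DOMAINS` watchlist, and the function names are assumptions, not part of any specific product.

```python
from collections import Counter
from urllib.parse import urlsplit

# Hypothetical watchlist; a real one would be maintained by your security team.
UNSANCTIONED_AI_DOMAINS = {"character.ai", "chat.example-ai.test"}


def host_matches(host: str, domain: str) -> bool:
    """True if host is the domain itself or one of its subdomains."""
    return host == domain or host.endswith("." + domain)


def find_shadow_ai_hits(log_lines):
    """Count requests per (user, domain) for watchlisted domains.

    Assumes each log line looks like '<timestamp> <user> <url>';
    lines that do not have exactly three fields are skipped.
    """
    hits = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) != 3:
            continue
        _, user, url = parts
        host = (urlsplit(url).hostname or "").lower()
        for domain in UNSANCTIONED_AI_DOMAINS:
            if host_matches(host, domain):
                hits[(user, domain)] += 1
    return hits
```

A report built from these counts shows which teams are reaching for consumer tools, which is usually more useful for planning sanctioned alternatives than for punishing individuals.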

Searching for how to bypass the Character AI age restriction often leads employees to dubious third-party forums, malicious scripts, and compromised browser extensions. These unauthorized workarounds are rarely secure.

Threat actors actively target users looking for these exploits, bundling malware with supposed "bypass" tools. Exposing your network to severe malware and privacy risks is the immediate result of these reckless actions.

Once a single endpoint is compromised, lateral movement across your corporate network becomes a trivial task for attackers.

The Breakdown of Agile Guardrails

In Agile and Scrum environments, velocity should never come at the expense of security. When team members use unauthorized AI tools to write code snippets, generate product requirements, or draft sprint retrospectives, they feed sensitive corporate data directly into unfiltered consumer models.

Unrestricted AI models pose a data leak threat because many consumer services retain user inputs and may use them to train future iterations of their software. If your product manager uploads proprietary architecture diagrams into a bypassed consumer AI, that intellectual property is no longer under your control.
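A lightweight safeguard is to scan prompts for obviously sensitive strings before they leave the network. The following is a minimal sketch under stated assumptions: the three patterns, the `corp.example.com` internal domain, and the function names are hypothetical, and a real DLP rule set would be far broader.

```python
import re

# Illustrative patterns only; the internal domain is a made-up example.
SENSITIVE_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "internal_hostname": re.compile(r"\b[\w-]+\.corp\.example\.com\b"),
}


def scan_prompt(text: str) -> list[str]:
    """Return the sorted names of every pattern found in the prompt."""
    return sorted(
        name for name, pattern in SENSITIVE_PATTERNS.items() if pattern.search(text)
    )


def redact_prompt(text: str) -> str:
    """Replace each match with a labeled placeholder."""
    for name, pattern in SENSITIVE_PATTERNS.items():
        text = pattern.sub(f"[REDACTED:{name}]", text)
    return text
```

Pattern matching of this kind catches only known formats. It would not recognize an architecture diagram or a paraphrased requirement, so it complements, rather than replaces, keeping sensitive work on approved tools.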

Corporate Firewall Bypass and VPN Vulnerabilities

To access restricted consumer AI tools, employees often employ virtual private networks (VPNs) or proxy servers. However, VPNs are not inherently safe to use for bypassing AI filters, especially when installed as unvetted third-party applications.

These unauthorized networking tools punch holes directly through your meticulously configured corporate firewalls. Because traffic inside the tunnel is encrypted, network-based data loss prevention (DLP) controls cannot inspect it, so employees bypassing AI guardrails introduce serious network vulnerabilities into your system every day.

The Illusion of Anonymity

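Many users mistakenly believe that using a VPN or creating synthetic identities protects their data. A VPN masks the originating IP address, but everything typed into a prompt still reaches the AI provider in full. Worse, identity spoofing against AI systems is a dangerous new frontier of corporate fraud.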

Security leaders must audit their systems and transition toward secure, dedicated open-source models instead of relying on compromised consumer platforms.

Managing Rogue AI Agents in the Enterprise

As organizations transition toward Agentic AI—autonomous systems that execute complex, multi-step tasks—the threat landscape evolves. Rogue AI agents, deployed without proper oversight or age-gated restrictions, can inadvertently execute malicious code or leak credentials.

When an AI agent operates outside of established enterprise safety guardrails, it lacks the necessary context to handle sensitive information securely. This is why attempting to bypass access controls is fundamentally incompatible with enterprise risk management.

Zero Trust Architecture for AI

To combat this, Agile leaders and Product Managers must adopt a Zero Trust approach to AI access. Every prompt, API call, and model interaction must be verified.
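As a rough illustration of what verifying every interaction can mean in practice, the sketch below applies a deny-by-default check to each model request. Everything named here is an assumption for the example: the `ModelRequest` fields, the approved host `llm.internal.example.com`, and the role names. The `token_valid` flag stands in for whatever your identity provider returns.

```python
from dataclasses import dataclass
from urllib.parse import urlsplit

# Hypothetical policy values for illustration.
APPROVED_MODEL_HOSTS = {"llm.internal.example.com"}
ALLOWED_ROLES = {"developer", "product_manager"}


@dataclass(frozen=True)
class ModelRequest:
    user_id: str
    role: str
    token_valid: bool  # outcome of an identity check performed elsewhere
    endpoint: str
    prompt: str


def authorize(request: ModelRequest) -> tuple[bool, str]:
    """Return (allowed, reason). Anything not explicitly permitted is denied."""
    if not request.token_valid:
        return False, "unauthenticated"
    if request.role not in ALLOWED_ROLES:
        return False, "role not permitted"
    parts = urlsplit(request.endpoint)
    if parts.scheme != "https":
        return False, "endpoint must use https"
    if (parts.hostname or "").lower() not in APPROVED_MODEL_HOSTS:
        return False, "endpoint not approved"
    return True, "ok"
```

The check runs per request rather than per session, which is the core of the Zero Trust idea: a user who was trusted a minute ago is still evaluated again on the next call.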

You must lock down your shadow IT risk today by integrating robust identity verification APIs directly into your development pipelines. If your developers require powerful, unfiltered models for complex tasks, the solution is not bypassing public cloud gates.

Instead, organizations should empower their teams to run secure, private local LLMs.

The True Cost of a Shadow AI Data Breach

The financial and reputational damage of an AI-related data breach cannot be overstated. When corporate data is leaked through a consumer chatbot, the fallout extends far beyond a simple IT ticket.

Regulatory bodies are increasingly scrutinizing how organizations manage generative AI tools. Failing to enforce AI safety policies can lead to massive compliance violations, especially concerning frameworks like GDPR and COPPA.

If an audit reveals that your network was compromised because an employee successfully utilized a bypass script, the negligence penalties can be staggering.

Rebuilding Product Strategy

Rather than playing whack-a-mole with unauthorized bypass attempts, AI Leadership must address the root cause. If employees are desperately seeking consumer AI, your internal product suite is likely lacking.

Invest in enterprise-grade, localized AI solutions that offer the required velocity without sacrificing data sovereignty.

Conclusion

Securing your agile development environment requires a zero-tolerance policy for shadow IT workarounds. Attempting to figure out how to bypass the Character AI age restriction is not an act of innovation; it is a critical security vulnerability that threatens your entire organizational infrastructure. Relying on consumer AI workarounds destroys your enterprise data sovereignty.

It is imperative that AI leaders enforce strict compliance, audit their endpoints, and lock down their shadow IT risk today.

Ready to secure your AI workflows? Explore our guide to enterprise-grade AI alternatives and protect your proprietary data from the risks of unfiltered LLMs.

Frequently Asked Questions (FAQ)

When employees bypass these restrictions, they immediately expose the corporate network to severe compliance and security threats. They often use malicious third-party tools that inject malware into endpoints, leading to unauthorized data exfiltration and complete breaches of enterprise data privacy protocols.

No, using unvetted VPNs introduces significant vulnerabilities. These tools actively circumvent corporate firewalls, blinding internal security monitoring. This creates an unmonitored tunnel where sensitive intellectual property can be leaked and external malware can be silently introduced into the network environment.

Bypassing guardrails typically requires downloading unverified scripts, fake identity generators, or compromised browser extensions. Threat actors specifically design these "bypass tools" as Trojan horses, delivering ransomware, keyloggers, and backdoor access directly onto secure corporate developer machines.

The true cost includes millions in regulatory fines, total loss of proprietary intellectual property, and severe reputational damage. Additionally, organizations face immense operational downtime while conducting forensic audits to determine exactly what corporate data was ingested by the public AI model.

Yes, bypassing these controls often violates global compliance laws like COPPA and GDPR. Organizations can face massive financial penalties from regulatory bodies if employee negligence results in consumer data being exposed to unfiltered, publicly accessible AI data lakes.
