RAG vs Fine-Tuning AI Models: The Agile Leader's Guide to Secure Customization

Key Takeaways

- Fine-tuning an AI model when you should be using RAG burns through your compute budget and permanently exposes proprietary data.

- Understanding the technical differences between RAG vs fine-tuning an AI model is a mandatory, non-negotiable skill for modern Product Owners.

- Retrieval-Augmented Generation (RAG) is generally safer, cheaper, and faster for Agile teams to accurately estimate and implement during standard sprint cycles.

- The wrong architectural choice permanently leaks private enterprise data into the neural network's weights, creating massive compliance liabilities.

- Stop the bleeding and read this definitive technical comparison to ensure your next enterprise AI deployment actually scales.

Many Agile teams are making a catastrophic architectural mistake right out of the gate.

If your upcoming sprint involves modifying a foundational AI to "learn" your company's proprietary data, choosing the wrong developmental path can paralyze your entire product roadmap. Currently, your team is likely struggling with the RAG vs fine-tuning an AI model debate, and selecting the wrong option will leak your private enterprise data to unauthorized users.

Before diving into the complex technical specifics of model customization, Agile leaders must ground themselves in The AI Fundamentals for Scrum Masters and Product Owners. Without that comprehensive baseline, you are simply guessing at infrastructure requirements and pointing user stories blindly.

This deep dive will dissect the exact reasons why your current customization strategy might be failing, allowing you to salvage your compute budget, protect your users, and deliver measurable business value.

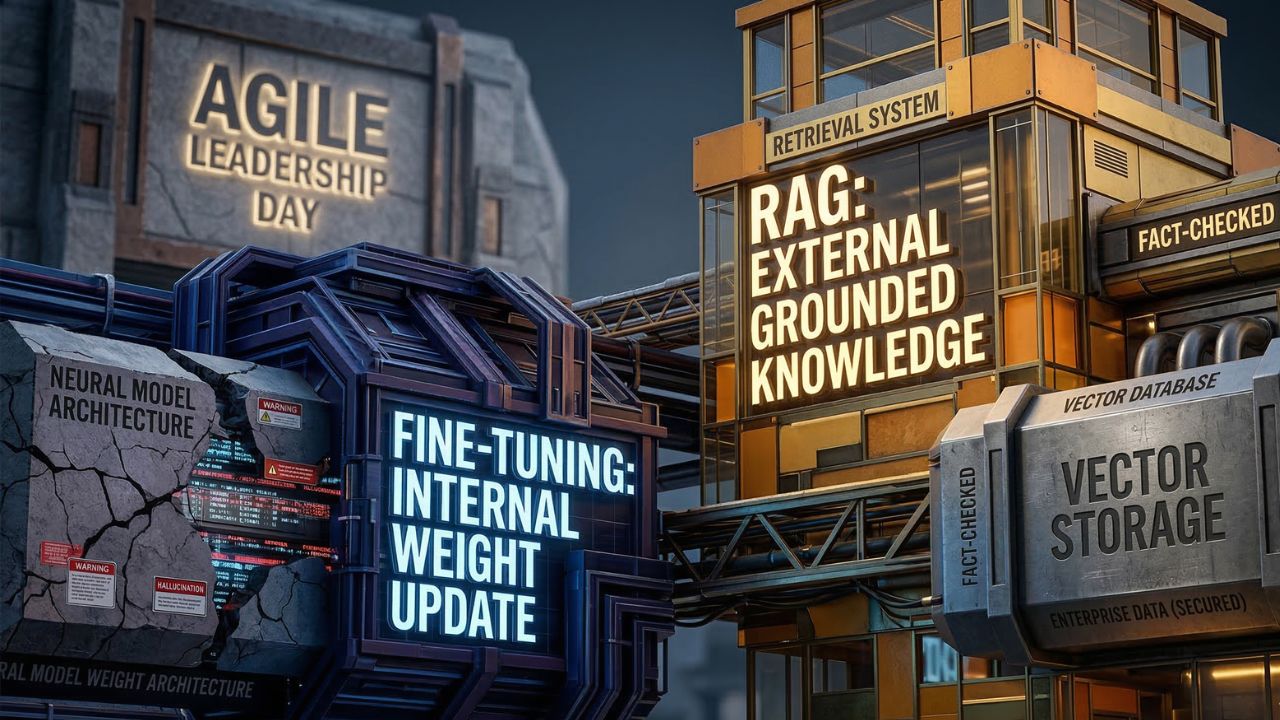

The Core Flaw in Evaluating RAG vs Fine-Tuning

The biggest misconception in modern AI product management is treating all machine learning customization as the exact same technical process. They are fundamentally different approaches to solving enterprise problems.

When stakeholders ask for an AI that "knows our company data," Product Owners often immediately write user stories for fine-tuning. This is a massive, expensive error.

The Costly Trap of Enterprise Fine-Tuning

Product Owners frequently fail to ask: How much does it cost to fine-tune an LLM?

The answer is that it is astronomical compared to standard API usage. Fine-tuning requires spinning up expensive GPU clusters, compiling massive, perfectly formatted datasets, and enduring extended sprint cycles that rarely fit into a standard two-week Agile window.

Furthermore, the privacy implications are severe. What are the data privacy risks of fine-tuning AI?

Once your proprietary data (like customer records or financial projections) is baked into the model's neural weights, it cannot be easily extracted, isolated, or deleted. If a malicious user skillfully prompts your fine-tuned model, it can inadvertently regurgitate sensitive corporate secrets. Managing this risk requires an entire suite of security protocols that most Agile teams fail to estimate.

The Agile Power of Retrieval-Augmented Generation (RAG)

In stark contrast, RAG keeps your enterprise data strictly separated from the foundational AI model. How do Scrum teams implement Retrieval-Augmented Generation?

By focusing their sprints on building robust external vector databases. When a user asks a question, the system searches your secure database for relevant, real-time information, retrieves it, and hands it directly to the AI to formulate a contextual answer.

This leads to a critical benefit: Does RAG effectively prevent AI hallucinations? Yes.

By strictly grounding the model's responses in factual, retrieved documents rather than relying on its hazy internal memory, RAG drastically reduces confident, fabricated answers. To build this architecture effectively, your engineering team must clearly define their components of a GenAI system.

If your orchestration layers, data pipelines, and vector storage are not properly mapped out in your product backlog, your RAG implementation will fail just as quickly as a botched fine-tuning job.

How to Sprint Plan for AI Customization

When planning your Agile sprints, the choice between RAG and fine-tuning dictates the entire structure of your user stories, your Definition of Done (DoD), and your team's capacity planning. You cannot story-point a fine-tuning epoch the same way you point a simple RAG database query optimization.

Pointing and Estimating RAG User Stories

RAG is inherently more Agile-friendly. The work can be easily sliced into vertical, deliverable increments that provide immediate value.

- Sprint 1: Data Infrastructure: Set up the vector database and establish secure data ingestion pipelines.

- Sprint 2: Embeddings: Implement the embedding models to chunk, format, and store enterprise documents accurately.

- Sprint 3: Orchestration: Build the middleware orchestration layer to dynamically connect the database to the LLM via API.

- Sprint 4: Prompt Engineering: Systematically optimize the prompt layer to reduce hallucinations and maximize context windows.

Because RAG relies on external databases, updating the AI's knowledge is as simple as updating the database. You do not need to retrain the model when your company policies or product prices change.

The Heavy Burden of Fine-Tuning Sprints

Fine-tuning, conversely, acts more like a monolithic waterfall project hidden inside an Agile framework.

Your sprints will be entirely consumed by grueling data engineering tasks. You must meticulously curate tens of thousands of perfect prompt-and-response pairs to teach the model how to behave. If the data is flawed, the model is ruined, requiring a complete, highly expensive retraining cycle. This makes traditional sprint velocity tracking nearly impossible for Scrum Masters.

Can You Blend Both Strategies?

As your AI product matures, you will inevitably ask: Can you use both RAG and fine-tuning together?

Absolutely, and this represents the pinnacle of enterprise AI architecture. In a hybrid system, you use fine-tuning strictly to alter the AI's tone, personality, and output format so it speaks perfectly in your brand's unique voice. You then use RAG to supply that highly customized model with the actual, real-time factual data it needs to answer questions accurately.

This sophisticated, dual approach ties directly into deeply understanding your overarching AI machine learning approaches. By mastering both concepts, Product Owners can allocate their sprint capacity efficiently, knowing exactly when to train the model and when to simply feed it better context.

Conclusion: Securing Your Enterprise Architecture

Agile leadership in the age of AI requires ruthless prioritization and deep technical awareness. If you continue to ignore the massive cost and security nuances of the RAG vs fine-tuning an AI model debate, you will rapidly deplete your funding and compromise your user's trust.

Remember this foundational rule: Fine-tuning is for teaching an AI how to act, while RAG is for teaching an AI what to know.

Stop treating foundational models like static, deterministic software applications. By aligning your architectural choices with the realities of Agile sprint planning, you can build scalable, secure, and highly intelligent AI agents that actually drive bottom-line business value.

Frequently Asked Questions (FAQ)

RAG retrieves external, up-to-date information to answer queries, acting like a researcher reading a new document. Fine-tuning fundamentally alters the AI's internal weights by training it on new data, effectively changing its permanent core behavior and tone.

Choose RAG when your application requires access to dynamic, up-to-date enterprise data, or when factual accuracy is paramount. It is safer, cheaper, and faster to implement in sprints, effectively minimizing the risk of data leakage and AI hallucinations.

Fine-tuning costs can be exorbitant, requiring expensive GPU clusters, massive datasets, and extended sprint cycles. The costs are not just in computational power, but also in the specialized data engineering hours required to curate, format, and validate the training data.

While no system is perfect, RAG drastically reduces hallucinations. By forcing the AI model to synthesize answers strictly from retrieved, verified documents rather than relying on its internal, pre-trained memory, RAG grounds the output in factual, controllable enterprise data.

Fine-tuning permanently bakes your proprietary data into the model’s weights. If an unauthorized user skillfully prompts the model, it can inadvertently regurgitate sensitive corporate secrets. Furthermore, removing specific data points later to comply with privacy laws is incredibly difficult.