Your Agentic AI in Finance Will Fail

- Deterministic vs. Probabilistic Planning: Traditional Sprint Planning fails because it treats AI agent development as linear software rather than iterative experimental research.

- The Data-First Dependency: Gartner predicts that over 40% of agentic AI projects will be canceled by the end of 2027. Legacy data silos add to that risk, so sprints must prioritize the "data spine" before agent logic.

- Validation Over Velocity: Success is measured by the agent’s autonomous reasoning accuracy and guardrail compliance, not just story points completed.

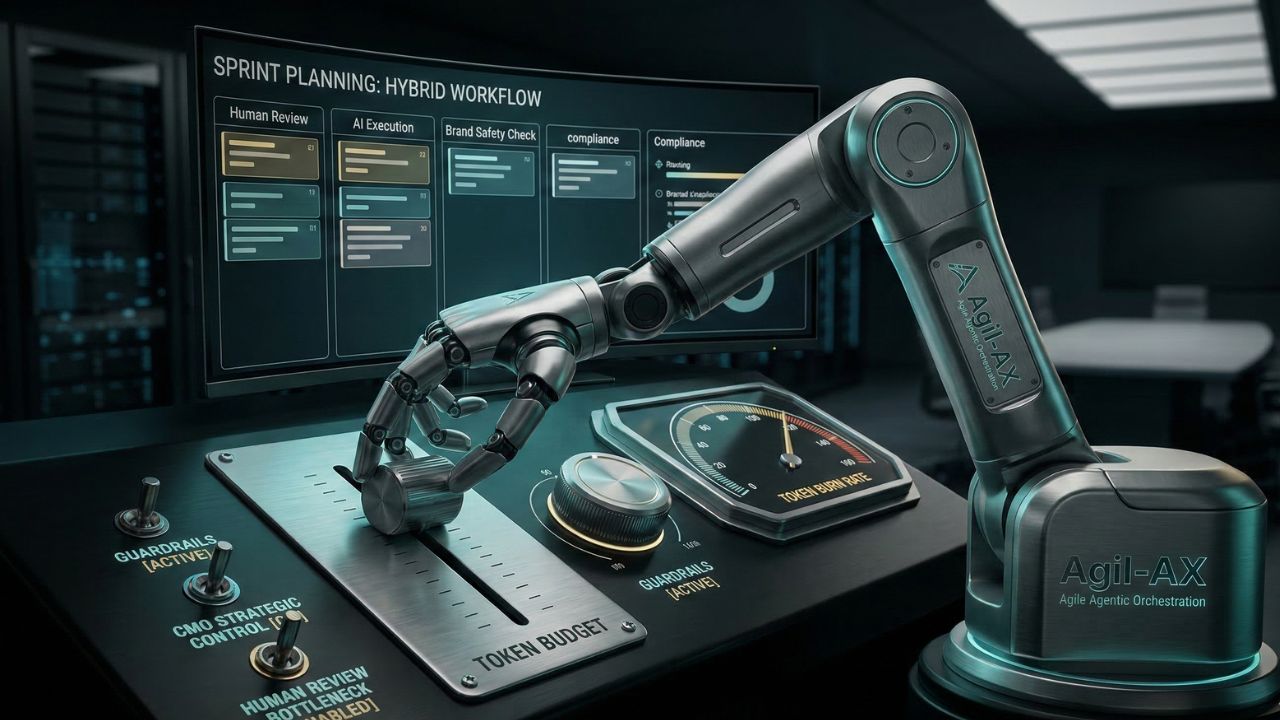

- Human-in-the-Loop (HITL): Effective planning must define explicit hand-off points where autonomous agents require human oversight.

The financial sector is rushing to deploy autonomous agents, but most firms are simply building glorified chatbots instead of exploring true agentic AI use cases. If you apply standard Scrum rituals to agentic AI in finance without modification, your project is likely to stall.

Modern enterprise delivery requires a shift from managing tasks to managing autonomous behaviors and multi-agent system communication. The stakes have never been higher for banking infrastructure.

The Core Challenge: Why Standard Sprints Kill Agentic Innovation

Traditional Sprint Planning assumes the team is building a known feature with predictable outcomes. In the realm of agentic AI, you are building an entity that makes complex decisions dynamically.

In finance, a single errant API call can trigger a regulatory nightmare, so planning mistakes cost far more than they do elsewhere. Most firms fail because they treat an AI agent like a standard microservice.

Unlike a microservice, an agentic workflow requires a "data spine" that breaks down silos before the first line of agent logic is written. Without it, your agent is effectively "flying blind" in a complex financial ecosystem.

Defining the Agentic Definition of Ready (DoR)

In a typical finance sprint, a story is ready when its requirements are clear. For agentic AI in finance, the Definition of Ready (DoR) must also cover data, constraints, and evaluation:

- Data Lineage Access: Does the agent have real-time access to the specific financial datasets it requires (e.g., Bloomberg market data, internal ledgers)?

- Constraint Parameters: Are the regulatory guardrails and "kill switches" defined for the specific autonomous task?

- Evaluation Dataset: Do we have a "golden set" of expected outcomes to test the agent’s reasoning against?
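The three criteria above can be turned into a gate that a story must pass before it enters a sprint. The following is a minimal sketch, not a prescribed implementation: the `AgentStory` fields, the `missing_dor_items` helper, and the minimum golden-set size are all hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class AgentStory:
    """A backlog item for an agent capability (hypothetical structure)."""
    title: str
    data_sources_accessible: bool  # data lineage access confirmed
    guardrails_defined: bool       # regulatory constraints documented
    kill_switch_defined: bool      # a way to halt the agent exists
    golden_set_size: int           # number of labeled evaluation cases


def missing_dor_items(story: AgentStory, min_golden_cases: int = 1) -> list[str]:
    """Return the unmet Definition of Ready criteria; an empty list means ready."""
    missing = []
    if not story.data_sources_accessible:
        missing.append("data lineage access")
    if not (story.guardrails_defined and story.kill_switch_defined):
        missing.append("constraint parameters")
    if story.golden_set_size < min_golden_cases:
        missing.append("evaluation dataset")
    return missing


story = AgentStory(
    title="Flag anomalous ledger entries",
    data_sources_accessible=True,
    guardrails_defined=True,
    kill_switch_defined=False,
    golden_set_size=0,
)
print(missing_dor_items(story))
```

A story that returns a non-empty list stays in refinement until each listed item is resolved.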

Architecting the Sprint: Multi-Agent Coordination

When planning for agentic systems, you aren't just planning for one "bot." You are often orchestrating multi-agent AI systems where specialized agents must communicate effectively.

For example, one agent designed for risk assessment and another for trade execution must pass data back and forth seamlessly without manual triggers.
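As a rough sketch of that hand-off, the two agents can exchange typed messages so that the execution agent only ever acts on a risk verdict. Everything here is an assumption for illustration: the class names, the notional limit, and the rule-based checks stand in for whatever models and policies a real system would use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TradeProposal:
    symbol: str
    quantity: int
    notional: float  # trade value in account currency


@dataclass(frozen=True)
class RiskVerdict:
    proposal: TradeProposal
    approved: bool
    reason: str


class RiskAgent:
    """Stand-in for a risk-assessment agent; a real one would call a model."""

    def __init__(self, max_notional: float) -> None:
        self.max_notional = max_notional

    def assess(self, proposal: TradeProposal) -> RiskVerdict:
        if proposal.notional > self.max_notional:
            return RiskVerdict(proposal, False, "notional exceeds limit")
        return RiskVerdict(proposal, True, "within limit")


class ExecutionAgent:
    """Stand-in for a trade-execution agent; it only records what it would do."""

    def __init__(self) -> None:
        self.executed: list[TradeProposal] = []

    def handle(self, verdict: RiskVerdict) -> str:
        if not verdict.approved:
            return f"rejected {verdict.proposal.symbol}: {verdict.reason}"
        self.executed.append(verdict.proposal)
        return f"executed {verdict.proposal.symbol} x{verdict.proposal.quantity}"


risk = RiskAgent(max_notional=100_000.0)
execution = ExecutionAgent()

small = TradeProposal("ACME", 100, 25_000.0)
large = TradeProposal("ACME", 1_000, 250_000.0)

# Each proposal flows from the risk agent to the execution agent with no manual trigger.
results = [execution.handle(risk.assess(p)) for p in (small, large)]
print(results)
```

Making the verdict an explicit message type gives the sprint a concrete interface to plan, test, and log.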

The Integration of SLMs and LLMs

During your planning session, the team must decide if a task requires a Large Language Model (LLM) or a specialized Small Language Model (SLM).

For sensitive financial data, running SLMs inside a "Sovereign AI Ecosystem" helps maintain data sovereignty and compliance because the data never has to leave for external global compute.
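One way to make this decision explicit during planning is a small routing rule. The sketch below assumes a simple policy that is not taken from any standard: anything other than public data stays on a locally hosted SLM, and only public tasks that need broad reasoning go to a hosted LLM. The tier names `on_prem_slm` and `hosted_llm` are hypothetical labels.

```python
from enum import Enum


class Sensitivity(Enum):
    PUBLIC = 1
    INTERNAL = 2
    REGULATED = 3  # e.g. customer or ledger data


def choose_model(sensitivity: Sensitivity, needs_broad_reasoning: bool) -> str:
    """Return a hypothetical model tier for a task under the assumed policy."""
    # Assumed rule: non-public data never leaves infrastructure the firm controls.
    if sensitivity is not Sensitivity.PUBLIC:
        return "on_prem_slm"
    # Public data may use a hosted LLM when the task needs broad reasoning.
    if needs_broad_reasoning:
        return "hosted_llm"
    return "on_prem_slm"


print(choose_model(Sensitivity.REGULATED, needs_broad_reasoning=True))
print(choose_model(Sensitivity.PUBLIC, needs_broad_reasoning=True))
```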

Measuring Success: Beyond Velocity and Burndown

In a world of autonomous agents, "velocity" is a vanity metric. If an agent completes 20 stories but hallucinates a risk assessment, the velocity is entirely irrelevant.

You must adopt metrics that matter in Agentic Sprints:

- Reasoning Accuracy: How often does the agent arrive at the correct financial conclusion compared to the "golden set"?

- Autonomous Execution Rate: The percentage of tasks the agent completes without triggering a "Human-in-the-Loop" intervention.

- Latency vs. Accuracy Trade-off: In high-frequency finance environments, the time an agent takes to reason is a critical sprint constraint.
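These metrics can be computed from a log of evaluation runs. The sketch below is illustrative only: the `AgentRun` record and `sprint_metrics` function are hypothetical, correctness is assumed to be an exact match against the golden-set answer, and mean latency is used as a simple stand-in for whatever latency statistic the team actually tracks.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class AgentRun:
    expected: str      # golden-set answer
    actual: str        # the agent's answer
    escalated: bool    # True if a human had to intervene
    latency_ms: float  # time the agent took to respond


def sprint_metrics(runs: list[AgentRun]) -> dict[str, float]:
    """Summarize accuracy, autonomy, and latency over a set of runs."""
    if not runs:
        raise ValueError("at least one run is required")
    total = len(runs)
    return {
        "reasoning_accuracy": sum(r.actual == r.expected for r in runs) / total,
        "autonomous_execution_rate": sum(not r.escalated for r in runs) / total,
        "mean_latency_ms": mean(r.latency_ms for r in runs),
    }


runs = [
    AgentRun("approve", "approve", False, 100.0),
    AgentRun("reject", "reject", False, 200.0),
    AgentRun("reject", "approve", False, 300.0),  # wrong answer, not escalated
    AgentRun("approve", "approve", True, 400.0),  # correct, but needed a human
]
print(sprint_metrics(runs))
```

Reporting the three numbers together keeps a high autonomous execution rate from hiding a drop in accuracy.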

Managing the Agentic Reality Check

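Gartner predicts that over 40% of agentic AI projects will be canceled by the end of 2027, citing escalating costs, unclear business value, and inadequate risk controls. Legacy systems that cannot support the execution demands of modern AI are a major contributor.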

During Sprint Planning, the Scrum Master and Product Owner must act as "Realism Officers." If the sprint goal includes "Autonomous Portfolio Rebalancing," but the backend systems still require manual batch processing, the agent is doomed.

You must plan for "cross-functional orchestration," ensuring the agents can manage processes across billing, support, and account management without manual handoffs.

Security and Compliance Guardrails in the Sprint

Every sprint involving agentic AI in finance must have a dedicated security sub-task. You are building systems that can potentially execute API calls without human approval.

Your planning checklist must include:

- Prompt Injection Testing: How will we stress-test the agent's resistance to malicious inputs?

- Permission Scoping: Does the agent have "least privilege" access to financial APIs?

- Audit Trail Implementation: Every decision made by the agent must be logged in a human-readable format for regulatory compliance.
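Permission scoping and audit logging can be combined in a thin wrapper around the agent's API access. This is a minimal sketch under stated assumptions: `ScopedApiClient`, the action names, and the in-memory log are hypothetical, and a production system would write to an append-only store and perform the real API call after the check.

```python
import json
from datetime import datetime, timezone


class ScopedApiClient:
    """Hypothetical wrapper that enforces an allow-list and logs each decision."""

    def __init__(self, agent_id: str, allowed_actions: set[str]) -> None:
        self.agent_id = agent_id
        self.allowed_actions = frozenset(allowed_actions)
        self.audit_log: list[str] = []  # stand-in for an append-only store

    def request(self, action: str, params: dict, rationale: str) -> bool:
        """Record the request and return whether the action is permitted."""
        allowed = action in self.allowed_actions
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "agent_id": self.agent_id,
            "action": action,
            "params": params,
            "rationale": rationale,  # the agent's stated reason, kept readable
            "allowed": allowed,
        }
        # Denied requests are logged too, so reviewers can see what was attempted.
        self.audit_log.append(json.dumps(entry, sort_keys=True))
        return allowed


client = ScopedApiClient("reporting-agent", {"read_balance"})
print(client.request("read_balance", {"account": "A-1"}, "monthly report"))
print(client.request("execute_trade", {"symbol": "ACME"}, "rebalance"))
print(len(client.audit_log))
```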

Conclusion: Orchestrating the Future of Finance

Sprint Planning for AI agents is not about managing a backlog; it’s about managing an evolving intelligence. By focusing on data spines, multi-agent communication, and strict regulatory guardrails, you can avoid the "agentic reality check" that will sideline your competitors.

The transition to agentic operations requires moving from executing tasks to orchestrating and monitoring AI systems.

Start small, prioritize security, and ensure your human-in-the-loop safeguards are unbreakable before scaling these systems across the enterprise.

Frequently Asked Questions (FAQ)

The most profitable agentic AI use cases involve autonomous risk management and real-time supply chain orchestration. By 2026, agents that can dynamically resolve disruptions and optimize cash flow across departments are expected to provide the highest ROI.

Traditional GenAI is reactive, producing content based on prompts. Agentic AI is proactive and autonomous; it uses reasoning frameworks to break down goals into tasks and executes them via API calls without constant human input.

The primary risks of agentic AI include data leaks, prompt injection, and unauthorized API execution. Because agents act independently, weak guardrails can lead to severe regulatory non-compliance and financial loss in enterprise environments.

Human-in-the-loop safeguards are built by defining confidence thresholds. If an agent’s certainty falls below a specific percentage or a transaction exceeds a set limit, the system must require human approval before proceeding.
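The threshold rule described above fits in a few lines. This is a sketch only: the function name and the default values (90% confidence, a 10,000 limit) are illustrative assumptions, and real thresholds would come from the firm's risk policy.

```python
def requires_human_approval(
    confidence: float,
    amount: float,
    *,
    min_confidence: float = 0.90,  # illustrative default, not a recommendation
    max_amount: float = 10_000.0,  # illustrative default, not a recommendation
) -> bool:
    """Return True when the agent must hand off to a human before acting."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    # Either condition alone is enough to trigger the hand-off.
    return confidence < min_confidence or amount > max_amount


print(requires_human_approval(0.95, 500.0))
print(requires_human_approval(0.80, 500.0))
print(requires_human_approval(0.99, 50_000.0))
```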

Sources and References

- Gartner Research: The Agentic Reality Check 2027

- Enterprise AI Architecture Journal: Building the Data Spine for Autonomous Agents

- Modern Scrum Alliance: Iterative Planning for Non-Deterministic Systems