How to Do Sprint Planning for AI Agents: The Agile Framework

Key Takeaways:

- Redefine Story Points: Shift from human effort-based estimation to specification-complexity scoring for AI tasks.

- Isolate Agent Capacity: Treat AI coding agents as parallel workstreams with dedicated velocity metrics, rather than replacing human capacity outright.

- Rigorous Specifications: You can't just throw a vague Jira ticket at an autonomous coding agent and expect enterprise-grade software.

- Mandatory QA Loops: Bake human-led code review and automated testing into the Definition of Done (DoD) for every AI-assigned ticket.

- Avoid Tool Shock: Integrate agents gradually, as forcing new AI IDEs onto your engineering team without a transition plan guarantees a drop in velocity.

If your engineering team is moving to agentic development, you have likely heard the claim that the right agentic IDE for agile software development can cut sprint time by 40%.

However, achieving that massive leap in productivity requires fundamentally changing how you run your Scrum ceremonies. Figuring out how to do sprint planning for AI agents is the biggest hurdle modern engineering leaders face.

Traditional agile frameworks rely on human effort, emotional intelligence, and implicit codebase context. When you introduce autonomous actors into the mix, standard capacity planning breaks down.

To use these tools effectively, and to get the gains described in "Agentic IDEs: Cut Agile Dev Cycles by 40%", you must adapt your backlog, your user stories, and your definitions of success. This deep dive shows how to calibrate your next sprint for a hybrid human-AI engineering team.

Rethinking the Backlog: How to Do Sprint Planning for AI Agents

When preparing for a sprint, the Product Owner and Scrum Master typically look at historical human velocity. AI agents completely disrupt this baseline.

An AI agent doesn't suffer from fatigue, but it does suffer from context window limitations and prompt ambiguity. To plan effectively, you must segment your backlog into AI-Ready and Human-Required tasks before the sprint planning meeting even begins.

Identifying AI-Ready User Stories

Not all tasks are created equal. Agents excel at specific types of work, while failing spectacularly at others. During backlog refinement, tag issues based on their suitability for autonomous completion.

- High AI Suitability: Boilerplate generation, unit test coverage, standardized API integrations, and translating clear business logic into functions.

- Moderate AI Suitability: Refactoring well-documented legacy code, UI component creation from strict design tokens.

- Low AI Suitability: Highly abstract architectural decisions, resolving undocumented monolithic technical debt, and nuanced UX problem-solving.
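
As a minimal sketch of the tagging step, the tiers above can be expressed as a lookup table applied during backlog refinement. The work-type labels, the `BacklogItem` shape, and the choice to treat Moderate items as AI-Ready are assumptions for illustration, not part of any tracker's API.

```python
from dataclasses import dataclass
from enum import Enum


class Suitability(Enum):
    HIGH = "high"
    MODERATE = "moderate"
    LOW = "low"


# Hypothetical work-type labels mapped to the three tiers listed above.
SUITABILITY_BY_WORK_TYPE = {
    "boilerplate": Suitability.HIGH,
    "unit_tests": Suitability.HIGH,
    "api_integration": Suitability.HIGH,
    "business_logic": Suitability.HIGH,
    "documented_refactor": Suitability.MODERATE,
    "ui_from_design_tokens": Suitability.MODERATE,
    "architecture": Suitability.LOW,
    "undocumented_tech_debt": Suitability.LOW,
    "ux_problem_solving": Suitability.LOW,
}


@dataclass
class BacklogItem:
    key: str
    work_type: str


def tag_item(item):
    # Unknown work types default to LOW so they stay with humans.
    return SUITABILITY_BY_WORK_TYPE.get(item.work_type, Suitability.LOW)


def segment_backlog(items):
    """Split items into (ai_ready, human_required) lists."""
    ai_ready, human_required = [], []
    for item in items:
        if tag_item(item) is Suitability.LOW:
            human_required.append(item)
        else:
            ai_ready.append(item)
    return ai_ready, human_required
```

Defaulting unrecognized work to the human list is a deliberately conservative choice: a ticket nobody has classified is probably not specified well enough for an agent.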

The New Role of the Product Owner

The role of a product owner in agentic coding is shifting from managing stakeholder expectations to becoming a highly technical systems specifier. Vague acceptance criteria are the enemy of AI velocity.

Product Owners must now ensure that tickets assigned to agents contain precise, testable acceptance criteria: exact data models, defined inputs and outputs, and explicit edge cases.

If you are currently migrating agile development teams to agentic IDEs, training your Product Owners on prompt-engineering principles is the critical first step.

Redefining Story Points and Velocity

The Fibonacci sequence (1, 2, 3, 5, 8...) in Agile represents effort, complexity, and uncertainty. How do you assign a "5" to a task that an AI can generate in 45 seconds, but might take a human 2 hours to review and debug?

Transitioning to Specification-Complexity Scoring

When planning sprints for AI agents, you must decouple code generation time from the story point value. Instead, point the ticket on the human effort needed to specify the work and verify the result.

- Generation Time: Near zero.

- Prompting Effort: The time it takes a senior engineer or PO to write the perfect specification.

- Review & QA Effort: The time required to validate the agent's output against the codebase architecture.
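
One way to turn the three components above into a point value is sketched below. It assumes, purely for illustration, that one story point corresponds to roughly one hour of human effort and that the team uses the scale 1 through 13; `score_ticket` and both constants are hypothetical and should be calibrated against your own history.

```python
import bisect

FIBONACCI_POINTS = [1, 2, 3, 5, 8, 13]

# Assumption for illustration: one story point is about one hour of human effort.
HOURS_PER_POINT = 1.0


def score_ticket(prompting_hours, review_hours):
    """Return the smallest Fibonacci point value covering the human effort."""
    # Generation time is treated as zero, so only human effort counts.
    raw_points = (prompting_hours + review_hours) / HOURS_PER_POINT
    index = bisect.bisect_left(FIBONACCI_POINTS, raw_points)
    # Clamp to the largest value; a ticket this large should probably be split.
    return FIBONACCI_POINTS[min(index, len(FIBONACCI_POINTS) - 1)]


# A spec that takes 30 minutes to write and 2 hours to review scores a 3.
print(score_ticket(0.5, 2.0))
```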

Your new velocity metric for AI agents should track the number of successfully merged and deployed automated pull requests, rather than raw lines of code or traditional story points.
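
A hedged sketch of that metric follows. The `AgentPullRequest` record is a hypothetical shape; in practice the merged and deployed flags would come from your source-control and deployment tooling.

```python
from dataclasses import dataclass


@dataclass
class AgentPullRequest:
    ticket: str
    merged: bool
    deployed: bool


def agent_velocity(prs):
    """Count agent PRs that were both merged and deployed this sprint."""
    return sum(1 for pr in prs if pr.merged and pr.deployed)


def merge_rate(prs):
    """Share of agent PRs that shipped; 0.0 when the agent opened none."""
    if not prs:
        return 0.0
    return agent_velocity(prs) / len(prs)
```

Tracking the rate alongside the raw count helps separate an agent that ships a lot from one that merely opens a lot of pull requests.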


Structuring the Sprint Planning Ceremony

Integrating AI into your standard two-week sprint requires a modified agenda for your planning ceremony. You can no longer just ask developers what they want to pull from the top of the backlog.

Step 1: Human Capacity vs. Agent Capacity

Begin the meeting by defining your human capacity (vacations, holidays, core hours). Then, define your Agent Capacity. Does your tier allow for 50 autonomous runs per day? Are you limited by API costs?

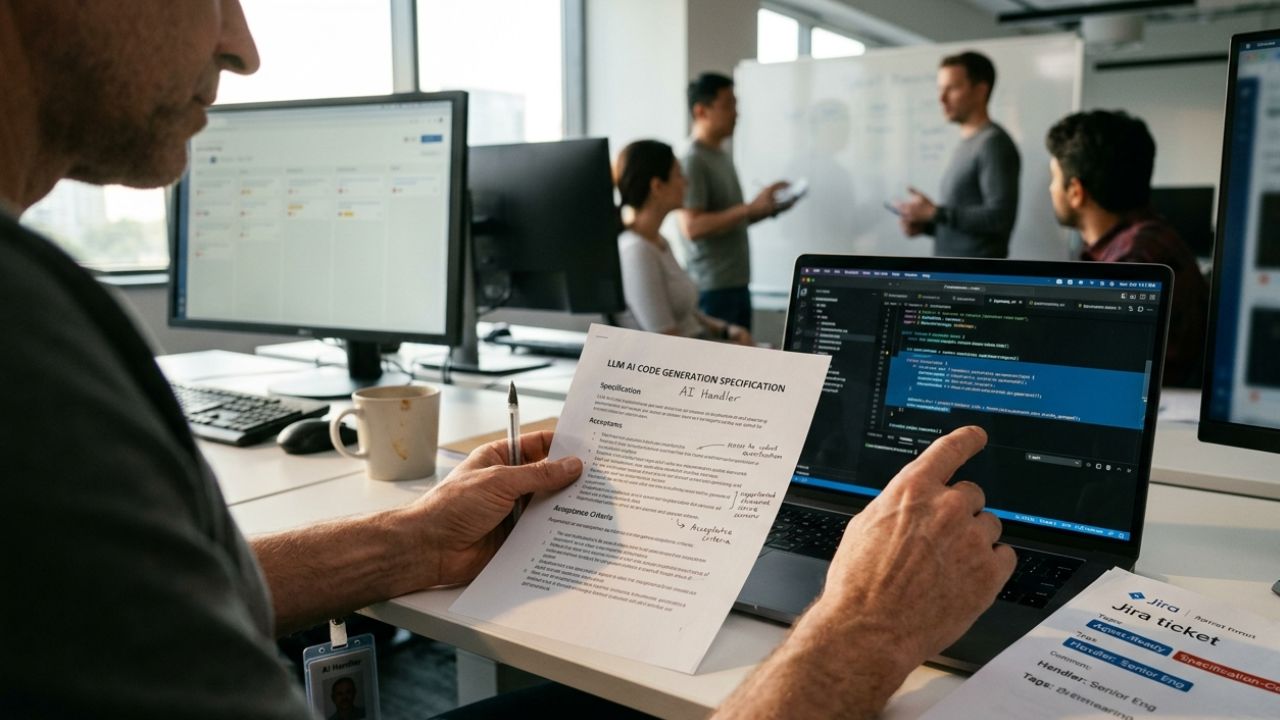

Establish a "budget" for AI agent attempts for the sprint. Treat the AI as a junior developer that requires pairing. Assign a senior engineer as the "handler" for specific AI-assigned tickets.
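
The budget can be worked out with simple arithmetic. The sketch below assumes two limits, a per-day run allowance from your plan tier and a sprint-level spending cap; the function name and all figures are illustrative, not taken from any vendor's pricing.

```python
def sprint_run_budget(working_days, runs_per_day, cost_per_run, cost_cap):
    """Return the number of agent runs the sprint can afford."""
    # Limit imposed by the plan tier.
    by_tier = working_days * runs_per_day
    # Limit imposed by spend; floor division because a partly funded run is not a run.
    by_cost = int(cost_cap // cost_per_run) if cost_per_run > 0 else by_tier
    return min(by_tier, by_cost)


# 10 working days at 50 runs per day allows 500 runs,
# but a 600.0 cap at 2.0 per run only funds 300.
print(sprint_run_budget(10, 50, 2.0, 600.0))
```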

Step 2: The Specification Handshake

For every ticket pulled into the sprint for an AI agent, the team must agree on the code generation specification for Devin or your tool of choice.

The handler must confirm:

- Does the agent have access to the right repository context?

- Are the exact input/output parameters defined?

- What existing test suites must pass before the human reviews it?
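
The three handler questions can be captured as a small record that blocks assignment until every answer is filled in. This is a sketch under assumed names; `SpecHandshake` and its fields are hypothetical and not tied to Jira, Devin, or any other tool.

```python
from dataclasses import dataclass, field


@dataclass
class SpecHandshake:
    ticket: str
    repo_context_granted: bool = False
    io_parameters_defined: bool = False
    required_test_suites: list = field(default_factory=list)

    def missing(self):
        """List the handshake questions that are still unanswered."""
        gaps = []
        if not self.repo_context_granted:
            gaps.append("repository context")
        if not self.io_parameters_defined:
            gaps.append("input/output parameters")
        if not self.required_test_suites:
            gaps.append("required test suites")
        return gaps

    def ready_for_agent(self):
        return not self.missing()
```

Returning the list of gaps, rather than a bare true or false, gives the handler something concrete to raise during planning.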

A rigorous specification framework for Devin AI or similar tools makes these handshakes far more reliable.

Step 3: Mapping Dependencies

AI agents work incredibly fast, which means they can create bottlenecks downstream. If an agent completes a massive API refactor on Day 1, your QA team might be overwhelmed on Day 2.

Map dependencies carefully. Stagger AI task execution so that human code reviewers have a steady, manageable stream of automated PRs to evaluate throughout the sprint, rather than a massive dump on Friday afternoon.
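
A simple greedy scheduler illustrates the staggering idea. It assumes each agent task produces one PR to review, that reviewers can handle a fixed number of PRs per day, and that a dependent task must land on a later day than the tasks it depends on. The function and its parameters are hypothetical.

```python
def stagger_agent_tasks(tasks, sprint_days, reviews_per_day):
    """Assign tasks to days so no day exceeds the review capacity.

    `tasks` is a list of (ticket, depends_on) pairs, where `depends_on`
    is a list of tickets that must be scheduled on an earlier day.
    Returns {day: [tickets]}, with days numbered from 1.
    """
    schedule = {day: [] for day in range(1, sprint_days + 1)}
    day_of = {}
    pending = list(tasks)
    for day in range(1, sprint_days + 1):
        still_pending = []
        for ticket, depends_on in pending:
            # An unscheduled or same-day dependency counts as not done.
            deps_done = all(day_of.get(dep, day) < day for dep in depends_on)
            if deps_done and len(schedule[day]) < reviews_per_day:
                schedule[day].append(ticket)
                day_of[ticket] = day
            else:
                still_pending.append((ticket, depends_on))
        pending = still_pending
    if pending:
        raise ValueError(f"Could not schedule: {[t for t, _ in pending]}")
    return schedule
```

If the function raises, the sprint holds more agent work than the reviewers can absorb, which is worth knowing during planning rather than on the last day.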

Updating Your Definition of Done (DoD)

Perhaps the most critical adjustment in sprint planning for AI agents is updating your team's Definition of Done. Do we need a new Definition of Done (DoD) for AI code? The answer is an absolute yes.

The AI-Specific DoD Checklist

Code generated by an LLM inherently carries different risks than human-written code, specifically regarding hallucinations and secure coding practices. Your new DoD must explicitly mandate:

- Zero-Hallucination Checks: The code does not reference non-existent libraries or fabricated internal APIs.

- Security Scanning: Mandatory automated SAST/DAST scanning before human review.

- Test Coverage: The agent must write comprehensive unit tests for its own logic, achieving a minimum of 85% coverage.

- Human Sign-Off: At least one Senior Engineer must manually review the PR, verifying architectural alignment.

By enforcing these strict boundaries, you prevent technical debt from compounding at machine speed.
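
The checklist can be enforced as a merge gate. The sketch below assumes the CI pipeline has already produced a summary for each agent PR; the `AgentPrReport` fields are hypothetical stand-ins for whatever your scanners and coverage tools actually emit.

```python
from dataclasses import dataclass

MIN_COVERAGE = 0.85  # The 85% floor from the checklist above.


@dataclass
class AgentPrReport:
    unresolved_imports: list    # Names that do not resolve to real packages or internal APIs.
    security_scan_passed: bool  # Result of the automated SAST/DAST stage.
    coverage: float             # Unit-test coverage as a fraction, 0.0 to 1.0.
    senior_approvals: int       # Number of senior-engineer approvals.


def dod_failures(report):
    """Return the DoD items this PR fails; an empty list means it may merge."""
    failures = []
    if report.unresolved_imports:
        failures.append("hallucinated references: " + ", ".join(report.unresolved_imports))
    if not report.security_scan_passed:
        failures.append("security scan failed")
    if report.coverage < MIN_COVERAGE:
        failures.append(f"coverage {report.coverage:.0%} below {MIN_COVERAGE:.0%}")
    if report.senior_approvals < 1:
        failures.append("missing senior sign-off")
    return failures
```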

Managing Sprint Execution and Standups

Once the sprint begins, the daily standup must also evolve. Can Devin AI participate in a daily standup? While the AI itself won't speak on your Zoom call, its assigned "handler" must report on its behalf.

The Handler's Daily Update

The human developer overseeing the agent should structure their standup update as follows:

- What the Agent Accomplished: "The agent successfully generated the authentication middleware."

- What the Human is Doing: "I am currently reviewing the agent's PR and resolving a minor type-safety issue it hallucinated."

- Blockers: "The agent is blocked because the Jira ticket lacked the updated database schema."

This keeps the entire Scrum team aligned and ensures the autonomous workflows remain fully transparent.

Frequently Asked Questions (FAQ)

How do agentic IDEs change agile development?

Agentic IDEs shift agile development from a purely manual coding process to a system of architectural orchestration. Developers spend less time typing syntax and more time reviewing logic, which can significantly increase sprint velocity and changes standard capacity metrics.

How do you integrate AI agents into a Scrum team?

Integrate agents by treating them as specialized team members assigned to specific, well-defined backlog items. Require human developers to act as "handlers" who write the initial prompts, review the generated pull requests, and report on the agent's progress during daily standups.

Can Devin AI participate in a daily standup?

While autonomous agents cannot physically speak in meetings, they are integrated into standups via their human handlers. The handler reports on the agent's task status, any blockers encountered during generation, and the time required for human code review.

Do we need a new Definition of Done (DoD) for AI code?

Yes, updating the Definition of Done is strictly required. AI-generated code must pass distinct criteria, including mandatory security scans, checks for hallucinated dependencies, automated unit test generation, and strict manual code review by a senior human engineer before merging.

What is the role of a Product Owner in agentic coding?

The Product Owner transitions from writing general user stories to crafting highly technical, precise system specifications. They must ensure Jira tickets contain rigorous acceptance criteria, exact data models, and edge-case definitions so the AI can execute without ambiguity.

Conclusion

Mastering how to do sprint planning for AI agents is the defining leadership skill of the current software era. By updating your Definition of Done, transitioning to specification-based story pointing, and rigorously managing your backlog, you can harness the raw speed of autonomous agents without sacrificing enterprise reliability.

Treat your AI tools with the same structured discipline you apply to your human engineers, and your agile velocity should rise with them.