The Devin AI Specification Framework Exposed: Mastering the LLM Code Generation Specification for Devin

Key Takeaways:

- Vague Inputs Fail: You can't just throw a vague Jira ticket at an autonomous coding agent and expect enterprise-grade software.

- Prompting is Architecture: Bad prompts equal bad code. Your sprint planning must shift from estimating human effort to engineering strict architectural prompts.

- Context Boundaries: A key to reducing hallucinations is explicitly defining the agent's file access and API limitations before the sprint begins.

- TDD is Mandatory: Autonomous agents must write failing tests based on your acceptance criteria before generating a single line of application logic.

- The PO Evolution: Product Owners must transition from writing user stories to crafting deterministic system specifications.

Bad prompts equal bad code. If you are transitioning your engineering department to an autonomous workflow, you have probably already read "Agentic IDEs: Cut Agile Dev Cycles by 40%."

However, that massive boost in velocity is entirely dependent on how you feed information to the machine.

Sprint planning for human developers relies on shared context, implicit knowledge, and verbal clarification. AI agents possess none of these.

To successfully integrate these tools into your agile cadence, you must master the kind of LLM code generation specification that lets Devin autonomously resolve Jira tickets.

This deep-dive exposes the rigorous specification framework required to drive Devin AI and prevent catastrophic logic flaws from destroying your codebase.

The Core Problem: Why Agile Fails Autonomous Agents

When traditional agile teams attempt to integrate autonomous software engineers, they usually fail at the backlog refinement stage.

A standard Jira ticket often reads: "As a user, I want to reset my password so I can regain access to my account."

To a human engineer, this implies creating an email token, updating the database schema, building a frontend form, and writing integration tests. To an AI, this vague request is a recipe for disaster.

The Cost of Hallucinated Logic

If an LLM lacks strict boundaries, it will hallucinate dependencies.

It might import a deprecated authentication library, rewrite your entire routing middleware, or expose sensitive database fields.

You must adopt specification-driven AI development. This methodology forces the agile team to define the technical implementation limits before the AI ever sees the ticket.

A basic engineering principle applies to automated code generation: predictable outputs require precise inputs. Your sprint planning must produce machine-readable specifications.

Mastering the LLM Code Generation Specification for Devin

To extract actual value from autonomous agents, you must structure your sprint backlog items using a highly specific framework.

An LLM code generation specification for Devin requires a multi-layered approach to context setting.

Here is the exact framework elite teams use to construct their AI-assigned tickets.

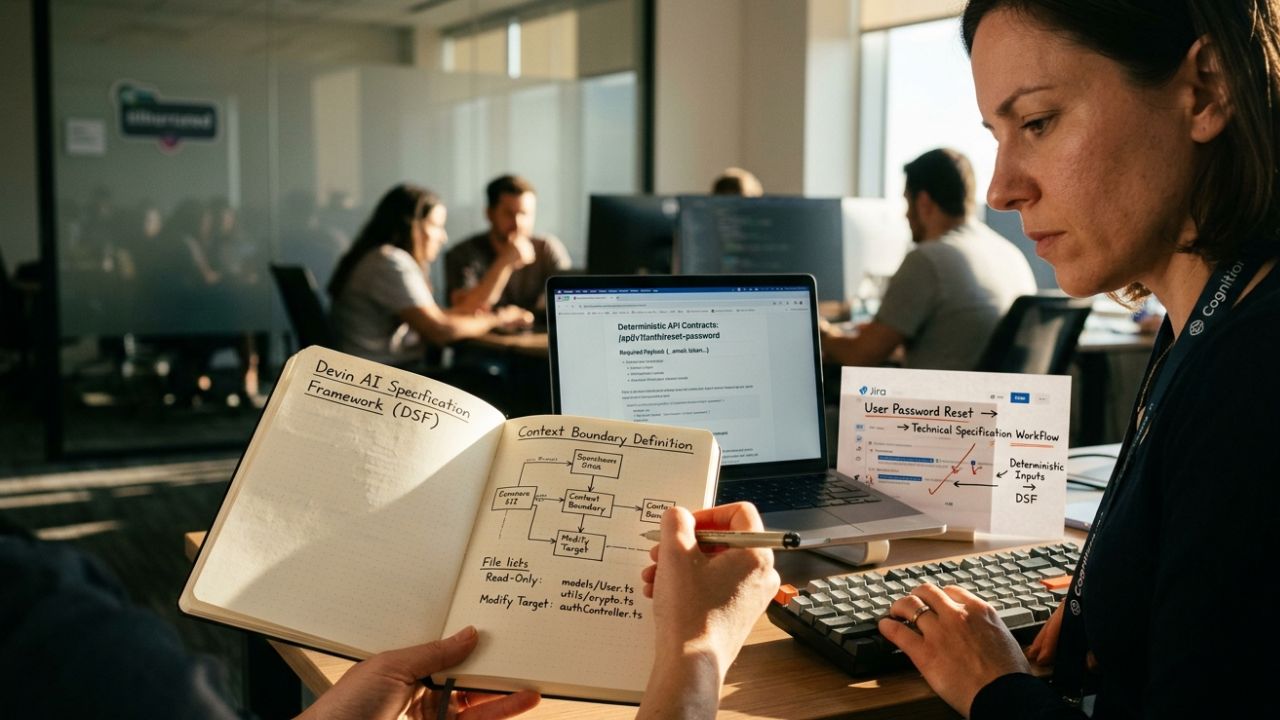

Layer 1: The Context Boundary Definition

Never give an autonomous agent access to your entire repository without boundaries.

You must explicitly define which files the agent is allowed to read and which files it is allowed to modify.

Example Specification Input:

- Read-Only Context: `/src/models/User.ts`, `/src/utils/crypto.ts`

- Modification Target: `/src/controllers/authController.ts`

- Prohibited Files: Do not modify any files in the `/src/legacy-billing/` directory.

By boxing the agent in, you drastically reduce the risk of cascading failures across undocumented monolithic architectures.
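The boundary above can also be expressed as data that a script checks, rather than as prose the agent is merely asked to respect. The following is a minimal sketch, assuming a hypothetical `ContextBoundary` shape and `classify` helper; neither is part of any Devin API, and the deny-by-default rule for unlisted files is a design choice of this example.

```typescript
// Hypothetical shape for a ticket's context boundary; not a Devin API.
type Access = "modify" | "read" | "prohibited" | "out-of-scope";

interface ContextBoundary {
  readOnly: string[];       // exact file paths the agent may read
  modifiable: string[];     // exact file paths the agent may change
  prohibitedDirs: string[]; // directory prefixes the agent must never touch
}

const boundary: ContextBoundary = {
  readOnly: ["/src/models/User.ts", "/src/utils/crypto.ts"],
  modifiable: ["/src/controllers/authController.ts"],
  prohibitedDirs: ["/src/legacy-billing/"],
};

// Classify a path. Prohibited directories win over every other rule,
// and anything not listed is treated as out of scope (deny by default).
function classify(path: string, b: ContextBoundary): Access {
  // indexOf(dir) === 0 means the path begins with the directory prefix.
  if (b.prohibitedDirs.some((dir) => path.indexOf(dir) === 0)) return "prohibited";
  if (b.modifiable.indexOf(path) !== -1) return "modify";
  if (b.readOnly.indexOf(path) !== -1) return "read";
  return "out-of-scope";
}

// True only when the agent is allowed to write to the path.
function mayModify(path: string, b: ContextBoundary): boolean {
  return classify(path, b) === "modify";
}
```

A check like `mayModify` could run in CI against the files touched by the agent's pull request, so a boundary violation fails the build instead of relying on a reviewer to spot it.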

Layer 2: Translating Jira Tickets into LLM Prompts

How do you translate Jira tickets into LLM prompts? You must convert user behavior into exact data contracts.

Instead of saying "build a reset password form," your specification must dictate the exact API request and response payload.

The Data Contract Requirement:

- Endpoint: `POST /api/v1/auth/reset-password`

- Required Payload: `{ "email": "string", "token": "string" }`

- Expected Response (200): `{ "message": "Password updated successfully." }`

- Expected Response (401): `{ "error": "Invalid or expired token." }`

When the agent has a rigid data contract, it writes code to satisfy the contract, eliminating creative guesswork.
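One way to make the contract above machine-checkable is to restate it as types plus a runtime guard. This is only a sketch under stated assumptions: the names `ResetPasswordRequest`, `isResetPasswordRequest`, `TokenVerifier`, and `handleResetPassword` are hypothetical, and because the contract defines only 200 and 401 responses, malformed bodies are mapped to 401 here as well.

```typescript
// Request and response shapes copied from the contract above.
interface ResetPasswordRequest {
  email: string;
  token: string;
}

type ResetPasswordResponse =
  | { status: 200; body: { message: string } }
  | { status: 401; body: { error: string } };

// Runtime guard: a body only counts as a request if both fields are strings.
function isResetPasswordRequest(value: unknown): value is ResetPasswordRequest {
  if (typeof value !== "object" || value === null) return false;
  const v = value as Record<string, unknown>;
  return typeof v.email === "string" && typeof v.token === "string";
}

// Hypothetical token check, injected so the sketch needs no database.
type TokenVerifier = (email: string, token: string) => boolean;

function handleResetPassword(
  body: unknown,
  verify: TokenVerifier
): ResetPasswordResponse {
  // The contract lists no 400 response, so malformed input also yields 401.
  if (!isResetPasswordRequest(body) || !verify(body.email, body.token)) {
    return { status: 401, body: { error: "Invalid or expired token." } };
  }
  // A real handler would update the stored password here; how the new
  // password arrives is not specified by the contract above.
  return { status: 200, body: { message: "Password updated successfully." } };
}
```

Because the response is a union keyed on `status`, the compiler rejects any code path that returns a status or body the contract does not list.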

The Role of a Product Owner in Agentic Coding

What is the role of a product owner in agentic coding? The role fundamentally shifts from user empathy to technical orchestration.

Product Owners (POs) can no longer rely on developers to fill in the architectural blanks during a sprint.

From User Stories to System Prompts

The modern PO must understand database schemas, API limits, and system architecture.

During backlog refinement, the PO must collaborate directly with the Lead Engineer to attach technical documentation to every user story.

Acceptance Criteria as Code

Acceptance criteria must be written in a way that an AI can natively understand.

This often means providing the PO with templates that enforce Test-Driven Development (TDD) principles. The criteria must be binary: the code either passes the explicit assertion or it fails.
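A template of this kind might look like the following sketch. The `AcceptanceCriterion` fields and the `renderCriterion` and `isBinary` helpers are illustrative assumptions, not an established format; the point is that every criterion names one exact status and one exact body, so it can only pass or fail.

```typescript
// Hypothetical template a PO fills in; field names are illustrative.
interface AcceptanceCriterion {
  id: string;
  given: string;          // precondition in plain language
  request: string;        // method and path of the call under test
  expectedStatus: number; // exact HTTP status the test must assert
  expectedBody: string;   // exact JSON body the test must assert
}

// Render one criterion as a single pass/fail sentence for the agent prompt.
function renderCriterion(c: AcceptanceCriterion): string {
  return (
    `[${c.id}] Given ${c.given}, when the client sends ${c.request}, ` +
    `the response MUST have status ${c.expectedStatus} and body ${c.expectedBody}.`
  );
}

// A criterion is treated as binary only if every field is filled in
// and the status is a plausible HTTP status code.
function isBinary(c: AcceptanceCriterion): boolean {
  return (
    c.id.trim() !== "" &&
    c.given.trim() !== "" &&
    c.request.trim() !== "" &&
    c.expectedBody.trim() !== "" &&
    c.expectedStatus >= 100 &&
    c.expectedStatus <= 599
  );
}

const expiredToken: AcceptanceCriterion = {
  id: "AC-1",
  given: "an expired reset token",
  request: "POST /api/v1/auth/reset-password",
  expectedStatus: 401,
  expectedBody: '{ "error": "Invalid or expired token." }',
};
```

Rejecting any criterion for which `isBinary` returns false during refinement keeps phrases like "should work correctly" out of the ticket.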

How Autonomous Coding Agents Test Their Own Logic

A core component of the Devin framework is self-verification. How do autonomous coding agents test their own logic?

They do it by running code and tests in their own sandboxed environment and iterating on the results, much like a continuous REPL (Read-Eval-Print Loop). However, the agent only knows what to test if you tell it.

Mandating AI-Driven TDD in the Specification

Your sprint specification must demand that the AI writes the test suite before it alters the application code.

Required Prompt Instruction:

"Before implementing the authController logic, generate a Jest test suite in authController.test.ts. The suite must mock the database layer and assert that an expired token returns a 401 status. Run the tests to confirm they fail. Only proceed to implementation after the failing tests are committed."

If your organization struggles with this testing pipeline, it helps to understand how to automate QA testing with Cursor AI.

This parallel workflow is essential for keeping your CI/CD pipelines green when agents are pushing code rapidly.
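The test-first order that the required prompt demands can be sketched in a few lines. To stay runnable without a test runner, this example uses plain TypeScript instead of Jest, and every name in it (`ResetHandler`, `mockTokenStore`, `expiredTokenReturns401`) is hypothetical. The test exists first and fails against the missing implementation, and only then is the handler written.

```typescript
interface HttpResult {
  status: number;
  body: { message?: string; error?: string };
}

type ResetHandler = (token: string, now: number) => HttpResult;

// Stand-in for the mocked database layer: token -> expiry timestamp.
const mockTokenStore: { [token: string]: number | undefined } = {
  "expired-token": 1000,
  "valid-token": 5000,
};

// The test, written first: an expired token must yield a 401.
function expiredTokenReturns401(handler: ResetHandler): boolean {
  try {
    return handler("expired-token", 2000).status === 401;
  } catch (err) {
    return false; // an unimplemented handler counts as a failing test
  }
}

// Step 1 (red): the handler does not exist yet, so the test must fail.
const notImplemented: ResetHandler = () => {
  throw new Error("not implemented");
};

// Step 2 (green): the smallest implementation that satisfies the test.
const resetPassword: ResetHandler = (token, now) => {
  const expiresAt = mockTokenStore[token];
  if (expiresAt === undefined || expiresAt <= now) {
    return { status: 401, body: { error: "Invalid or expired token." } };
  }
  return { status: 200, body: { message: "Password updated successfully." } };
};

const red = expiredTokenReturns401(notImplemented); // false
const green = expiredTokenReturns401(resetPassword); // true
```

Requiring the red result to be committed before the green one gives reviewers evidence that the test can actually fail, which guards against assertions that pass vacuously.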

Reviewing Autonomous AI Code Generation

How should you review autonomous AI code generation? You do not review the syntax; you review the architectural alignment.

When a human developer reviews Devin's pull request, they should check the agent's work log. Did the agent attempt to install an unauthorized npm package? Did it modify a file outside of its Context Boundary?

If the agent violated the initial specification, the PR must be rejected immediately.
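Part of this review can be automated before a human opens the pull request. The sketch below assumes a hypothetical `AgentChangeSet` summary (the files the agent modified and the npm packages it added) and a hypothetical `TicketSpec`; neither reflects an actual Devin log format.

```typescript
// Hypothetical summary of an agent's pull request; not a Devin log format.
interface AgentChangeSet {
  modifiedFiles: string[];
  addedPackages: string[];
}

interface TicketSpec {
  modificationTargets: string[]; // files the agent may change
  allowedPackages: string[];     // npm packages the agent may add
}

// Return one human-readable violation per rule the change set breaks.
function auditChangeSet(changes: AgentChangeSet, spec: TicketSpec): string[] {
  const violations: string[] = [];
  for (const file of changes.modifiedFiles) {
    if (spec.modificationTargets.indexOf(file) === -1) {
      violations.push(`modified file outside context boundary: ${file}`);
    }
  }
  for (const pkg of changes.addedPackages) {
    if (spec.allowedPackages.indexOf(pkg) === -1) {
      violations.push(`unauthorized npm package: ${pkg}`);
    }
  }
  return violations;
}

const ticketSpec: TicketSpec = {
  modificationTargets: [
    "/src/controllers/authController.ts",
    "/src/controllers/authController.test.ts",
  ],
  allowedPackages: [],
};
```

An empty result means the PR can move on to architectural review; any entry in the list is grounds for immediate rejection under the rule above.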

Devin vs. Copilot Workspace in Agile Sprints

Engineering leaders constantly ask: What is the difference between Devin and Copilot Workspace? The difference lies in autonomy versus assistance.

The Copilot Workspace Paradigm

Copilot Workspace is highly interactive. It suggests a plan, the human edits the plan, and then it generates code file-by-file.

It requires constant human nudging and active IDE tabs to maintain context. It is an assistant that accelerates human typing speed.

The Devin Autonomous Paradigm

Devin operates as an independent actor. You provide the specification framework at the start of the sprint, and Devin executes it.

It reads documentation, spawns its own terminal, debugs its own compiler errors, and submits a finished pull request.

This level of autonomy is exactly why preventing AI hallucinations in code generation is so critical.

If your upfront specification is flawed, an autonomous agent will confidently build the wrong feature at lightning speed, wasting valuable sprint capacity.

Strategic Implementation for Your Next Sprint

To effectively harness these tools, you must restructure your Agile ceremonies.

The New Sprint Planning Agenda:

- Capacity Allocation: Treat your AI agent as a junior developer with infinite typing speed but zero domain knowledge.

- Specification Auditing: Dedicate 50% of the planning meeting to reviewing the data contracts, context boundaries, and test assertions within the Jira tickets.

- Dependency Mapping: Ensure the AI is not assigned tickets that require undocumented, tribal knowledge from legacy systems.
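The specification audit in this agenda lends itself to a simple readiness check. The following sketch assumes a hypothetical `AgentTicket` shape in which each optional section corresponds to one part of the framework; a real Jira integration would need its own field mapping.

```typescript
// Hypothetical ticket shape; each optional section mirrors one part
// of the specification framework.
interface AgentTicket {
  key: string;
  contextBoundary?: { readOnly: string[]; modifiable: string[] };
  dataContract?: { endpoint: string; responses: number[] };
  testAssertions?: string[];
}

// List the sections a ticket still lacks.
// An empty list means the ticket is ready to hand to the agent.
function missingSections(t: AgentTicket): string[] {
  const missing: string[] = [];
  if (!t.contextBoundary || t.contextBoundary.modifiable.length === 0) {
    missing.push("context boundary");
  }
  if (!t.dataContract || t.dataContract.responses.length === 0) {
    missing.push("data contract");
  }
  if (!t.testAssertions || t.testAssertions.length === 0) {
    missing.push("test assertions");
  }
  return missing;
}

const vagueTicket: AgentTicket = { key: "AUTH-101" };

const readyTicket: AgentTicket = {
  key: "AUTH-102",
  contextBoundary: {
    readOnly: ["/src/models/User.ts"],
    modifiable: ["/src/controllers/authController.ts"],
  },
  dataContract: { endpoint: "POST /api/v1/auth/reset-password", responses: [200, 401] },
  testAssertions: ["expired token returns 401"],
};
```

Running `missingSections` over the sprint backlog before planning gives the team a concrete list of tickets that are not yet safe to assign to an agent.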

Recent analyses by top software management consultancies indicate that teams dedicating more time to specification design upfront experience a 60% reduction in AI-generated technical debt.

Frequently Asked Questions (FAQ)

How does Devin AI process specifications?

Devin AI processes specifications by analyzing strict data contracts, bounded file contexts, and explicit acceptance criteria. Unlike standard chatbots, it uses these parameters to autonomously navigate directories, read documentation, and write code that strictly adheres to the provided logical boundaries.

How do you translate Jira tickets into LLM prompts?

You translate them by stripping away user-centric narratives and replacing them with deterministic technical requirements. A standard ticket must be reformatted to include specific API endpoints, required JSON payloads, exact error codes, and explicit file modification limits before an agent can process it.

What is the difference between Devin and Copilot Workspace?

Copilot Workspace acts as an advanced assistant that requires constant human guidance and relies heavily on active IDE context. Devin is an autonomous agent that takes an upfront specification, manages its own terminal, debugs its own errors, and delivers a completed pull request independently.

What is the role of a Product Owner in agentic coding?

The Product Owner transitions from writing empathetic user stories to designing highly technical, machine-readable system specifications. They collaborate with lead engineers to ensure every backlog item contains exact data contracts, architectural constraints, and testable acceptance criteria for the AI.

How do autonomous coding agents test their own logic?

They utilize isolated environments to run automated tests. By enforcing Test-Driven Development (TDD) in the initial specification, the agent is instructed to write failing unit tests first, implement the application logic, and autonomously debug the code until all self-generated tests pass successfully.

Conclusion

Integrating autonomous workflows into your agile cycles is not about writing faster; it is about thinking more clearly.

By adopting a rigorous LLM code generation specification for Devin, you transition your engineering team from manual coders to architectural orchestrators.

Stop relying on implicit human context to carry your sprints. Build rigorous, explicit prompt frameworks, define your data contracts upfront, and watch your agile velocity climb without sacrificing security.