Why Distributed AI Architecture Will Break Your Sprint

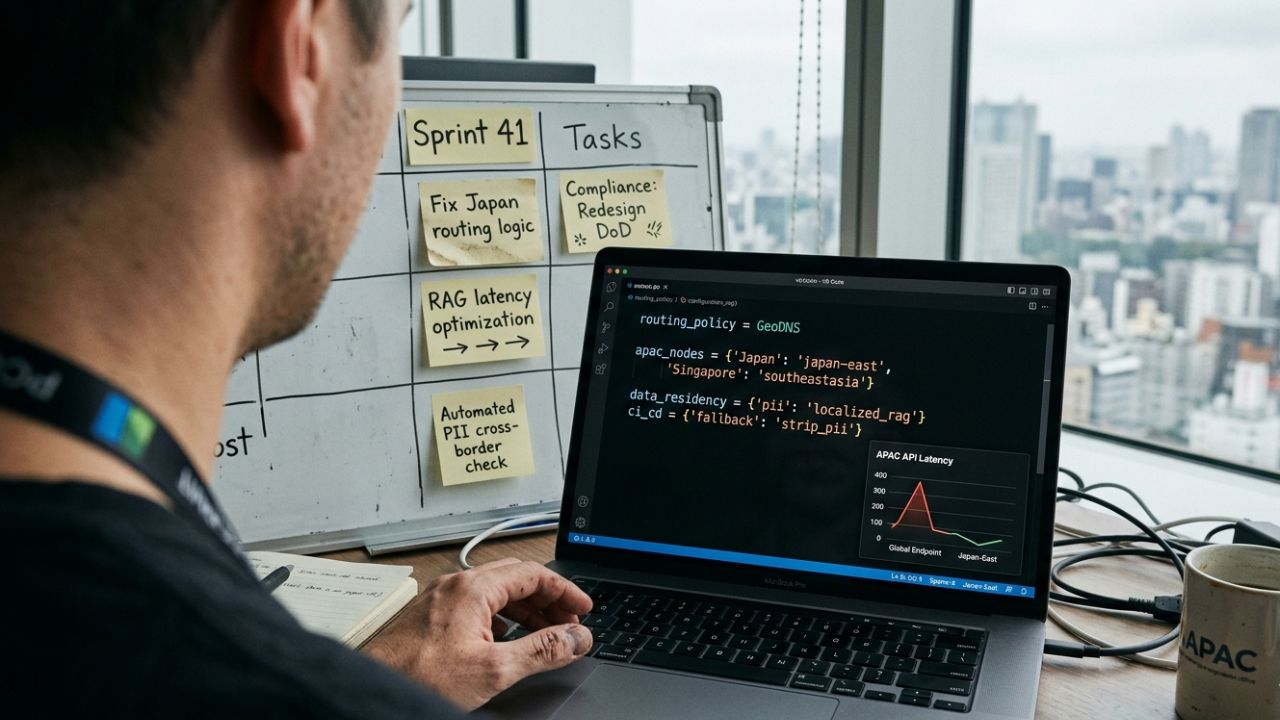

With Microsoft's localized compute expansion in Japan, software engineers and architects must pivot to building distributed AI architecture for APAC. This requires adapting CI/CD pipelines for multi-region deployment, ensuring stringent data residency compliance, and optimizing API routing to slash latency across Asian nodes.

For years, software development teams in the Asia-Pacific region have operated under a silent compromise. We built incredible applications, but we accepted that our AI models lived across the ocean. Every API call, every natural language prompt, and every complex data inference had to travel thousands of miles to server farms in the United States. This architectural debt is no longer sustainable.

Following Microsoft's $10B Japan investment, the game has fundamentally changed. The sudden availability of hyperscale compute directly within the APAC grid means that latency is no longer an excuse. However, this massive infrastructure push forces software architects to completely rethink how they manage cross-border data residency and rebuild their CI/CD pipelines.

The End of US-Centralized AI Endpoints

Historically, when an engineering team in Singapore, Sydney, or Mumbai spun up an enterprise AI application, the default configuration pointed to `us-east-1` or `us-west-2`. It was the path of least resistance. The documentation was clearer, the models were updated faster, and the infrastructure was perceived as more robust.

This reliance created a massive latency bleed. A 200-millisecond round trip might be acceptable for a background batch process, but it is fatal for real-time conversational agents, autonomous trading algorithms, or edge-based IoT analytics. The user experience degradation is palpable, and the financial cost of keeping network connections open over those distances is staggering.

The decentralization of compute power means the US-centralized endpoint is officially obsolete for APAC enterprise software. If your software team isn't routing AI queries locally, you are not only bleeding latency, but you are also actively violating emerging data sovereignty laws. The shift requires a total architectural teardown of how your application handles payload transmission.

Building Distributed AI Architecture for APAC Teams

Transitioning away from a single, monolithic AI endpoint requires a fundamental shift in how developers think about state, memory, and routing. Building distributed AI architecture for APAC teams is not merely changing a URL in an environment file. It involves re-engineering the application layer to be geographically aware.

When you deploy an application across multiple regions, your Large Language Models (LLMs) must be localized. This means maintaining model parity across different geographical nodes. If a developer pushes a fine-tuned update to a model, that update must propagate seamlessly across the Japan node, the Indian node, and the Australian node without causing version conflicts.

Furthermore, state management becomes exponentially more complex. If a user travels from Tokyo to Singapore, their session data and context window must either follow them dynamically or be reconstructable at the edge. This demands a robust synchronization strategy that many legacy applications simply do not possess.

Optimizing API Latency with Localized Nodes

The primary advantage of this infrastructure shift is speed. To truly optimize API latency, software teams must implement intelligent routing layers. Geo-DNS and advanced API gateways become critical components. When a user in Manila triggers an AI action, the system must instantly calculate the fastest, most compliant node—likely in Japan—and route the payload accordingly.

This also requires sophisticated fallback mechanisms. If the primary localized node experiences an outage or a massive traffic spike, the routing logic must gracefully degrade. It should reroute the query to the next closest node, or perhaps strip the payload of sensitive data and send it to a global endpoint, all within milliseconds.

Navigating Data Residency in Your CI/CD Pipeline

Perhaps the most daunting challenge for DevOps engineers is integrating data residency compliance directly into the CI/CD pipeline. You can no longer push a universal build to all regions simultaneously if those regions have conflicting laws regarding data storage and processing.

Japan's regulations differ from India's, which differ wildly from the GDPR standards applied in European nodes. Your continuous integration pipeline must now include automated compliance checks. Before code is deployed, the pipeline must verify that the infrastructure-as-code (IaC) templates explicitly forbid the transfer of Personally Identifiable Information (PII) across restricted borders.

This is where concepts like an offshore agentic RAG deployment architecture become vital. Retrieval-Augmented Generation systems must be built so that the sensitive vector database remains strictly localized, even if the general LLM processing is handled in a neighboring country. Your CI/CD tools must enforce this separation programmatically.

How Scrum Masters Must Adapt Sprints for Regional Compliance

This architectural revolution will inevitably impact your agile workflows. Scrum Masters and Product Owners must prepare for a significant hit to initial sprint velocity. You are no longer just building features; you are building compliant, multi-region distributed systems.

The "Definition of Done" must be rewritten. A feature is no longer done when it works on the developer's local machine or a centralized staging server. It is only done when it has been successfully tested across simulated multi-region deployments, proving that data does not leak across borders and that latency targets are met at the edge.

Backlogs must be heavily populated with infrastructure hardening tasks. Scrum Masters must advocate for dedicated time to refactor legacy API calls, update environment variable management, and test Geo-DNS routing logic. Pretending that the $10B shift in compute infrastructure won't break your sprint planning is a recipe for catastrophic deployment failures.