Nvidia Redefines AI TCO: Why Cost Per Token is the Only Metric That Matters

The End of FLOPS: Datacenters Evolve Into AI Token Factories

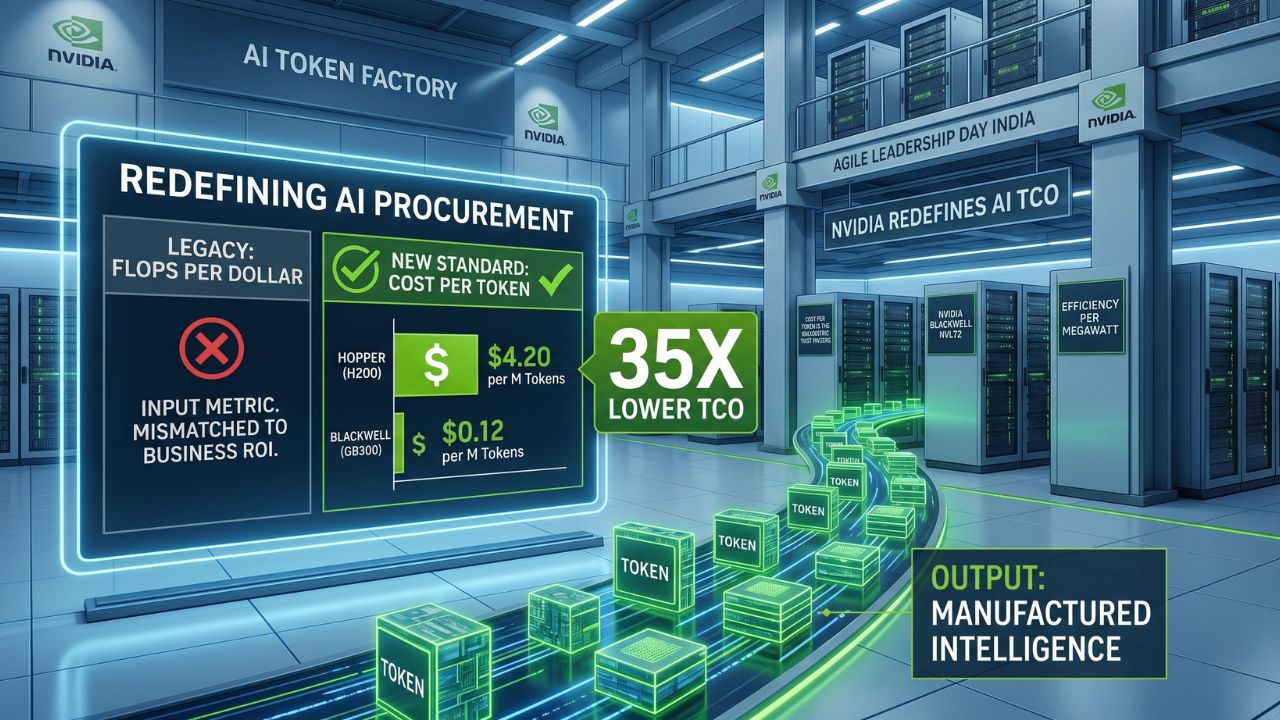

Nvidia has officially declared that evaluating AI hardware by "FLOPS per dollar" or raw compute cost is a fundamentally flawed approach for the modern enterprise. In the generative and agentic AI era, legacy data centers have rapidly evolved into "AI token factories." Because AI inference is now their primary workload, their true output is manufactured intelligence, measured strictly in tokens.

Optimizing for raw compute costs (the input) while the business runs on token generation (the output) creates a catastrophic mismatch in ROI forecasting. Nvidia's latest benchmark data highlights this divide flawlessly. Looking at compute cost alone, the Blackwell platform (GB300 NVL72) costs $2.65 per hour—nearly double the $1.41 hourly rate of the Hopper (HGX H200) architecture.

However, looking at the actual delivered output changes the equation entirely. Blackwell delivers 6,000 tokens per second per GPU compared to Hopper’s 90, representing a massive 65x increase in throughput. For enterprise buyers, this translates to a plunge in the cost per million tokens from $4.20 down to just $0.12—an unprecedented 35x cost reduction.

Full-Stack Optimization: MoE Models and the "Inference Iceberg"

For software architects and developers, this paradigm shift demands a laser focus on maximizing the "denominator" of the cost equation: delivered token output. Nvidia refers to this as the "inference iceberg." While the hourly cost of a GPU sits visibly above the surface, the massive sub-surface factors dictating real-world token yield are driven by relentless algorithmic, hardware, and software codesign.

The true architectural value of Blackwell is unlocked when deploying large-scale mixture-of-experts (MoE) reasoning models, which dominate modern AI applications. Engineering teams must ensure their inference stacks are capable of handling the massive "all-to-all" network traffic that MoE models generate. Furthermore, developer workflows must actively integrate advanced serving layer optimizations.

Without utilizing FP4 precision, speculative decoding, multi-token prediction, and KV-cache offloading, the token output denominator collapses. For engineers building agentic AI, which requires ultralow latency and massive input sequence lengths, a "cheaper" GPU that fails to support these deep-stack optimizations will ultimately throttle application performance and drive up the cost per interaction.

The 35x ROI Shift: Enterprise FinOps and Global Compute Arbitrage

For CEOs, CTOs, and CFOs, this data proves that basing procurement strategies on peak theoretical FLOPS or CapEx is a financial trap. Buying lower-cost silicon that generates significantly fewer tokens per second actively destroys the profit margin on every AI feature your enterprise ships. Token economics is the only Total Cost of Ownership (TCO) metric that directly accounts for real-world software optimization and utilization.

This shift is particularly critical for Indian Global Capability Centers (GCCs) and offshore infrastructure hubs facing severe power constraints. Blackwell's architecture delivers 2.8 million tokens per second per megawatt, a 50x leap over Hopper’s 54,000 tokens. This allows power-capped physical data centers to manufacture drastically more intelligence within the exact same energy footprint, fundamentally altering the ROI of sovereign AI investments.

To survive this transition, enterprise FinOps teams must immediately pivot their reporting from cloud compute hours to granular token economics. Leaders looking to restructure their financial models should consult The CFO’s Guide to Agentic AI Costs: From "Cost per Token" to "Cost per Outcome" to ensure scaling generative AI directly correlates with expanding profit margins rather than runaway cloud bills.

Frequently Asked Questions

FLOPS per dollar only measures raw computational input, whereas cost per token measures the actual manufactured output of intelligence. Optimizing for inputs while your applications rely on outputs creates a false economy that obscures the true Total Cost of Ownership.

The Nvidia Blackwell GB300 NVL72 drops the cost per million tokens to $0.12, compared to $4.20 on the Hopper HGX H200. Despite a higher hourly operational cost, Blackwell achieves a 35x cost reduction by generating 65x more tokens per second.

Maximizing token output requires deep hardware and software integration. Key drivers include support for FP4 precision, speculative decoding, KV-cache offloading, and advanced interconnects capable of handling the "all-to-all" traffic required by large-scale mixture-of-experts (MoE) models.