Build Your Own LMArena: 7-Step Internal Eval Pipeline

A procurement-grade methodology decode anchored to the LMSYS/LMArena Top Models 2026 leaderboard — how to mirror LMArena's pairwise Bradley-Terry scoring on your private enterprise data in seven concrete steps.

- The public LMArena prompt distribution skews toward general chat. Models that rank #1 publicly routinely fall to #3-#5 on internal arenas tested with code-review, RAG, document-extraction, or customer-support prompts.

- Bradley-Terry scoring is the same maximum-likelihood algorithm LMArena uses — not average win rate. It accounts for who beat whom (transitive strength) and produces stable Elo even with unbalanced match counts.

- Anonymized blind A/B voting is methodology-critical, not optional polish. Non-anonymized internal evals consistently produce 8-15% rank-shift versus the same eval blinded.

- For a 5-model arena (10 pairwise combinations), 1,500-3,000 total votes typically yield 95% CIs below ±15 Elo on top-3 — sufficient for procurement decisions. Stop voting when CI width stabilizes, not when you hit a fixed number.

- Voter expertise is the single most important variable for vote quality. Domain experts who actually use the model output produce statistically meaningful rankings; generic SME volunteers produce noisy ones.

Public benchmarks fail on private data — and procurement teams that ship LLMs based on the public LMArena rank alone routinely watch their #1 pick fall to #3 or #5 once tested against their actual workload. The fix is not "more benchmarks." The fix is the same methodology LMArena itself uses, adapted to run on your private data.

This page is the procurement-grade decode of how to set up an internal chatbot arena: a private LLM evaluation pipeline that mirrors LMArena's pairwise blind voting plus Bradley-Terry scoring on your enterprise prompts and your real workloads. The output is an Elo-style internal leaderboard that reflects your specific procurement context — not someone else's.

The reference methodology we mirror throughout is published openly by LMArena. The live public leaderboard at lmarena.ai is the cross-reference for vote-pipeline best practices, CI thresholds, and the Bradley-Terry implementation we recommend.

Why an Internal Arena Beats the Public Leaderboard for Procurement

The single most expensive misreading in enterprise LLM procurement: treating the public LMArena rank as a procurement decision rather than a starting shortlist. Three structural reasons the public rank doesn't transfer:

- Prompt distribution mismatch. The public LMArena vote pool skews toward general chat, code, and creative writing. Your enterprise workload likely doesn't. Procurement rank derived from a vote pool that doesn't match your workload is procurement noise.

- Confidence intervals overlap aggressively at the top. Top-3 public Vision and Writing models routinely sit within overlapping 95% CIs — meaning the rank order is partially statistical noise. An internal arena with your specific voter pool produces tighter CIs on the rankings that actually matter.

- Vendor-brand bias. Non-blinded internal evaluations show 8-15% rank-shift versus blinded ones. Engineers favor familiar vendor brands and penalize unfamiliar ones, regardless of output quality. Only an anonymized A/B pipeline removes this contamination.

For the public-leaderboard procurement context that an internal arena should refine, see the live snapshot at Who's #1 on LMArena Right Now? The Live Top-10 Decoded.

The 7-Step Internal Eval Pipeline

Here is the procurement-grade methodology. Apply in order — each step builds on the one before, and skipping any step compromises the statistical defensibility of the final ranking.

Define the Prompt Distribution That Reflects Your Real Workload

Sample 200-500 representative prompts from production logs across your top workload categories — customer support, internal RAG, code review, document extraction, agentic loops. The prompt distribution is the single most important methodological choice you make. It determines what your leaderboard actually measures.

- Sample proportional to production volume, not feature breadth. If 60% of your traffic is customer support, 60% of your arena prompts should be customer support.

- Include 10-15% "edge case" prompts — the queries where models historically failed in your logs. These prompts disproportionately drive vote separation.

- Strip PII before voting begins. Internal arena prompts should be production-realistic but PII-free for compliance.
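The proportional-sampling rule above can be sketched as follows. This is a minimal illustration, assuming the production logs are already PII-stripped and tagged with a workload category; `sample_prompts`, the `category` and `prompt` keys, and the toy traffic split are all hypothetical. The 10-15% edge-case slice is not handled here and would be added separately.

```python
import random
from collections import defaultdict

def sample_prompts(logs, total, seed=0):
    """Sample about `total` prompts with quotas proportional to log volume.

    `logs` is a list of dicts with hypothetical keys "category" and "prompt".
    """
    rng = random.Random(seed)
    by_category = defaultdict(list)
    for row in logs:
        by_category[row["category"]].append(row["prompt"])

    sampled = []
    for category, prompts in by_category.items():
        # Quota mirrors the category's share of production traffic.
        quota = round(total * len(prompts) / len(logs))
        quota = min(quota, len(prompts))
        sampled.extend((category, p) for p in rng.sample(prompts, quota))
    return sampled

# Toy log: 60% customer support, 30% RAG, 10% code review.
logs = (
    [{"category": "support", "prompt": f"support-{i}"} for i in range(600)]
    + [{"category": "rag", "prompt": f"rag-{i}"} for i in range(300)]
    + [{"category": "code_review", "prompt": f"cr-{i}"} for i in range(100)]
)
arena_prompts = sample_prompts(logs, total=200)
```

Because each quota is rounded independently, the final count can differ from `total` by a few prompts when the shares do not divide evenly.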

Select the Model Shortlist (3-7 Candidates Maximum)

Anchor on the public LMArena top-10 plus 1-2 open-weight candidates relevant to your TCO target. Keep the shortlist small. Beyond 7 models, the number of unique pairs, n(n-1)/2, and with it the total vote requirement, grows quadratically:

5 models → 10 unique pairs → ~1,500-3,000 votes total

7 models → 21 unique pairs → ~4,000-6,000 votes total

10 models → 45 unique pairs → ~9,000+ votes total

For most enterprise procurement, 5 models is the sweet spot — broad enough to test trade-offs, narrow enough to finish in 2-3 weeks of voting. For the open-weight candidate slot, see the licensing-and-procurement framework at Open-Source LMArena Rankings: 7 Models Closing the Gap.
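The pair counts above follow from n(n-1)/2. A short sketch to enumerate the matchups for a shortlist; the model names are placeholders, not recommendations.

```python
from itertools import combinations
from math import comb

def unique_pairs(models):
    """Return every unordered pair of models that must be compared."""
    return list(combinations(models, 2))

shortlist = ["model-a", "model-b", "model-c", "model-d", "model-e"]
pairs = unique_pairs(shortlist)

for n in (5, 7, 10):
    print(n, "models ->", comb(n, 2), "unique pairs")
```

For the 5-model case, spreading 1,500-3,000 votes over 10 pairs works out to roughly 150-300 votes per pair.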

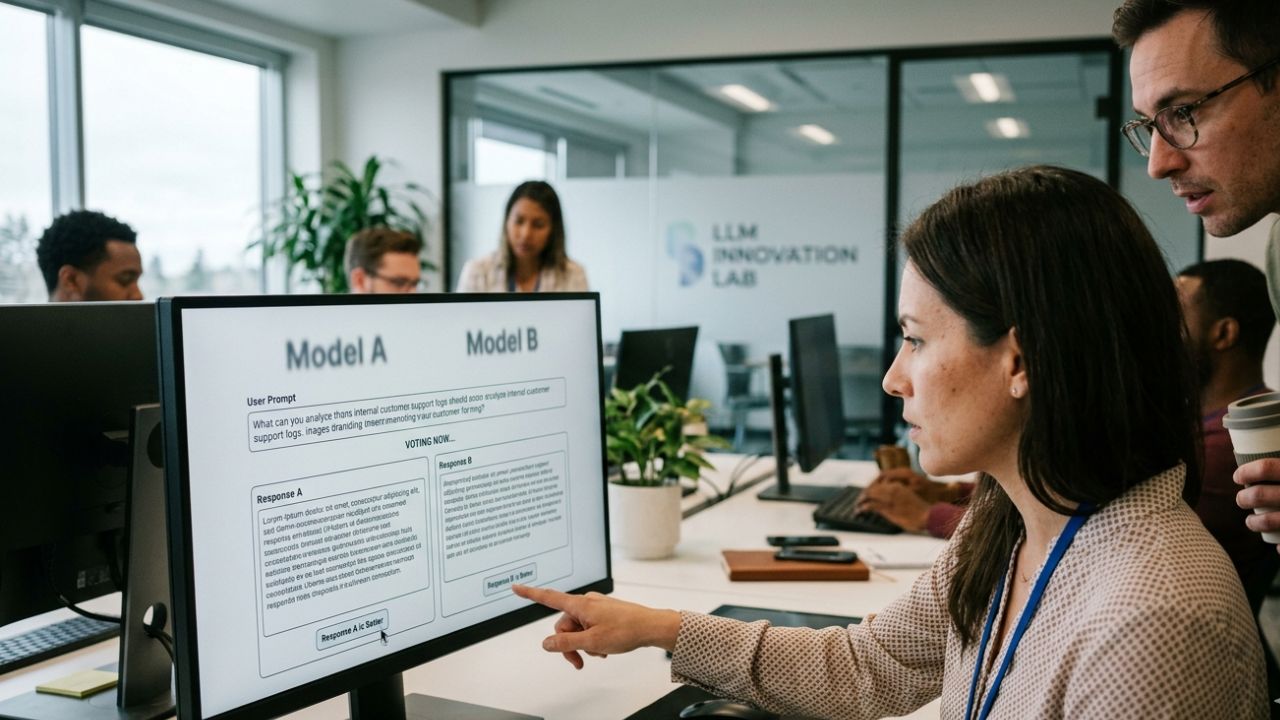

Build the Anonymized Pairwise Voting UI

Two side-by-side response panels labeled A and B with no model attribution visible during voting. Vote options:

- A wins — A is meaningfully better for this prompt

- B wins — B is meaningfully better for this prompt

- Tie — both are roughly equivalent in quality

- Both bad — neither is acceptable (recorded but excluded from Elo computation)

Hide model identity until the vote is recorded. The blind comparison eliminates vendor-brand bias that contaminates non-blinded internal reviews. UI hygiene that matters in practice: identical font, identical formatting, randomized A/B side-assignment per prompt, no telltale stylistic markers (one model's signature em-dash usage, another's preferred bullet style).
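One way to enforce the blind comparison is to keep the side-to-model mapping on the server and send only an opaque match ID to the voting UI. The sketch below assumes that split; `make_blind_match`, `record_vote`, and all field names are hypothetical.

```python
import random
import uuid

VALID_VOTES = {"A", "B", "tie", "both_bad"}

def make_blind_match(prompt_id, model_x, model_y, rng=random):
    """Randomly assign two models to panels A and B.

    Returns (public, secret): `public` is safe to send to the voter UI,
    `secret` holds the model identities and stays server-side.
    """
    sides = [model_x, model_y]
    rng.shuffle(sides)  # randomized side assignment per prompt
    match_id = uuid.uuid4().hex
    public = {"match_id": match_id, "prompt_id": prompt_id, "panels": ["A", "B"]}
    secret = {"match_id": match_id, "A": sides[0], "B": sides[1]}
    return public, secret

def record_vote(secret, voter_id, vote):
    """Join a vote with the hidden identities only after it is cast."""
    if vote not in VALID_VOTES:
        raise ValueError(f"unknown vote: {vote!r}")
    return {
        "match_id": secret["match_id"],
        "voter_id": voter_id,
        "model_a": secret["A"],
        "model_b": secret["B"],
        "vote": vote,
        # "Both bad" is stored but left out of the Elo fit.
        "counts_for_elo": vote != "both_bad",
    }

public, secret = make_blind_match("prompt-017", "model-x", "model-y")
row = record_vote(secret, "voter-03", "both_bad")
```

Stylistic tells inside the responses themselves still need separate handling, for example by normalizing formatting before display.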

Implement Bradley-Terry Scoring (Not Average Win Rate)

Bradley-Terry is the same maximum-likelihood algorithm LMArena uses. It produces an Elo-style score that accounts for who beat whom (transitive strength), not just who won the most votes. Why this matters: under naive average win rate, beating a strong opponent counts the same as beating a weak one. Under Bradley-Terry, beating a strong opponent moves your score more.
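For reference, the standard Bradley-Terry model behind this claim can be written as follows. The 400-point, base-10 scale is the conventional Elo scale and is an assumption here; the symbols p_i (strength), R_i (Elo-style score), w_ij (number of votes in which model i beat model j), and C (the anchoring constant) are notation introduced for this sketch.

```latex
P(i \succ j) = \frac{p_i}{p_i + p_j} = \frac{1}{1 + 10^{(R_j - R_i)/400}},
\qquad R_i = 400 \log_{10} p_i + C

\ell(p) = \sum_{i \neq j} w_{ij} \left[ \ln p_i - \ln (p_i + p_j) \right]
```

The fit chooses the strengths that maximize the log-likelihood. On this scale a 100-point gap corresponds to an expected win probability of about 0.64 for the higher-rated model. Each win contributes through the opponent's strength p_j, which is why a win over a strong opponent moves the fitted score more than a win over a weak one.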

- Use the choix Python library or another open-source Bradley-Terry implementation. Don't roll your own: the maximum-likelihood iteration is subtle and error-prone.

- Compute 95% confidence intervals via bootstrap resampling (1,000 iterations is standard).

- Anchor the scale: set the median model to 1500 Elo. Without an anchor, the absolute scores drift between runs.

- Re-fit after every 100 new votes during active collection — the scores stabilize gradually, and watching them stabilize tells you when to stop voting.
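To make the steps above concrete, here is a dependency-free sketch of what such a library computes: a Bradley-Terry fit using the classic minorization-maximization update, a median anchor at 1500, and percentile bootstrap intervals. It is for illustration only; a production pipeline should use a maintained implementation as recommended above. Counting a tie as half a win for each side and the small `prior` of virtual tied games are simplifying assumptions of this sketch, and all function names and the toy votes are hypothetical.

```python
import math
import random
from statistics import median

def fit_bradley_terry(votes, models, iters=200, prior=0.5):
    """Return Elo-style scores from (model_a, model_b, outcome) votes.

    outcome is "a", "b", or "tie". `prior` adds virtual tied games to
    every pair so strengths stay finite even for a winless model.
    """
    wins = {m: 0.0 for m in models}
    games = {}
    for i, a in enumerate(models):
        for b in models[i + 1:]:
            games[frozenset((a, b))] = prior
            wins[a] += prior / 2
            wins[b] += prior / 2
    for a, b, outcome in votes:
        games[frozenset((a, b))] += 1
        if outcome == "tie":
            wins[a] += 0.5
            wins[b] += 0.5
        else:
            wins[a if outcome == "a" else b] += 1

    p = {m: 1.0 for m in models}
    for _ in range(iters):
        new_p = {}
        for m in models:
            denom = 0.0
            for pair, n in games.items():
                if m in pair:
                    (other,) = pair - {m}
                    denom += n / (p[m] + p[other])
            new_p[m] = wins[m] / denom  # MM update for model m
        scale = len(models) / sum(new_p.values())
        p = {m: v * scale for m, v in new_p.items()}

    raw = {m: 400 * math.log10(p[m]) for m in models}
    shift = 1500 - median(raw.values())  # anchor the median model at 1500
    return {m: raw[m] + shift for m in models}

def bootstrap_ci(votes, models, rounds=1000, seed=0):
    """95% percentile intervals from refitting on resampled votes."""
    rng = random.Random(seed)
    samples = {m: [] for m in models}
    for _ in range(rounds):
        resample = rng.choices(votes, k=len(votes))
        for m, score in fit_bradley_terry(resample, models, iters=50).items():
            samples[m].append(score)
    intervals = {}
    for m, scores in samples.items():
        scores.sort()
        intervals[m] = (scores[int(0.025 * rounds)], scores[int(0.975 * rounds) - 1])
    return intervals

# Toy votes: A usually beats B, B usually beats C, A beats C more often still.
models = ["A", "B", "C"]
votes = (
    [("A", "B", "a")] * 70 + [("A", "B", "b")] * 30
    + [("B", "C", "a")] * 70 + [("B", "C", "b")] * 30
    + [("A", "C", "a")] * 85 + [("A", "C", "b")] * 15
)
scores = fit_bradley_terry(votes, models)
intervals = bootstrap_ci(votes, models)
```

Because every resample is re-anchored to its own median, the interval for the anchor model comes out narrower by construction. Read the intervals as uncertainty relative to the anchor, not as absolute precision.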

Recruit 8-15 Internal Voters from Real Workload Stakeholders

Voters should be the actual users of the model output — engineers for code review, support reps for customer support, analysts for RAG over financial documents. Domain expertise is the single most important variable for vote quality. Generic "AI enthusiast" volunteers or general-purpose SMEs produce statistically noisy rankings.

- Target 8-15 voters per arena. Below 8, individual voter idiosyncrasies dominate. Above 15, recruitment overhead and scheduling friction outweigh the marginal accuracy gain.

- Each voter should commit to 100-200 votes over 2-3 weeks (roughly 10-20 per working day is a sustainable cadence).

- Track per-voter consistency: voters whose votes diverge sharply from the consensus on the same prompts should have their votes weighted lower or excluded.

- Compensate voters' time formally — internal arena participation should be a budgeted line item, not volunteered effort. Volunteered effort produces voter dropout mid-arena.
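The per-voter consistency check can be sketched as each voter's agreement rate with the majority of the other voters on the same comparison. This assumes votes are stored against the winning model's name (or "tie") rather than against side A or B, so that side randomization does not matter; `voter_agreement`, the item IDs, and the voter names are hypothetical.

```python
from collections import Counter, defaultdict

def voter_agreement(votes):
    """Map each voter to their agreement rate with the other voters' majority.

    `votes` is a list of (voter, item_id, label), where item_id identifies
    one prompt plus model pair and label is the winning model or "tie".
    """
    by_item = defaultdict(list)
    for voter, item, label in votes:
        by_item[item].append((voter, label))

    agree, seen = Counter(), Counter()
    for entries in by_item.values():
        for voter, label in entries:
            others = Counter(l for v, l in entries if v != voter)
            if not others:
                continue  # nobody else voted on this item
            top = others.most_common(2)
            if len(top) == 2 and top[0][1] == top[1][1]:
                continue  # no clear consensus among the other voters
            seen[voter] += 1
            agree[voter] += label == top[0][0]
    return {v: agree[v] / seen[v] for v in seen}

# Toy data: three voters agree on every item, one always dissents.
votes = []
for item in range(4):
    votes += [("ana", item, "m1"), ("ben", item, "m1"),
              ("dee", item, "m1"), ("cal", item, "m2")]
rates = voter_agreement(votes)
```

A low rate is a prompt to review that voter's votes, not proof of carelessness. A domain expert can legitimately disagree with the majority.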

Run Until Confidence Intervals Stabilize (Typically 1,500-3,000 Votes)

Don't pre-commit to a fixed vote count. Track the 95% CI width per model, and stop voting when the CI for the top-3 models drops below ±15 Elo points. For a 5-model arena that typically requires 1,500-3,000 total votes over 2-3 weeks of active voting.
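The stopping rule can be expressed as a small check that runs after each re-fit. The sketch assumes the scores and 95% intervals have already been computed elsewhere; `should_stop` and the example numbers are hypothetical.

```python
def should_stop(scores, intervals, top_k=3, max_half_width=15.0):
    """Return (done, half_widths) for the current top-k models.

    `scores` maps model -> Elo; `intervals` maps model -> (low, high).
    """
    top = sorted(scores, key=scores.get, reverse=True)[:top_k]
    half_widths = {m: (intervals[m][1] - intervals[m][0]) / 2 for m in top}
    done = all(w < max_half_width for w in half_widths.values())
    return done, half_widths

# Illustrative snapshot partway through voting.
scores = {"m1": 1542.0, "m2": 1530.0, "m3": 1500.0, "m4": 1471.0, "m5": 1455.0}
intervals = {
    "m1": (1530.0, 1554.0),
    "m2": (1516.0, 1544.0),
    "m3": (1482.0, 1518.0),
    "m4": (1440.0, 1502.0),
    "m5": (1425.0, 1485.0),
}
done, half_widths = should_stop(scores, intervals)
```

In this snapshot the third-ranked model is still at ±18, so voting continues even though the top two are already inside the threshold.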

- Run a methodology sanity check at the halfway point: drop one model and verify rank order is stable. If dropping any single model flips the top-2, you don't have enough votes yet.

- Watch for vote-pool decay — if voter participation drops sharply mid-arena, the late votes are dominated by 2-3 voters and skew the result. Mitigate with weekly re-anchoring of voter participation.

- Check inter-rater agreement (Cohen's kappa) between voter pairs. Below 0.4, your prompt distribution is ambiguous; above 0.75, you may have leaked model identity.
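Cohen's kappa for one voter pair can be computed directly from their labels on the comparisons they both voted on. A minimal sketch with toy labels; the `interpret` helper simply encodes the 0.4 and 0.75 thresholds stated above, and both function names are hypothetical.

```python
from collections import Counter

def cohens_kappa(labels_1, labels_2):
    """Cohen's kappa for two voters' labels on the same items, in order."""
    n = len(labels_1)
    observed = sum(a == b for a, b in zip(labels_1, labels_2)) / n
    c1, c2 = Counter(labels_1), Counter(labels_2)
    # Agreement expected by chance from each voter's label frequencies.
    expected = sum(c1[k] * c2[k] for k in c1) / (n * n)
    if expected == 1:
        return 1.0  # both voters used one identical label throughout
    return (observed - expected) / (1 - expected)

def interpret(kappa):
    if kappa < 0.4:
        return "low agreement: prompts may be ambiguous"
    if kappa > 0.75:
        return "very high agreement: check for leaked model identity"
    return "agreement in the expected range"

voter_1 = ["A", "A", "A", "A", "A", "B", "B", "B", "B", "B"]
voter_2 = ["A", "A", "A", "A", "B", "B", "B", "B", "B", "A"]
kappa = cohens_kappa(voter_1, voter_2)
print(round(kappa, 2), "-", interpret(kappa))
```

Here the voters agree on 8 of 10 items against a chance rate of 0.5, which gives a kappa of about 0.6.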

Publish the Internal Leaderboard with Caveats and Re-Run Quarterly

The published leaderboard should include four mandatory disclosures: Elo scores, 95% CI width per model, prompt distribution summary, and voter pool size + composition. Skip any of those, and the leaderboard becomes politically weaponizable.

- Note explicitly that top-3 models within overlapping CIs are statistically tied — do not pick a single winner from a cluster.

- Re-run quarterly. Vendor model updates, your workload distribution, and voter pool composition all drift. Old assumptions decay quickly on multimodal arenas, slightly slower on text-only.

- Archive the prompt distribution and vote logs for each run. Quarter-over-quarter rank changes are only interpretable if the methodology is held constant.

- Treat the internal arena leaderboard as procurement input, not procurement output. Final model selection still considers latency, cost, licensing, and security review — the arena answers "which is highest-quality on our workload," not "which should we deploy."
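The "statistically tied" disclosure can be generated mechanically rather than judged by eye. The sketch below flags every model whose 95% interval overlaps the leader's; interval overlap is the criterion used in this article and is a conservative heuristic, not a formal significance test. The function name and the numbers are hypothetical.

```python
def tied_with_leader(scores, intervals):
    """Return models, best first, whose interval overlaps the leader's."""
    ranked = sorted(scores, key=scores.get, reverse=True)
    leader_low = intervals[ranked[0]][0]
    return [m for m in ranked if intervals[m][1] >= leader_low]

scores = {"m1": 1540.0, "m2": 1531.0, "m3": 1500.0, "m4": 1460.0}
intervals = {
    "m1": (1528.0, 1552.0),
    "m2": (1519.0, 1543.0),
    "m3": (1489.0, 1511.0),
    "m4": (1447.0, 1473.0),
}
tied = tied_with_leader(scores, intervals)
```

In this example m1 and m2 form the top cluster, so the choice between them falls to latency, cost, licensing, and security review.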

Common Methodological Mistakes That Compromise Internal Arena Validity

The internal arenas we audit fail in five predictable ways. All of them are avoidable with the methodology above:

- Average win rate instead of Bradley-Terry. Produces an unstable rank that flips with each new vote batch. ~8-12% of rankings differ between the two methods on small vote counts.

- No anonymization or partial anonymization. Vendor-brand bias contaminates the result. Non-blinded evaluations show 8-15% rank-shift versus the same eval blinded.

- Generic SME voter pool. Without domain experts, vote quality is too noisy to produce statistically meaningful rankings — even with high vote counts.

- Fixed vote-count target. Stopping at "1,000 votes" because that was the plan, even when CIs are still wide, produces a leaderboard that's wrong with high confidence.

- No re-baseline cadence. A leaderboard run once at deployment becomes stale within 90 days. Without a quarterly re-run, procurement decisions are anchored to data that no longer reflects vendor capability.

For the model-selection deep-dive on the 3-way comparison (Claude vs GPT-5.2 vs Grok 4.20) that an internal arena typically refines, see Grok 4.20 vs Claude vs GPT-5.2 on LMArena: Coding Verdict.

Sprint Planning for Internal Arena Operations

Engineering teams shipping internal arenas under Agile/Scrum frameworks routinely under-budget two specific work streams. Concrete planning recommendations:

- Allocate dedicated capacity for prompt curation (Step 1). This is not a 30-minute task — budget 1-2 days of senior engineering time. The prompt distribution determines everything downstream.

- Treat 95% CI width as the acceptance criterion for arena completion, not vote count. A regression in tracked CI width should block the sprint, the same as a code regression.

- Run a dedicated load-testing sprint for the voting UI itself if the voter pool is large. A buggy or slow vote UI corrupts the data.

- Re-baseline arena Elo every quarter. Add a recurring sprint capacity allocation. Vendor updates ship monthly; your assumptions decay quarterly.

- Track voter participation as a backlog metric alongside development velocity. Voter pool decay invalidates the arena.

For the procurement context on how to weight the resulting Elo against latency and cost in regulated B2B environments, see Grok 4.20 B2B Audit: Why The Elo Score Is a Trojan Horse.

The Bottom Line — Internal Arena as Procurement Input, Not Output

An internal chatbot arena is the most defensible procurement signal you can generate for an enterprise LLM decision. It is not, by itself, the procurement decision.

The procurement-grade read for AI platform teams in 2026:

- Treat the public LMArena rank as a starting shortlist, not a final answer. Run an internal arena before signing a procurement contract.

- Apply the 7-step methodology rigorously. Bradley-Terry scoring, anonymized blind voting, domain-expert voter pool, CI-stabilization stopping criterion. Each step is non-optional.

- Publish with caveats. Top-3 within overlapping CIs is a statistical tie. Final selection considers latency, cost, licensing, security, and the arena Elo together.

- Re-run quarterly. Vendor updates, workload drift, and voter pool composition all change. Old leaderboards decay fast.

Frequently Asked Questions (FAQ)

An internal chatbot arena is a private LLM evaluation pipeline that mirrors LMArena methodology — pairwise blind voting plus Bradley-Terry scoring — but runs on your enterprise data and your real workloads. The output is an Elo-style leaderboard that reflects your specific procurement context, not a public benchmark.

The public LMArena prompt distribution skews toward general chat. Your enterprise workload likely doesn't. Models that rank #1 on LMArena routinely fall to #3-#5 on internal arenas tested with code-review, RAG, document-extraction, or customer-support prompts. The public leaderboard is a starting shortlist, not a procurement decision.

For a 5-model arena (10 pairwise combinations), 1,500-3,000 total votes typically yield 95% confidence intervals below ±15 Elo points on the top-3 — sufficient for procurement decisions. For 7+ models, the vote requirement scales quadratically. Stop voting when the CI width stabilizes, not when you hit a fixed number.

Bradley-Terry is a maximum-likelihood algorithm that converts pairwise win/loss data into an Elo-style strength score. Unlike average win rate, it accounts for who beat whom — beating a strong opponent counts more than beating a weak one. LMArena uses it because it handles unbalanced match counts and produces transitive rankings.

The actual end-users of the model output. For code-review pipelines, software engineers. For RAG over financial documents, financial analysts. For customer support, support representatives. Domain expertise is the single most important variable for vote quality. Generic "AI enthusiasts" or volunteer SMEs produce statistically noisy rankings.

End-to-end timeline: roughly 3-5 weeks for a 5-model, 8-15-voter arena with 1,500-3,000 total votes. Setup (prompt curation, UI build, voter recruitment) takes 1 week. Active voting takes 2-3 weeks at a sustainable cadence (100-200 votes per voter in total, roughly 10-20 per working day). Analysis and publication take 2-3 days.

Yes. Non-anonymized internal evaluations consistently produce an 8-15% rank-shift versus the same eval blinded. Voters favor familiar vendor brands and penalize unfamiliar ones, regardless of output quality. The anonymized A/B panel pattern (no model name visible until after the vote) is methodology-critical, not optional polish.

Yes — and it should. Treat arena Elo as an acceptance criterion for model-swap decisions. Add sprint capacity for vote facilitation when launching new model evaluations. Track arena CI width as a backlog metric. Re-run the arena every quarter or whenever a frontier model ships a major version. Old assumptions decay quickly.

Three models, six voters, 600-900 total votes, run over 2 weeks. That's the minimum for procurement-defensible Elo with reasonable CIs. Below that, CI overlap makes rank order statistically meaningless. The smallest setup still requires Bradley-Terry scoring (not average win rate) and blind anonymization — those are not optional.

Same methodology with two adjustments: include image, document, or audio inputs in the prompt distribution; and increase voter pool size by 30-50% because multimodal preference voting has higher inter-rater variance than text-only. Latency-sensitive workflows should also track p95 latency alongside Elo as a secondary metric.

Sources & References

- LMArena (official) — Live LLM leaderboards and the canonical Bradley-Terry methodology reference.

- LMArena Leaderboard Changelog — Vote-pipeline methodology updates and CI threshold conventions.

- Chiang et al. (2024). "Chatbot Arena: An Open Platform for Evaluating LLMs by Human Preference." arXiv.

- choix — Open-source Bradley-Terry maximum-likelihood Python library.

- arena-ai-leaderboards JSON Feed — Public LMArena data for cross-reference baselining.

- Bradley-Terry Model — Reference algorithm derivation and bootstrap CI methodology.

- LMSYS Org — Original methodology research and community resources.