AI Safety Guardrails: 5 Steps to Stop Enterprise Leaks

- Audit Shadow AI: Map all unauthorized LLM usage across the network.

- Deploy an AI Gateway: Route all AI traffic through a centralized, monitored choke point.

- Implement Data Loss Prevention (DLP): Mask PII and proprietary code before it hits external models.

- Enforce Role-Based Access Control (RBAC): Limit model access based on employee clearance.

- Establish Continuous Red Teaming: Regularly attack your own systems to find prompt injection vulnerabilities.

Unregulated AI is a massive corporate risk. Your employees are already bypassing your basic AI filters to get work done, exposing your proprietary data. Moving Beyond the Bypass: The Enterprise Guide to AI Safety and Guardrails is vital.

This guide provides the definitive blueprint for Chief Information Security Officers (CISOs) and IT leaders to lock down generative AI deployments. We will move past superficial API wrappers and implement hard, architectural safeguards that protect intellectual property without strangling employee productivity.

Stop the leaks by implementing an enterprise-grade AI safety framework that actually works.

The Silent Crisis: The Corporate "Bypass" Epidemic

"Shadow AI" isn't a buzzword; it's your engineers bypassing restricted models to ship code faster. When internal systems are too slow or heavily restricted, high-performing teams will inevitably find workarounds.

"Jailbreaking" tools is common. Employees use personal accounts or unvetted third-party wrappers to process sensitive corporate data, completely outside the view of the IT department. Understand the risk of "bypassing" AI safety in corporate environments before your data is compromised.

The risk of "bypassing" AI safety in corporate environments is not just a theoretical concern; it is a direct pipeline to intellectual property theft and regulatory disaster. When a developer pastes a block of proprietary source code into a public LLM to debug an error, that code is absorbed into the model's training data.

Industry Warning: The False Sense of Security

Purchasing an "Enterprise" license for a popular LLM does not automatically grant you immunity from data leaks. If your endpoint security cannot detect and block unauthorized prompt injections or external model queries, your perimeter is already breached.

The Information Gain: The Illusion of the API Wrapper

What most organizations miss is the fundamental difference between a user interface (UI) wrapper and genuine algorithmic security. Many companies believe they are secure because they built a custom internal chat UI that connects to an LLM via API.

This is an illusion. An interface wrapper does not prevent prompt injection. It does not stop a malicious actor (or a careless employee) from tricking the underlying model into revealing its system prompt or bypassing its ethical constraints.

To achieve true security, guardrails must be placed at the infrastructure level, orchestrating the inputs and outputs before they ever reach the large language model. This requires intercepting the payload, sanitizing the vector embeddings, and running secondary "evaluator" models to check for compliance in real-time.

Navigating the Regulatory Minefield

Regulatory fines are scaling. Governments worldwide are moving aggressively to regulate generative AI, and ignorance of these frameworks is no longer a viable legal defense. If your compliance strategy treats the 2026 AI Act like just another GDPR update, you are preparing to fail.

Uncover the stark differences in AI Privacy Compliance: GDPR vs AI Act 2026 that will dictate enterprise software this year.

Mastering AI Privacy Compliance: GDPR vs AI Act 2026 requires a fundamental shift in how organizations handle data lineage. Unlike traditional software, where data is simply stored, AI models memorize and synthesize data.

If a customer exercises their "Right to be Forgotten," untangling their specific data points from a pre-trained neural network is cryptographically and mathematically complex. Enterprise leaders must implement systems that enforce data masking at the edge, ensuring PII never enters the model's context window.

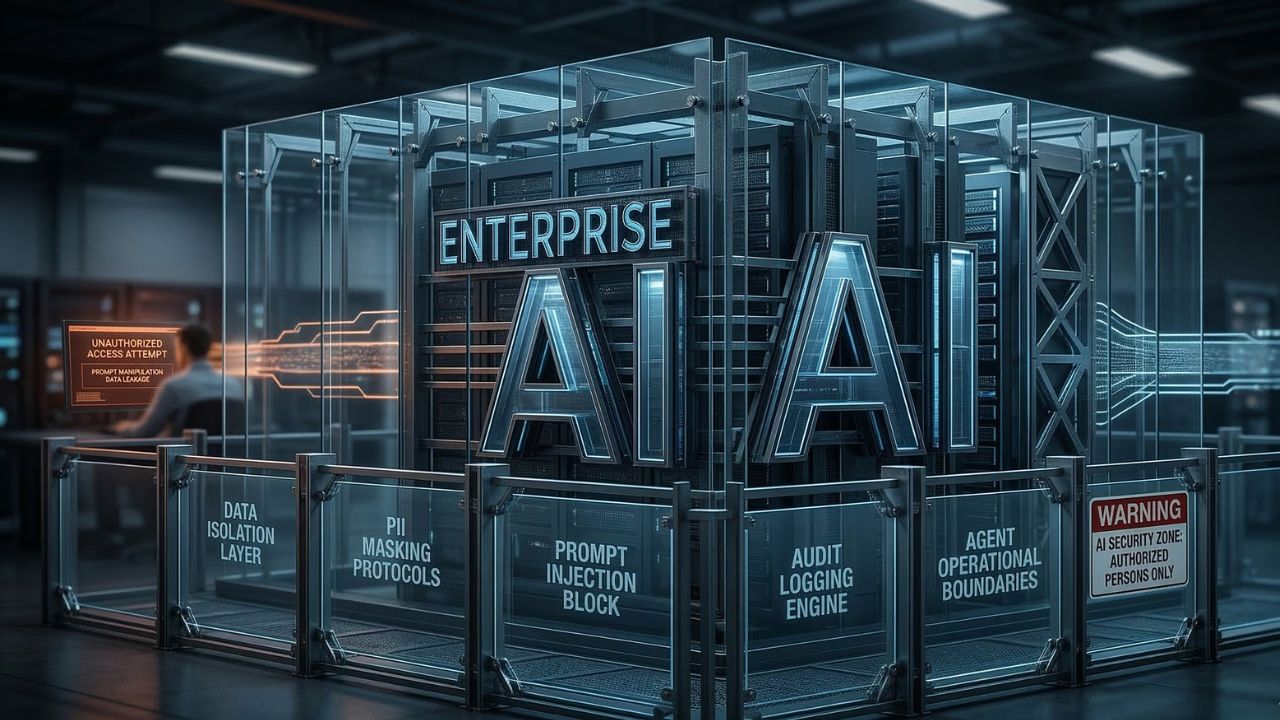

The 5-Step Enterprise AI Safety Framework

To transition from vulnerable to verified, organizations must implement a rigorous, five-step architectural framework.

Step 1: Discover & Map Shadow AI

You cannot protect what you cannot see. The first step is deploying network-level monitoring to detect unauthorized AI API calls. This involves analyzing DNS logs and endpoint telemetry to identify where employees are interacting with unvetted AI tools.

Once mapped, these shadow workflows must be rerouted to approved, secure internal AI gateways.

Step 2: Establish Agentic Constraints

Rogue agents destroy brand trust. As organizations move from simple chatbots to autonomous agents that execute tasks, the risk multiplies. Relying on default LLM safety settings for your autonomous agents is corporate negligence.

Knowing How to Build Ethical Guardrails for GenAI Agents prevents costly PR disasters.

How to Build Ethical Guardrails for GenAI Agents involves hard-coding operational boundaries. An agent tasked with cloud provisioning must have a hard-coded budgetary limit and require human-in-the-loop (HITL) sign-off for destructive actions like deleting databases.

Step 3: Implement an AI Trust Layer (Gateway)

Every enterprise needs a centralized AI Trust Layer. This acts as a reverse proxy between your employees and the LLMs (both cloud-based and local). This gateway performs three critical functions:

- Input Sanitization: Stripping out PII, API keys, and proprietary code before the prompt leaves your network.

- Prompt Injection Detection: Using specialized classifiers to identify malicious prompts designed to hijack the model.

- Output Verification: Scanning the LLM's response for hallucinations, toxic content, or unauthorized data disclosure before presenting it to the user.

Step 4: Continuous Red Teaming

Static security tests are useless against dynamic, generative models. Enterprise security teams must adopt continuous red teaming. This involves deploying automated adversarial models that constantly bombard your AI systems with thousands of edge-case prompts, attempting to force a failure or a jailbreak.

By automating this adversarial testing, organizations can patch vulnerabilities in their system prompts and guardrail logic before a real attacker exploits them. You should continually refine your approach by studying AI Privacy Compliance: GDPR vs AI Act 2026.

Step 5: Enforce Zero-Trust Architecture for LLMs

Zero-Trust principles must apply to generative AI. Do not assume that because a prompt originates from an internal employee, it is safe. Verify every interaction.

Integrate your AI gateway with your existing SSO (Single Sign-On) and RBAC systems. If a junior marketing associate queries the internal HR RAG (Retrieval-Augmented Generation) system, the guardrails must ensure the LLM only accesses documents that the associate has the clearance to read.

5 Steps to Stop Enterprise Leaks dictates that data authorization must happen at the retrieval phase, not the generation phase.

The Cost of Inaction

Failing to implement these guardrails is no longer just an IT issue; it is a board-level liability. The organizations that thrive in the coming decade will not be the ones that ban AI, but the ones that build the safest, most resilient infrastructure to harness it.

Stop relying on vendor promises and start engineering verifiable trust.

Frequently Asked Questions (FAQ)

Enterprise AI safety guardrails are architectural and programmatic controls placed between users and AI models. They actively monitor, filter, and sanitize inputs and outputs to prevent data leaks, prompt injections, hallucinations, and unauthorized access to corporate systems.

Bypassing safety filters, often called "Shadow AI," exposes proprietary source code, trade secrets, and customer PII to public models. This leads to intellectual property theft, severe regulatory compliance violations, and significant financial penalties under data protection laws.

The 2026 EU AI Act classifies AI systems by risk. Enterprises using high-risk LLMs must enforce strict data governance, algorithmic transparency, and human oversight. Non-compliance results in massive fines, fundamentally changing how businesses train and deploy internal models.

Prevent data leaks by deploying a centralized AI Gateway that acts as a reverse proxy. This layer uses Data Loss Prevention (DLP) to automatically mask PII and proprietary code in real-time before the prompt is sent to the LLM.

Auditing an AI agent requires logging every API call, decision tree, and action taken by the agent. Organizations must use specialized evaluation models to continuously test the agent against ethical frameworks and hard-coded operational boundaries to ensure compliance.