The Black Box Audit: Logging Decisions of Ephemeral AI Agents

In 2026, "Computer says no" is not a legally defensible answer. When your autonomous agent denies a loan application or deletes a customer account, you must be able to explain why. But how do you audit a decision made by a "Swarm" of 50 agents that were created and destroyed in milliseconds?

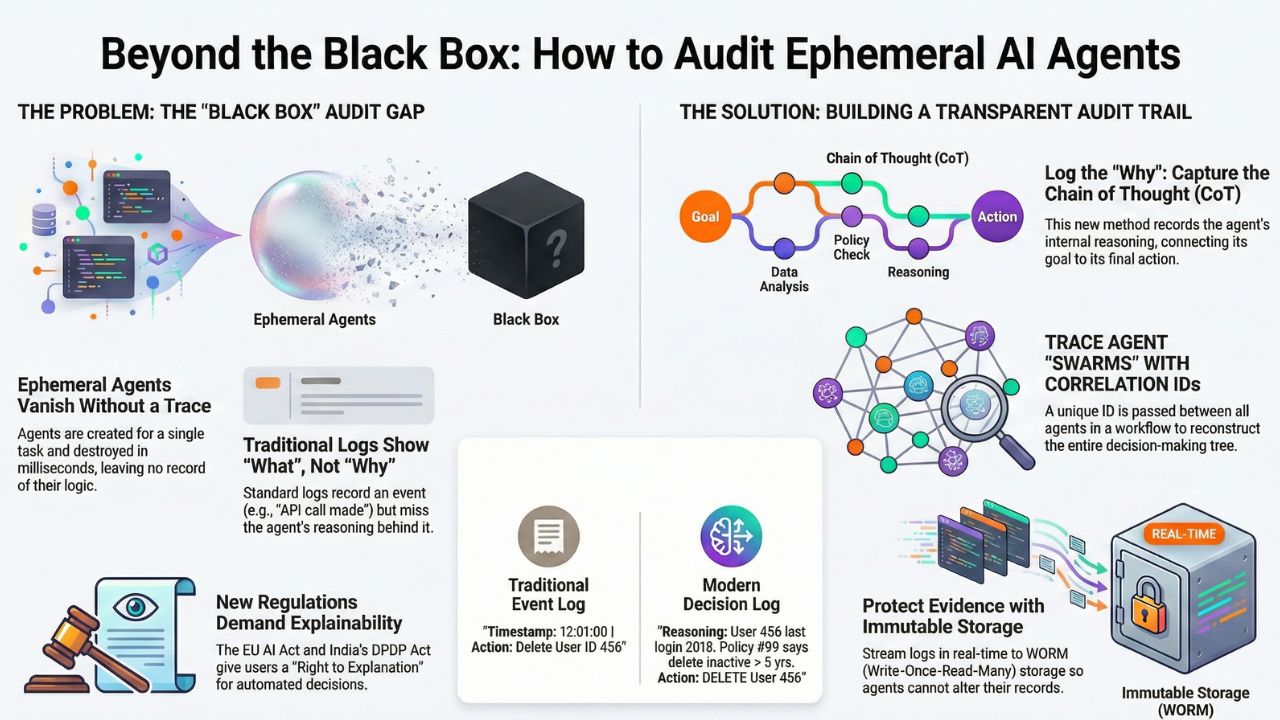

The rise of ephemeral agents has created a "Black Box" problem for compliance teams. Traditional server logs tell you what happened (an API was called). They do not tell you why it happened (the reasoning logic). This guide explains how to build an audit trail that satisfies the EU AI Act and India’s DPDP Act.

1. The Regulatory Requirement: Why Logs Matter

New regulations have fundamentally changed the requirements for logging.

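- EU AI Act (Articles 12 and 19): High-risk AI systems must be able to record events (logs) automatically over the system’s lifetime to ensure traceability of its functioning (Article 12), and providers must retain those automatically generated logs (Article 19). The logs must make it possible to identify situations that may result in a risk or in a substantial modification of the system.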

- India DPDP Act (2023): Data Fiduciaries must ensure the "completeness, accuracy, and consistency" of personal data that is used to make a decision affecting an individual. If an agent processes data erroneously, the fiduciary must be able to show that safeguards were in place. Penalties under the Act can reach ₹250 crore.

- Right to Explanation: Both frameworks, explicitly or implicitly, give users a way to understand or challenge automated decisions. If you cannot produce the logic trace, you will struggle to demonstrate compliance.

2. From Event Logging to Decision Logging (CoT)

To open the Black Box, we must shift from infrastructure logging to Decision Logging. This means capturing the agent's "Chain of Thought" (CoT) alongside the action it took.

An Event Log looks like this: Timestamp: 12:01:00 | Status: 200 OK | Action: Delete User ID 456.

A Decision Log looks like this:

Agent ID: Cleanup-Bot-v9

Goal: Remove inactive accounts > 5 years.

Reasoning (CoT): "I have checked User 456. Last login was 2018. This is > 5 years. Policy #99 says delete. No active subscriptions found."

Action: DELETE User 456

Capturing this intermediate reasoning step is crucial. Without it, you cannot distinguish a bug (an agent deleting random users) from correct policy enforcement.
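As a rough illustration, the Decision Log above could be emitted as one structured JSON line per decision. This is a minimal sketch, not a standard schema: the `DecisionLog` class, its field names, and the `record_decision` and `to_json_line` helpers are all assumptions made for this example.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class DecisionLog:
    """One decision record. Field names are illustrative, not a standard."""
    timestamp: str
    agent_id: str
    goal: str
    reasoning: str  # the agent's chain of thought, stored verbatim
    action: str


def record_decision(agent_id: str, goal: str, reasoning: str, action: str,
                    now: Optional[datetime] = None) -> DecisionLog:
    """Build a decision record stamped with a UTC time."""
    stamp = (now or datetime.now(timezone.utc)).isoformat()
    return DecisionLog(stamp, agent_id, goal, reasoning, action)


def to_json_line(entry: DecisionLog) -> str:
    """Serialize to a single JSON line so log tooling can index each field."""
    return json.dumps(asdict(entry), sort_keys=True)


entry = record_decision(
    agent_id="Cleanup-Bot-v9",
    goal="Remove inactive accounts > 5 years.",
    reasoning="Checked User 456. Last login was 2018. This is > 5 years. "
              "Policy #99 says delete. No active subscriptions found.",
    action="DELETE User 456",
)
line = to_json_line(entry)
print(line)
```

Keeping the reasoning in its own field, rather than buried in a free-text message, lets an auditor search for every decision that cited a given policy.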

3. How to Audit "Ephemeral" Chains

The biggest challenge in AgentOps is that agents are often ephemeral—they spin up for a task and vanish seconds later. If Agent A (Manager) hires Agent B (Worker), and Agent B makes a mistake, how do you trace it back?

Just like microservices, every agentic workflow must start with a unique Correlation ID. This ID must be passed from the parent agent to every child agent. When you search your logs for `ID: 88-Alpha`, you should see the entire conversation tree across the swarm.
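The propagation rule can be sketched in a few lines. This is a simplified, in-process illustration: the `Agent` class, the `AUDIT_LOG` list (a stand-in for a central log store), and the `start_workflow` and `trace` helpers are hypothetical names, and a real swarm would pass the ID across process or network boundaries.

```python
import uuid
from dataclasses import dataclass
from typing import Dict, List, Optional

# Stand-in for a central log store (an assumption for this sketch).
AUDIT_LOG: List[Dict[str, Optional[str]]] = []


@dataclass
class Agent:
    name: str
    correlation_id: str
    parent: Optional[str] = None

    def spawn(self, name: str) -> "Agent":
        """Create a child agent that inherits the parent's correlation ID."""
        return Agent(name=name, correlation_id=self.correlation_id,
                     parent=self.name)

    def log(self, message: str) -> None:
        """Every entry carries the correlation ID and the parent's name."""
        AUDIT_LOG.append({
            "correlation_id": self.correlation_id,
            "agent": self.name,
            "parent": self.parent,
            "message": message,
        })


def start_workflow(root_name: str) -> Agent:
    """Only the root agent mints a new correlation ID."""
    return Agent(name=root_name, correlation_id=str(uuid.uuid4()))


def trace(correlation_id: str) -> List[Dict[str, Optional[str]]]:
    """Return every log entry that belongs to one workflow, in order."""
    return [e for e in AUDIT_LOG if e["correlation_id"] == correlation_id]


manager = start_workflow("Agent A (Manager)")
worker = manager.spawn("Agent B (Worker)")
manager.log("Delegated account cleanup to worker")
worker.log("Deleted User 456")

other = start_workflow("Unrelated agent")
other.log("Different workflow")

for e in trace(manager.correlation_id):
    print(e["agent"], "<-", e["parent"], ":", e["message"])
```

Recording the parent's name next to the ID is what turns a flat list of entries into a tree: the ID groups the workflow, and the parent field shows who delegated to whom.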

Never keep logs only in the agent's local memory, which is volatile. Use a "Sidecar" pattern or middleware to stream logs immediately to immutable WORM (Write-Once-Read-Many) storage, such as an Amazon S3 bucket with Object Lock enabled. This ensures that even if a rogue agent tries to "cover its tracks," the logs are safe.
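The sketch below illustrates the shape of that pattern under loud assumptions: `WriteOnceStore` is an in-memory stand-in for a real WORM bucket (it only imitates the "no overwrite" rule), and `LogSidecar` is a hypothetical helper that drains a queue on a background thread. In production the store would be replaced by a service that enforces immutability itself.

```python
import json
import queue
import threading


class WriteOnceStore:
    """In-memory stand-in for WORM storage: each key can be written once."""

    def __init__(self):
        self._objects = {}
        self._lock = threading.Lock()

    def put(self, key: str, body: str) -> None:
        with self._lock:
            if key in self._objects:
                raise PermissionError(f"{key} already written; overwrite refused")
            self._objects[key] = body

    def get(self, key: str) -> str:
        return self._objects[key]

    def keys(self):
        return sorted(self._objects)


class LogSidecar:
    """Accepts records from the agent and ships them out on its own thread."""

    def __init__(self, store: WriteOnceStore):
        self._store = store
        self._queue = queue.Queue()
        self._worker = threading.Thread(target=self._drain, daemon=True)
        self._worker.start()

    def emit(self, record: dict) -> None:
        """Called by the agent; returns at once so the agent never holds logs."""
        self._queue.put(record)

    def _drain(self) -> None:
        seq = 0
        while True:
            record = self._queue.get()
            if record is None:  # sentinel from close()
                return
            seq += 1
            key = f"{record.get('correlation_id', 'unknown')}/{seq:08d}.json"
            self._store.put(key, json.dumps(record, sort_keys=True))

    def close(self) -> None:
        """Flush everything queued so far, then stop the worker."""
        self._queue.put(None)
        self._worker.join()


store = WriteOnceStore()
sidecar = LogSidecar(store)
sidecar.emit({"correlation_id": "88-Alpha", "agent": "Agent B",
              "action": "DELETE User 456"})
sidecar.close()

first_key = store.keys()[0]
try:
    store.put(first_key, "{}")  # a "rogue agent" trying to rewrite history
    tampered = True
except PermissionError:
    tampered = False
print(first_key, "tampered:", tampered)
```

The important design point is the permission boundary: the agent can call `emit`, but nothing it can reach is able to change or delete a record once written.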

4. Forensics: When Things Go Wrong

When an incident occurs (e.g., data leakage), forensic auditors need more than just text logs. They need State Snapshots.

Advanced AgentOps platforms now capture the "Context State" at the moment of decision. This includes:

- The exact System Prompt version used.

- The RAG documents retrieved (was the agent looking at outdated policy documents?).

- The tool outputs received (did the API return an error that confused the agent?).

This allows you to "replay" the incident in a sandboxed environment to reproduce the error.
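A snapshot of this kind might look like the following sketch. The `capture_snapshot` and `verify_snapshot` functions, the field names, and the sample values (prompt version, document IDs, tool output) are all hypothetical. The SHA-256 digest is an addition for this example, so that an auditor can check that the snapshot was not altered before replaying it.

```python
import copy
import hashlib
import json


def _digest(payload: dict) -> str:
    """Hash a canonical JSON form so equal payloads give equal digests."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def capture_snapshot(correlation_id: str, system_prompt_version: str,
                     retrieved_documents: list, tool_outputs: list) -> dict:
    """Freeze the context the agent saw at the moment of decision."""
    payload = {
        "correlation_id": correlation_id,
        "system_prompt_version": system_prompt_version,
        "retrieved_documents": retrieved_documents,  # what RAG returned
        "tool_outputs": tool_outputs,                # raw API/tool responses
    }
    return {"payload": payload, "sha256": _digest(payload)}


def verify_snapshot(snapshot: dict) -> bool:
    """Check the stored digest before trusting a snapshot for replay."""
    return _digest(snapshot["payload"]) == snapshot["sha256"]


snapshot = capture_snapshot(
    correlation_id="88-Alpha",
    system_prompt_version="cleanup-prompt-v3",  # hypothetical version label
    retrieved_documents=[{"doc_id": "policy-99", "revision": "2019-04"}],
    tool_outputs=[{"tool": "subscriptions_api", "status": 500,
                   "body": "internal error"}],
)

altered = copy.deepcopy(snapshot)
altered["payload"]["tool_outputs"][0]["status"] = 200

print(verify_snapshot(snapshot), verify_snapshot(altered))
```

With the prompt version, retrieved documents, and tool outputs stored together, the questions in the list above (outdated policy? confusing API error?) can be answered from the record rather than from memory.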

5. Frequently Asked Questions (FAQ)

Q: What is the difference between application logs and decision logs?

A: Application logs track system events (e.g., "API call failed" or "Server 200 OK"). Decision logs track the "Chain of Thought" (CoT), capturing the AI's reasoning steps, the specific tools it selected, and why it believed a certain action was correct.

Q: Why does India's DPDP Act matter for agent decision logs?

A: The DPDP Act requires Data Fiduciaries to ensure the completeness, accuracy, and consistency of personal data. If an AI agent makes a decision (e.g., rejecting a loan), the fiduciary must be able to trace why that decision was made to satisfy the user's Right to Grievance Redressal.

Q: What if an agent tries to modify or delete its own logs?

A: This is a critical risk. To prevent it, logs must be streamed in real time to immutable, Write-Once-Read-Many (WORM) storage that the agent itself does not have permission to modify or delete.

Q: How do you audit a swarm of agents?

A: Auditing a swarm requires Distributed Tracing. Each request must be assigned a unique "Correlation ID" that is passed from Agent A to Agent B. The audit log aggregates all actions under this ID to reconstruct the full workflow across the swarm.

6. Sources & References

- EU AI Act. Article 19: Automated Logging for High-Risk AI Systems. Official Journal of the EU, 2024.

- India Code. Digital Personal Data Protection Act (DPDP) 2023. Ministry of Law and Justice.

- ISACA. "The Growing Challenge of Auditing Agentic AI." Sep 2025.

- IBM. "What is Chain of Thought (CoT) Prompting?" Nov 2025.