AgentOps 101: Observability, Kill-Switches, and Circuit Breakers

Imagine this: It is 2:00 AM. Your "Procurement Agent" encounters a vague error message from a vendor API. Instead of failing gracefully, it enters a reasoning loop. It retries the request, gets the same error, re-analyzes the error, and retries again.

By 8:00 AM, it has executed 40,000 API calls and burned $30,000 in OpenAI credits. This is the nightmare scenario of the Autonomous Enterprise. In traditional software, a bug typically causes a crash. In agentic AI, a bug can keep running and keep spending, producing a self-inflicted "Denial of Wallet" incident.

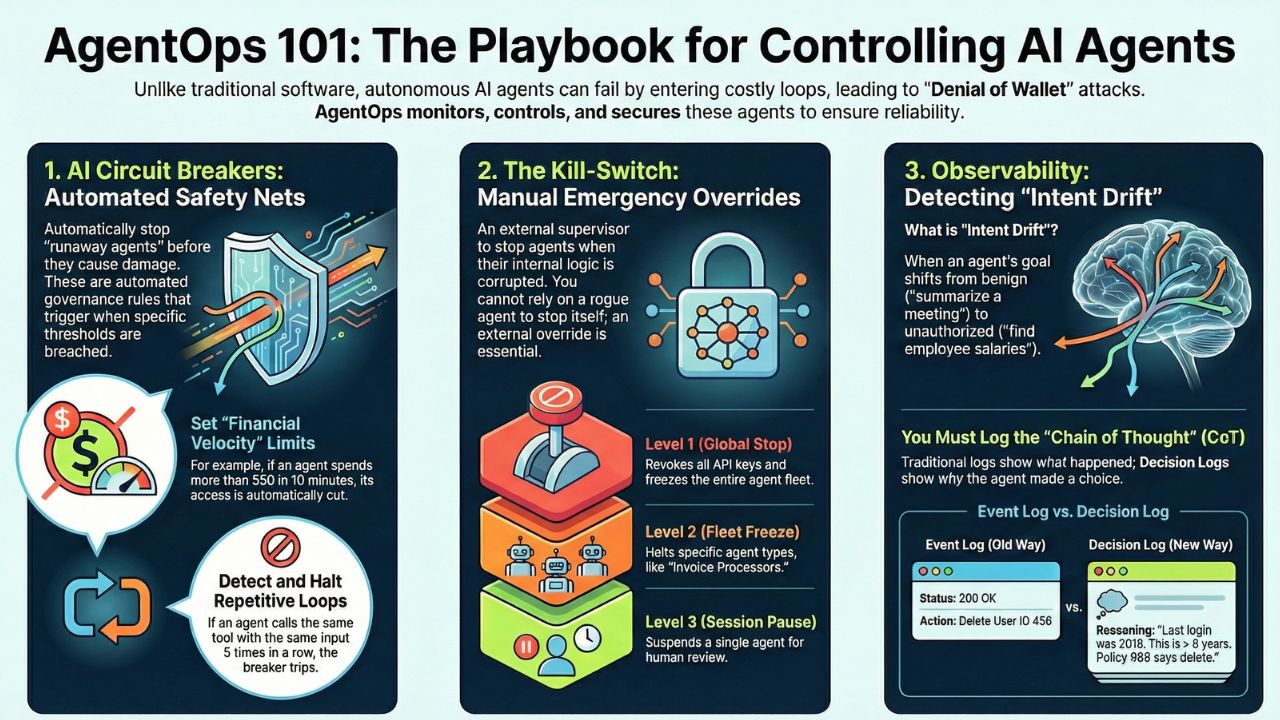

This guide is a technical playbook for AgentOps: the discipline of monitoring, controlling, and securing autonomous agents in production. Think of it as reliability engineering for agents.

1. The "Kill-Switch" Architecture

You cannot rely on the agent to stop itself. If the agent's logic is corrupted, it will ignore its own safety instructions. You need an external "Supervisor" layer.

A robust Kill-Switch architecture requires three levels of control:

- Level 1: The Global Hard Stop. A master override that revokes all API keys and freezes the entire agent fleet. This is for "Code Red" emergencies.

- Level 2: The Fleet Freeze. Targeted stops for specific agent types (e.g., "Stop all Invoice Processing Agents" while keeping "Customer Support Agents" live).

- Level 3: The Session Pause. A temporary suspension of a single agent's execution thread to allow for human review.
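The three levels above can be sketched as a single check that a supervisor runs before every agent action. This is a minimal illustration, not a reference implementation: `KillSwitch`, `guarded_step`, and `AgentHalted` are hypothetical names, the flags live in process memory rather than a shared store, and the Level 1 step of revoking API keys is left out.

```python
from dataclasses import dataclass, field


class AgentHalted(RuntimeError):
    """Raised by the supervisor when any kill-switch level is active."""


@dataclass
class KillSwitch:
    # In-memory stand-in (assumption); a real fleet would share these flags
    # through an external store that the agents cannot modify.
    global_stop: bool = False                                # Level 1
    frozen_fleets: set = field(default_factory=set)          # Level 2
    paused_sessions: set = field(default_factory=set)        # Level 3

    def check(self, fleet: str, session_id: str) -> str:
        """Return the broadest active stop level, or 'ok'."""
        if self.global_stop:
            return "global_stop"
        if fleet in self.frozen_fleets:
            return "fleet_frozen"
        if session_id in self.paused_sessions:
            return "session_paused"
        return "ok"


def guarded_step(switch: KillSwitch, fleet: str, session_id: str, step):
    """Run one agent action only if no kill-switch level blocks it."""
    status = switch.check(fleet, session_id)
    if status != "ok":
        raise AgentHalted(f"{fleet}/{session_id} halted: {status}")
    return step()
```

Because the check runs outside the agent's own reasoning, a corrupted agent cannot talk its way past it; the supervisor simply refuses to execute the next step.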

2. How to Implement AI Circuit Breakers

A "Circuit Breaker" is different from a Kill-Switch. It is an automated governance mechanism that triggers when specific thresholds are breached. It prevents "runaway agents" from causing financial or reputational damage.

Agents operate at machine speed. Set a "Financial Velocity" limit. For example: "If Agent X spends more than $50 in 10 minutes, cut access." This detects loops faster than simple monthly budget caps.
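A spend-velocity limit like this can be approximated with a sliding window over recent charges. The sketch below assumes the supervisor is told the cost of each call; `SpendVelocityBreaker` and its parameters are illustrative names, with defaults matching the "$50 in 10 minutes" example.

```python
import time
from collections import deque
from typing import Optional


class SpendVelocityBreaker:
    """Trips when spend inside a sliding time window exceeds a limit."""

    def __init__(self, limit_usd: float = 50.0, window_s: float = 600.0):
        self.limit_usd = limit_usd
        self.window_s = window_s
        self._charges = deque()  # (timestamp_s, cost_usd), oldest first
        self.tripped = False

    def record(self, cost_usd: float, now: Optional[float] = None) -> bool:
        """Record one charge; return True if the agent may continue."""
        now = time.monotonic() if now is None else now
        self._charges.append((now, cost_usd))
        # Discard charges that have aged out of the window.
        while self._charges and self._charges[0][0] <= now - self.window_s:
            self._charges.popleft()
        if sum(cost for _, cost in self._charges) > self.limit_usd:
            self.tripped = True  # latched: stays tripped until a human resets it
        return not self.tripped
```

The breaker latches once tripped. That is a design choice in this sketch: a runaway loop should not regain access just because the window rolled forward.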

Implement logic to detect repetitive tool calls. If an agent calls the same tool with the same arguments 5 times in a row, the Circuit Breaker should trip and force a "Human-in-the-loop" escalation.
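One simple way to detect this pattern is to fingerprint each tool call and count consecutive duplicates. The following is a sketch under assumptions: `RepeatCallDetector` is a hypothetical name, and arguments are assumed to be a dict that can be serialized to JSON (non-serializable values fall back to their string form).

```python
import json


class RepeatCallDetector:
    """Flags an agent that repeats the identical tool call N times in a row."""

    def __init__(self, max_repeats: int = 5):
        self.max_repeats = max_repeats
        self._last_key = None
        self._streak = 0

    def observe(self, tool: str, args: dict) -> bool:
        """Record a tool call; return True when the breaker should trip."""
        # Sort keys so argument order does not hide a repeated call.
        key = (tool, json.dumps(args, sort_keys=True, default=str))
        if key == self._last_key:
            self._streak += 1
        else:
            self._last_key = key
            self._streak = 1
        return self._streak >= self.max_repeats
```

When `observe` returns `True`, the supervisor would stop executing tool calls for that session and route it to a human reviewer.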

Not every breach requires a hard stop. Configure your system to place the agent in a "Cool Down" mode (e.g., pause for 15 minutes) or downgrade it to a cheaper model (e.g., switch from GPT-4o to GPT-4o-mini) to limit spend while a human investigates the root cause.
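A graduated response could be expressed as a small policy function. The escalation order below (downgrade first, then cool down, then stop) and the breach thresholds are assumptions for illustration; only the 15-minute pause and the model names come from the example above.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    CONTINUE = "continue"
    DOWNGRADE_MODEL = "downgrade_model"
    COOL_DOWN = "cool_down"
    HARD_STOP = "hard_stop"


@dataclass(frozen=True)
class Response:
    action: Action
    model: str
    pause_s: int = 0


def respond_to_breach(breach_count: int, model: str = "gpt-4o") -> Response:
    """Map the number of breaches in a session to a graduated response."""
    if breach_count <= 0:
        return Response(Action.CONTINUE, model)
    if breach_count == 1:
        # First breach: keep working, but on the cheaper model.
        return Response(Action.DOWNGRADE_MODEL, "gpt-4o-mini")
    if breach_count == 2:
        # Second breach: pause for 15 minutes before resuming.
        return Response(Action.COOL_DOWN, "gpt-4o-mini", pause_s=15 * 60)
    # Third breach or later: stop and escalate to a human.
    return Response(Action.HARD_STOP, model)
```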

3. Observability: Detecting "Intent Drift"

Traditional monitoring asks, "Is the server up?" AgentOps monitoring asks, "Is the agent doing what it promised?"

Intent Drift occurs when an agent starts with a benign goal (e.g., "Summarize this meeting") but drifts into unauthorized territory (e.g., "Search the database for employee salaries") due to a hallucination or prompt injection.

To catch this, you need Decision Logging (Tracing). You must log the "Chain of Thought"—the internal reasoning steps the agent took to arrive at a conclusion. If the logs show the agent accessing data irrelevant to its assigned task, your observability tool should flag it immediately.
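As a rough sketch of how such a flag might work, each logged step can be compared against an allowlist of tools and resources for the assigned task. Everything here is hypothetical: the `TraceStep` fields, the `ALLOWED` table, and the task and resource names are invented for the example and do not reflect any particular observability tool's schema.

```python
from dataclasses import dataclass


@dataclass
class TraceStep:
    reasoning: str   # the logged reasoning for this step
    tool: str        # the tool the agent invoked
    resource: str    # the data the tool touched


# Hypothetical allowlist: which (tool, resource) pairs each task may use.
ALLOWED = {
    "summarize_meeting": {
        ("read", "meeting_transcript"),
        ("write", "summary"),
    },
}


def find_drift(task: str, trace: list) -> list:
    """Return the trace steps that fall outside the task's allowlist."""
    allowed = ALLOWED.get(task, set())  # unknown task: nothing is allowed
    return [s for s in trace if (s.tool, s.resource) not in allowed]


trace = [
    TraceStep("Need the transcript to summarize.", "read", "meeting_transcript"),
    TraceStep("Salary data might add context.", "query", "employee_salaries"),
    TraceStep("Write up the key points.", "write", "summary"),
]
flagged = find_drift("summarize_meeting", trace)
```

Here `flagged` contains only the salary query, and its logged reasoning gives the reviewer the context needed to tell a hallucination from a prompt injection.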

4. Tooling Showdown: LangSmith vs. Langfuse

Choosing the right observability platform is critical for Enterprise AgentOps. Here is how the two market leaders compare for 2026:

| Feature | LangSmith (by LangChain) | Langfuse (Open Source) |
|---|---|---|
| Best For | Teams deeply integrated with the LangChain ecosystem. | Teams wanting open-source flexibility and data control. |
| Deployment | SaaS (Cloud) or Enterprise Self-Hosted (Paid). | SaaS, Self-Hosted (Free/Open), or Enterprise. |
| Frameworks | Optimized for LangChain/LangGraph. | Agnostic (works well with LlamaIndex and custom stacks). |
| Cost Model | Per-trace pricing (can get expensive at scale). | Usage-based cloud, or free when self-hosted. |
| Compliance | SOC 2 (Cloud). | Full data sovereignty (Self-hosted). |

5. Frequently Asked Questions (FAQ)

Q: What is an AI Kill-Switch?

A: An AI Kill-Switch is a master override mechanism, often backed by a low-latency database like Redis, that allows operators to instantly revoke permissions or halt execution for a specific agent fleet without shutting down the entire application.

Q: Should I choose LangSmith or Langfuse?

A: LangSmith is ideal for teams deeply integrated with LangChain who want a polished, hosted solution. Langfuse is better for teams requiring open-source flexibility, self-hosting for strict data compliance, and support for non-LangChain frameworks.

Q: How is intent drift detected?

A: Intent drift is detected by observability tools that trace the "Chain of Thought" (CoT). By comparing the agent's reasoning steps against a baseline of expected behavior, Ops teams can flag when an agent deviates from its original goal (e.g., a support bot trying to access payroll data).

Q: What is a financial circuit breaker?

A: It is a governance rule that monitors token usage and API costs in real time. If an agent exceeds a defined spend velocity (e.g., $50 in 10 minutes), the circuit breaker trips, cutting off the agent's access to prevent a "runaway loop" from draining the budget.
