Managing Vibe Coders: The Framework You're Missing

- Defining the Persona: "Vibe coders" are developers who orchestrate AI models to generate complex logic based on intuition and prompts, often bypassing deep algorithmic understanding.

- The Leadership Gap: Traditional agile methodologies and QA pipelines are breaking down because they were built for manual syntax typing, not rapid, AI-generated code blocks.

- The Missing Guardrails: Managing vibe coders successfully requires a structural shift from tracking "lines of code" to mandating "architectural peer reviews."

- Sprint Recalibration: You must fundamentally alter your Sprint Planning, sizing tickets based on AI orchestration effort rather than manual human effort.

- Outcome Over Output: Your metrics must pivot. High-performing AI-native teams measure cycle time reduction and bug introduction rates, not commit frequency.

The Reality of AI-Native Engineering

The software engineering landscape is fracturing at a fundamental level. As your organization accelerates into The Agentic Coding Shift, engineering managers are colliding with an entirely new developer archetype.

The traditional programmer, who painstakingly types syntax line by line, is rapidly being replaced. In their place is a new breed of developer relying on advanced AI agents to generate entire microservices in seconds.

This shift brings unprecedented speed, but it also introduces serious architectural vulnerabilities. If your QA process feels like it is constantly on fire, you are likely struggling to manage vibe coders.

This article outlines the exact leadership framework necessary to harness the speed of AI-assisted developers while rigorously protecting your enterprise codebase. We will break down how to restructure your agile workflows, adapt your sprint planning, and enforce the guardrails your team is currently missing.

What Exactly is a "Vibe Coder"?

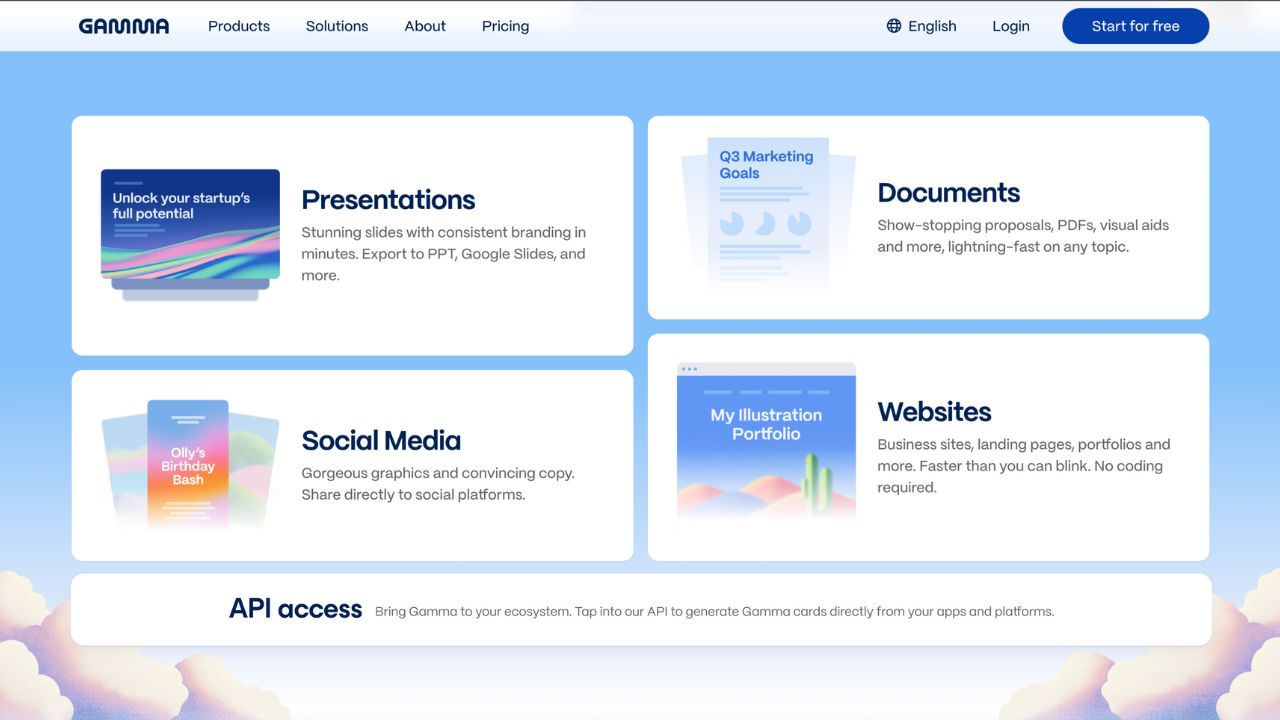

To govern this new era, you must first understand the psychology of the developer you are leading. The term "vibe coder" refers to engineers who rely heavily on AI tools like GitHub Copilot, Cursor, or Devin to write their logic.

Core characteristics include:

- Prompt-First Workflow: They write natural language prompts before they write actual code.

- Intuitive Acceptance: They often accept large blocks of AI-generated code if it "looks right" or passes a basic localized unit test, without deeply inspecting the underlying algorithm.

- High Velocity, Low Context: They move incredibly fast but frequently lack the deep, systemic context of the legacy architecture they are modifying.

These developers are not necessarily lazy; they are simply leveraging the tools at their disposal to maximize output. However, without a dedicated leadership framework, this "vibe-based" acceptance of AI output rapidly accumulates invisible technical debt.

The Hidden Risks of Vibe Coding in Enterprise

When developers stop reading code and start exclusively prompting it, the integrity of your product is threatened. Traditional management assumes that if a developer authored the code, they understand how it works.

This is no longer a safe assumption. The most common systemic failures include:

- The Hallucination Loop: An AI agent introduces a subtle logical error. The developer, assuming the AI is correct, builds on top of the error, embedding it deep into the application architecture.

- Security Blind Spots: AI models frequently suggest outdated or vulnerable library dependencies. Vibe coders rarely audit these dependencies manually.

- The "Spaghetti" Effect: Because the AI generates isolated blocks of code, the overall system architecture becomes disjointed, lacking a cohesive, human-led design pattern.

To combat these risks, leaders must stop fighting the AI and start managing the human orchestrator.

Managing "Vibe Coders" Without Killing Velocity

The worst mistake a technical leader can make is banning AI tools. Banning them destroys your team's competitive advantage. Instead, you must implement the missing framework. Managing vibe coders requires a deliberate evolution of your engineering culture, carried out in three phases.

Phase 1: Enforce "The Documentation Discipline"

You cannot manage an AI-generated outcome if you do not understand the human intent behind it. Before a developer is allowed to prompt an AI agent for a feature, they must write down the exact specification.

The Documentation Discipline requires:

- A clear statement of the business logic.

- A mapped outline of the database schemas that will be touched.

- A predefined list of edge cases that the AI must handle.

By forcing the developer to document the architecture first, you shift their cognitive load. They stop being manual typists and start acting like entry-level system architects.
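As a rough illustration, the three required sections could be captured in a small structure that a team checks before any prompting begins. This is only a sketch: the `FeatureSpec` class, its field names, and the `ready_to_prompt` gate are hypothetical names invented here, not part of any tool mentioned in this article.

```python
from dataclasses import dataclass, field


@dataclass
class FeatureSpec:
    """Hypothetical pre-prompt specification; all names are illustrative."""

    business_logic: str = ""
    schemas_touched: list[str] = field(default_factory=list)
    edge_cases: list[str] = field(default_factory=list)

    def missing_sections(self) -> list[str]:
        """Return the names of required sections that are still empty."""
        missing = []
        if not self.business_logic.strip():
            missing.append("business_logic")
        if not self.schemas_touched:
            missing.append("schemas_touched")
        if not self.edge_cases:
            missing.append("edge_cases")
        return missing

    def ready_to_prompt(self) -> bool:
        """True only when every required section has been filled in."""
        return not self.missing_sections()
```

A spec that states the business logic and the schemas but lists no edge cases would report `["edge_cases"]` from `missing_sections()`, which gives a reviewer a concrete reason to send it back before any code is generated.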

Phase 2: Mandatory Explainability in Code Reviews

If a developer uses AI to generate a pull request, they must be able to explain every single line of that PR during the review phase. This is the ultimate guardrail against sloppy vibe coding.

If a senior engineer asks, "What is the time complexity of this sorting algorithm?" and the junior developer responds, "I don't know, the AI generated it," the pull request must be rejected immediately.

This friction pushes developers to read and internalize the code they are shipping.

Phase 3: AI-Assisted Quality Assurance

You must fight fire with fire. You cannot rely on manual human QA to test code that was generated at superhuman speeds. To maintain stability, you must integrate AI directly into your testing pipeline.

Automated AI QA should focus on:

- Static Audits: Scanning for known vulnerabilities and deprecated libraries before the code reaches a human reviewer.

- Automated Test Generation: Forcing the AI to write comprehensive unit and integration tests alongside the feature code.

- Dependency Mapping: Utilizing agents to verify that a change in one microservice does not cascade into a failure elsewhere in the repository.
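The dependency-scanning step above can be approximated with a very small pre-review check. The sketch below assumes dependencies are listed in a pip-style requirements file and compares them against a deny-list. The deny-list contents and the package names `oldcrypto` and `legacy-http` are placeholders made up for illustration; a real pipeline would more likely pull this data from a vulnerability feed or an internal policy file.

```python
import re

# Hypothetical deny-list; the package names and reasons are placeholders.
DEPRECATED = {
    "oldcrypto": "unmaintained",
    "legacy-http": "replaced by internal client",
}

# Matches the package name at the start of a requirements line.
_NAME = re.compile(r"^\s*([A-Za-z0-9][A-Za-z0-9._-]*)")


def flag_dependencies(
    requirements_text: str, deny_list: dict[str, str] = DEPRECATED
) -> list[tuple[str, str]]:
    """Return (package, reason) for each requirement naming a denied package."""
    findings = []
    for line in requirements_text.splitlines():
        line = line.split("#", 1)[0]  # drop trailing comments
        match = _NAME.match(line)
        if not match:
            continue  # blank lines and option lines such as "-r other.txt"
        # Simplified name normalization: lowercase, underscores to hyphens.
        name = match.group(1).lower().replace("_", "-")
        if name in deny_list:
            findings.append((name, deny_list[name]))
    return findings
```

Run against the changed requirements file in CI, a non-empty result could block the pull request before a human reviewer spends time on it. This only catches names on the list; it does not replace a real vulnerability scanner.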

Redefining Agile: Sprint Planning for AI Agents

Traditional Scrum and Agile frameworks are buckling under the weight of agentic coding. When you size a ticket using "Story Points," you are generally estimating the human effort required.

How do you size a ticket when an AI agent can complete it in four seconds?

Sizing Tickets for Orchestration

Your Sprint Planning must evolve. You are no longer estimating typing time; you are estimating orchestration and review time. A ticket that involves simple boilerplate generation should be sized aggressively low.

Conversely, a ticket that requires integrating an AI output into a fragile, undocumented legacy system must be sized higher. The complexity now lies in the integration and the QA, not the initial creation.
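One way to make this sizing rule explicit is a small scoring function that starts low for boilerplate and adds points for integration risk. Everything in the sketch below is an assumption for illustration: the `Ticket` fields, the `orchestration_points` name, and the weights are invented here and would need calibrating against a team's own history.

```python
from dataclasses import dataclass


@dataclass
class Ticket:
    """Hypothetical ticket attributes relevant to orchestration sizing."""

    title: str
    is_boilerplate: bool           # simple generated scaffolding
    touches_legacy: bool           # output must integrate with older code
    legacy_documented: bool = True


def orchestration_points(ticket: Ticket) -> int:
    """Illustrative sizing: base effort plus integration and review risk.

    The weights (1, 2, 2, 3) are assumptions for this sketch, not a standard.
    """
    points = 1 if ticket.is_boilerplate else 2
    if ticket.touches_legacy:
        points += 2  # integration and QA effort
        if not ticket.legacy_documented:
            points += 3  # reviewer must reverse-engineer the context
    return points
```

With these example weights, a generated CRUD scaffold scores 1, while a non-boilerplate change wired into an undocumented legacy module scores 7, reflecting that the effort sits in integration and review rather than in generation.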

Redefining the "Definition of Done"

Your team's Definition of Done (DoD) must be updated immediately to reflect AI usage. A task is no longer "done" just because the AI's code compiles and passes local tests.

A modern Definition of Done must include:

- AI generation prompts documented in the ticket.

- Human-verified security audits of all suggested dependencies.

- Architectural sign-off ensuring the AI did not violate core enterprise design patterns.

By embedding these requirements into your Sprint Planning, you regain control over your team's velocity and ensure you are maximizing The ROI of Agentic Coding in Enterprise Teams.
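The three Definition of Done items listed above lend themselves to a simple automated gate. The following is a minimal sketch that assumes ticket state is available as a dictionary of booleans; the keys and the `unmet_dod` function are hypothetical and do not correspond to any particular issue tracker's API.

```python
# Hypothetical mapping from ticket flags to the DoD items they represent.
DOD_ITEMS = {
    "prompts_documented": "AI generation prompts documented in the ticket",
    "dependencies_audited": "Human-verified security audit of suggested dependencies",
    "architecture_signed_off": "Architectural sign-off recorded",
}


def unmet_dod(ticket_state: dict[str, bool]) -> list[str]:
    """Return descriptions of DoD items that are missing or not yet true."""
    return [
        description
        for key, description in DOD_ITEMS.items()
        if not ticket_state.get(key, False)
    ]
```

A ticket would count as done only when `unmet_dod` returns an empty list; otherwise the returned descriptions tell the developer exactly what is outstanding.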

Metrics: Tracking the Unseen

If you are evaluating your vibe coders based on commit frequency or lines of code, you are incentivizing catastrophic architectural bloat. AI makes it incredibly easy to generate massive pull requests that provide zero actual business value.

Focus on Cycle Time and Rejection Rates

You must pivot your engineering KPIs to measure actual outcomes. Monitor the "PR Rejection Rate." If a developer is continuously submitting massive AI-generated PRs that get rejected by senior engineers for architectural flaws, their vibe coding is costing the company money.

The ultimate metrics for AI-native teams include:

- Time-to-Merge: How quickly does an AI-generated feature pass human review and enter production?

- Production Bug Delta: Are we seeing an increase in subtle, logical bugs reaching the end-user?

- Compute Cost per Feature: Are developers burning through expensive AI usage credits, such as agent compute units (ACUs), by forcing the AI into endless, unguided loops?
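Two of these metrics, PR rejection rate and time-to-merge, can be computed from basic pull request records. The sketch below assumes a hypothetical `PullRequest` record with open and merge timestamps and a rejection flag, and it uses the merge timestamp as a stand-in for "entering production." How that data is exported from your code host is outside its scope.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median
from typing import Optional


@dataclass
class PullRequest:
    """Hypothetical PR record; field names are illustrative."""

    author: str
    opened_at: datetime
    merged_at: Optional[datetime] = None  # None if never merged
    rejected: bool = False


def rejection_rate(prs: list[PullRequest]) -> float:
    """Share of PRs that reviewers rejected (0.0 when there are no PRs)."""
    if not prs:
        return 0.0
    return sum(pr.rejected for pr in prs) / len(prs)


def median_hours_to_merge(prs: list[PullRequest]) -> Optional[float]:
    """Median open-to-merge time in hours, or None if nothing was merged."""
    hours = [
        (pr.merged_at - pr.opened_at).total_seconds() / 3600
        for pr in prs
        if pr.merged_at is not None
    ]
    return median(hours) if hours else None
```

The median is used rather than the mean so that a single long-stalled PR does not dominate the figure. Tracking both numbers per team over time, rather than per commit, keeps the focus on outcomes instead of output volume.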

Conclusion

The era of manual syntax generation is ending, and the era of AI orchestration has arrived. Managing vibe coders is one of the most critical competencies an engineering leader can develop in 2026.

If you allow developers to blindly trust AI outputs without strict, human-in-the-loop guardrails, your enterprise architecture will collapse under the weight of unseen technical debt. Implement the Documentation Discipline, mandate PR explainability, and completely overhaul your Sprint Planning to account for AI velocity.

By applying this missing framework, you can safely harness the unprecedented speed of agentic coding while maintaining the rigorous stability your enterprise demands.

Frequently Asked Questions (FAQ)

What is a vibe coder?

A vibe coder is an engineer who relies heavily on AI tools to generate logic and syntax, often accepting the output based on intuition or basic local testing rather than a deep, rigorous understanding of the underlying algorithms.

What are the main risks of vibe coding in an enterprise codebase?

The primary risks include the rapid accumulation of technical debt, architectural "spaghetti" code, and severe security vulnerabilities. Because developers are not deeply inspecting the AI-generated logic, subtle hallucinations and outdated dependencies easily bypass traditional manual review.

How can engineering leaders control the quality of AI-generated code?

You must enforce strict "explainability rules" during code reviews, requiring the human developer to explain every AI-generated line. Furthermore, teams must utilize AI-assisted testing pipelines to automatically generate unit tests and audit dependencies before manual human review begins.

Which metrics should leaders track for AI-native teams?

Leaders must abandon "lines of code" and commit volume as performance metrics. Instead, track PR rejection rates, cycle time from prompt to production merge, and the delta in post-release production bugs to measure true business value and code stability.

Does AI-assisted coding make traditional QA obsolete?

No, it actually makes traditional, rigorous QA more critical than ever. While AI will automate basic syntax checking and unit test generation, human QA engineers are still needed to validate complex business logic, system architecture, and holistic user experiences that AI models cannot fully grasp.