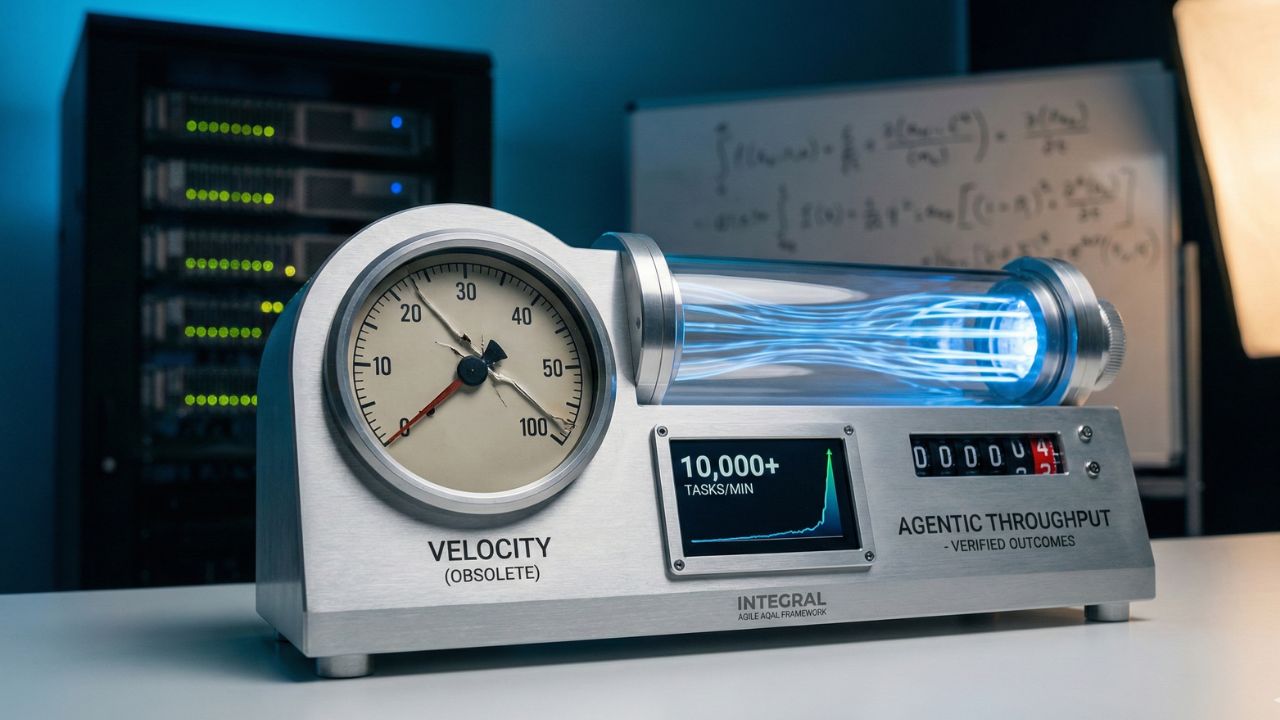

Measuring Agentic Throughput: Why "Velocity" is a Lying Metric in 2026

- Traditional Agile "Velocity" (story points per sprint) is meaningless when AI agents do the work.

- An AI agent can complete 100 story points in minutes, breaking the fundamental link between effort and time.

- The new metric for 2026 is "Agentic Throughput": the volume of high-quality, verified outcomes delivered by AI.

- You must now measure the cost and quality of output, not just the speed of delivery.

- The role of the Scrum Master shifts from tracking velocity to managing agent efficiency and error rates.

For decades, "Velocity" has been the holy grail of Agile measurement. It was a reliable proxy for team productivity, forecasting how many story points human developers could deliver in a sprint.

But in the era of autonomous AI agents, traditional velocity is obsolete. When a bot can generate a thousand lines of code or run ten thousand test cases in the time it takes a human to grab a coffee, the old charts don't just lie; they become dangerously misleading.

This deep dive is part of our extensive guide on the Agentic SDLC and the Integral Agile AQAL Framework, which explores how to redefine performance in an AI-native engineering world.

The Death of the Story Point

Story points were designed to estimate human effort. They accounted for complexity, uncertainty, and the cognitive load of a developer. AI agents, however, do not experience cognitive load.

An agentic system doesn't care if a task is a "1" or an "8" on the Fibonacci scale; it processes the prompt and executes the code. If you continue to use story points, your burndown charts will show a vertical drop at the start of every sprint, providing zero predictive value for stakeholders.

Defining the New Math: Agentic Throughput

Instead of effort, we must measure outcome. Measuring agentic throughput involves tracking the volume of "verified units of value" delivered by your agent fleets. This includes:

- Prompt-to-PR Velocity: The raw time taken from an initial requirement prompt to a generated Pull Request.

- Verification Success Rate: How many agent-generated outputs pass automated testing and security gates on the first attempt.

- Review Debt: The human time required to audit and approve agentic code—the most critical bottleneck in 2026.
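As a rough illustration, the three measures above could be computed from a log of agent tasks. This is a minimal sketch, not a standard implementation: the `AgentTask` record, its field names, and the sample values are all hypothetical and would need to be mapped onto whatever your own tooling records.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class AgentTask:
    """One agent work item. All field names are hypothetical."""
    prompted_at: datetime        # when the requirement prompt was issued
    pr_opened_at: datetime       # when the agent opened its pull request
    passed_first_attempt: bool   # cleared tests and security gates on try one
    review_minutes: float        # human time spent auditing and approving


def prompt_to_pr_seconds(tasks):
    """Mean elapsed seconds from prompt to pull request."""
    total = sum((t.pr_opened_at - t.prompted_at).total_seconds() for t in tasks)
    return total / len(tasks)


def verification_success_rate(tasks):
    """Fraction of tasks that passed every gate on the first attempt."""
    return sum(t.passed_first_attempt for t in tasks) / len(tasks)


def review_debt_minutes(tasks):
    """Total human review time accumulated across the tasks."""
    return sum(t.review_minutes for t in tasks)


# Made-up sample data, for illustration only.
start = datetime(2026, 1, 5, 9, 0)
tasks = [
    AgentTask(start, start + timedelta(minutes=4), True, 15.0),
    AgentTask(start, start + timedelta(minutes=6), False, 90.0),
    AgentTask(start, start + timedelta(minutes=5), True, 20.0),
    AgentTask(start, start + timedelta(minutes=5), True, 25.0),
]

print(prompt_to_pr_seconds(tasks))       # 300.0
print(verification_success_rate(tasks))  # 0.75
print(review_debt_minutes(tasks))        # 150.0
```

In this made-up sample, the single task that failed its gates accounts for 90 of the 150 review minutes, which is the kind of pattern Review Debt is meant to surface.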

The Human-Agent Handoff Efficiency

The total throughput of your SDLC is no longer limited by how fast the AI can code, but by how efficiently humans and agents interact. This is where human-agent handoff efficiency becomes the deciding factor in project success.

High handoff efficiency means the AI understands the context provided by the human "AI Wrangler" perfectly, and the human can review the AI's output without spending hours deciphering "black box" logic.

Implementing the Agent Efficiency Score (AES)

In 2026, leading organizations are adopting the Agent Efficiency Score. This metric balances the speed of the agent against the "Token Cost" and the "Review Tax."

If an agent generates code in seconds but requires three hours of a senior developer's time to fix its hallucinations, its AES is effectively zero. Truly measuring agentic throughput requires a holistic view of the entire lifecycle, including the human intervention required to make the output production-ready.
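The article does not give a formula for the AES, so the sketch below is one possible interpretation rather than an established definition. It assumes the score is the share of a human-only baseline cost that the agent actually saves once agent time, the "Review Tax," and the "Token Cost" are counted. The function name, its parameters, the choice to price agent wall-clock time at the human hourly rate, and all sample numbers are assumptions.

```python
def agent_efficiency_score(baseline_human_hours, agent_hours, review_hours,
                           token_cost_usd, hourly_rate_usd):
    """Hypothetical AES: share of the human-only cost the agent saved.

    Returns a value from 0.0 (no net saving) to 1.0 (free and instant).
    """
    if baseline_human_hours <= 0 or hourly_rate_usd <= 0:
        raise ValueError("baseline hours and hourly rate must be positive")
    baseline_cost = baseline_human_hours * hourly_rate_usd
    # Assumption: agent wall-clock time and human review time are both
    # priced at the same hourly rate, then the token bill is added on top.
    agent_path_cost = (agent_hours + review_hours) * hourly_rate_usd + token_cost_usd
    saving = baseline_cost - agent_path_cost
    # Clamp so a net loss scores 0.0 rather than going negative.
    return max(0.0, min(1.0, saving / baseline_cost))


# Code generated in seconds, but three hours of senior-developer fixes.
fast_but_wrong = agent_efficiency_score(3.0, 0.01, 3.0, 2.0, 100.0)

# Same task, but the output only needs a half-hour review.
clean = agent_efficiency_score(3.0, 0.01, 0.5, 2.0, 100.0)

print(fast_but_wrong)   # 0.0
print(round(clean, 3))  # 0.823
```

Under these assumptions the first case reproduces the scenario above: the review time cancels the entire saving, so the score bottoms out at zero no matter how fast the generation step was.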

Frequently Asked Questions (FAQ)

What is "Agentic Throughput" and how is it calculated?

Agentic Throughput measures the volume of completed, high-quality outcomes delivered by AI agents. It is often calculated as the number of verified, successful task completions divided by the compute cost or time utilized.
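A minimal sketch of that calculation follows, assuming you already have a count of verified task completions and either a compute bill or an elapsed time for the same period. The function name and the sample figures are illustrative only.

```python
def agentic_throughput(verified_completions, resource_used):
    """Verified completions per unit of resource (dollars, hours, and so on)."""
    if resource_used <= 0:
        raise ValueError("resource_used must be positive")
    return verified_completions / resource_used


# Hypothetical period: 42 verified tasks, 84 USD of compute, 8 hours elapsed.
per_dollar = agentic_throughput(42, 84.0)
per_hour = agentic_throughput(42, 8.0)

print(per_dollar)  # 0.5
print(per_hour)    # 5.25
```

Only verified completions belong in the numerator; counting raw agent output would bring back the same distortion as story points.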

How do you measure the productivity of an AI agent?

You measure its output quality (error rate, hallucination frequency), its speed (tasks per minute), and its cost-efficiency (token usage per successful outcome). You do not measure it with human story points.

What replaces story points in AI software development?

Story points are replaced by outcome-based metrics like "Verified Units Delivered" or "Automated Tasks Completed." The focus shifts from measuring the estimated effort to measuring the actual, tangible output of the autonomous system.

Conclusion

The transition is jarring but necessary. By clinging to outdated metrics like velocity, you risk blinding yourself to the true capabilities—and costs—of your AI workforce.

By successfully measuring agentic throughput, you gain the clarity needed to optimize your autonomous fleets. Stop measuring how fast the wheels are spinning, and start measuring how much ground you are actually covering.