AI Fiscal Risk Management: Protecting Your Reserves from Hallucinations and Fines

- Mitigate severe financial exposure: Implement rigorous AI fiscal risk management to protect your balance sheet from unpredictable digital liabilities.

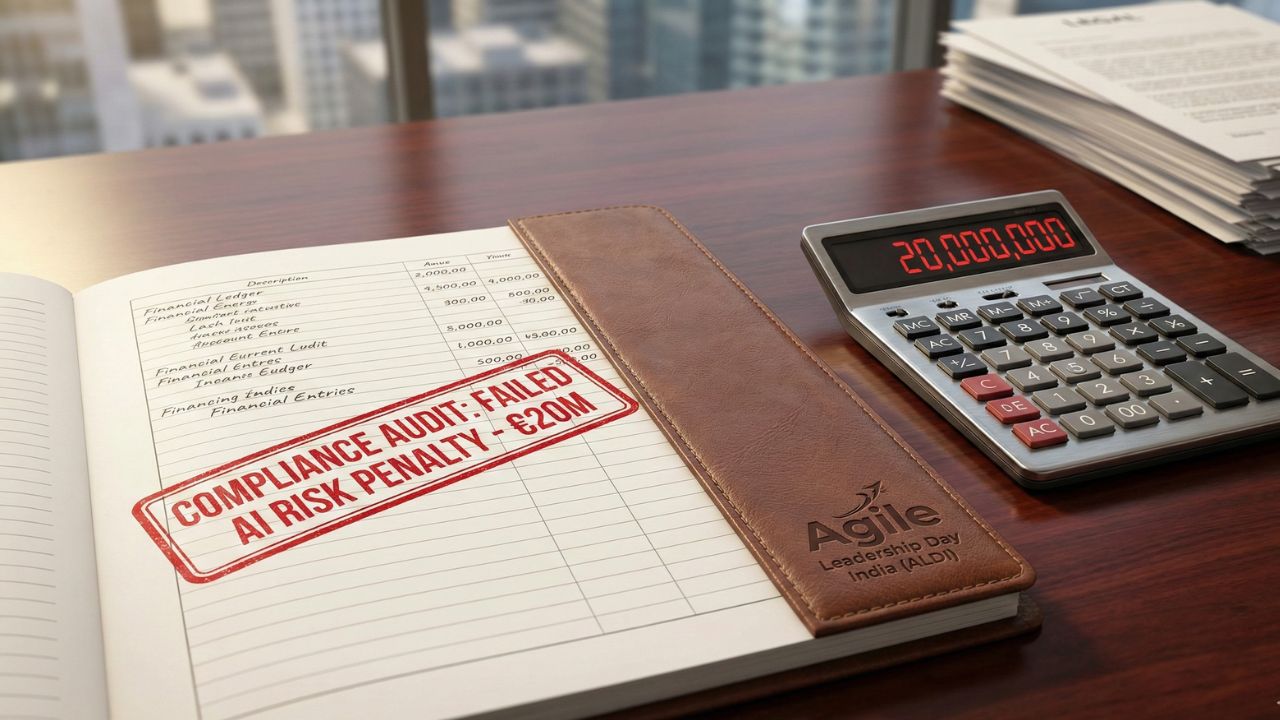

- Avoid devastating penalties: Non-compliance with regulations such as the EU AI Act can bring fines of up to EUR 35 million or 7% of worldwide annual turnover.

- Budget for errors: Treat AI hallucinations not just as technical glitches, but as tangible financial risks requiring contingency funds.

- Secure your IP: Establish clear financial protocols to prevent the accidental leakage of proprietary value through public language models.

Secure your financial future with AI fiscal risk management.

In an era where digital workers operate autonomously, organizations must actively avoid multimillion-dollar fines and protect their reserves from autonomous errors. This deep dive is part of our extensive guide on the GenAI strategic planning framework.

Failing to accurately quantify the risks of artificial intelligence leaves your corporate reserves dangerously exposed.

CFOs must proactively shield their assets from algorithmic failures and strict global regulations to maintain fiscal solvency.

The Financial Threat of Autonomous Errors

Hallucinations Cost Real Money

When a generative model fabricates information, the fallout is rarely limited to minor embarrassment.

These unpredictable AI errors can lead to incorrect financial forecasting, breached vendor contracts, or heavily flawed customer service advice.

Treating these outputs as gospel without a human-in-the-loop fallback layer translates directly to lost revenue and operational chaos.
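A human-in-the-loop fallback can start as a plain routing rule. The sketch below is a minimal illustration rather than a production design: the `ModelOutput` type, the `confidence` score, the `exposure` estimate, and both thresholds are hypothetical assumptions, not part of any vendor's API.

```python
from dataclasses import dataclass

# Hypothetical thresholds; real values depend on your own risk appetite.
CONFIDENCE_FLOOR = 0.90       # below this score, a human must review
EXPOSURE_CEILING = 10_000.0   # above this monetary exposure, a human must review


@dataclass
class ModelOutput:
    text: str
    confidence: float  # assumed score between 0.0 and 1.0
    exposure: float    # estimated monetary impact if the output is wrong


def route(output: ModelOutput) -> str:
    """Return 'auto' to release the output or 'human_review' to hold it."""
    if output.confidence < CONFIDENCE_FLOOR:
        return "human_review"
    if output.exposure > EXPOSURE_CEILING:
        return "human_review"
    return "auto"


if __name__ == "__main__":
    print(route(ModelOutput("Refund approved", confidence=0.97, exposure=120.0)))
    print(route(ModelOutput("Contract terms amended", confidence=0.97, exposure=250_000.0)))
```

Gating on monetary exposure as well as confidence means a high-stakes output is held for review even when the model appears certain.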

The Legal Reality of Algorithmic Malpractice

If an autonomous agent provides discriminatory or factually damaging advice, the enterprise that deployed it is typically the party held liable.

This liability is rapidly driving up the need for specialized corporate insurance policies to protect the balance sheet.

Understanding the true cost of these risks is just as vital as budgeting for agentic workflows.

Navigating the Compliance Minefield

EU AI Act and Devastating Fines

Regulators are moving from guidance to enforcement, and the room for unregulated enterprise AI experimentation is shrinking.

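Under the EU AI Act (Regulation (EU) 2024/1689), penalties can swiftly cripple a business. Article 99 (Penalties), which was numbered Article 71 in the 2021 draft proposal, allows fines of up to EUR 35 million or 7% of worldwide annual turnover, whichever is higher, for prohibited AI practices.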

A single major infraction or rogue algorithmic deployment could result in massive, headline-making corporate penalties.
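To make the exposure concrete, the sketch below computes the ceiling of a fine under the three tiers of the EU AI Act's penalty provisions (Article 99 of Regulation (EU) 2024/1689). The tier amounts come from the regulation; the function name, tier labels, and `is_sme` flag are illustrative assumptions. The result is an upper bound, not a prediction of what a regulator would actually impose.

```python
# Maximum administrative fines under the EU AI Act, Article 99.
# Each tier: (fixed cap in EUR, percent of total worldwide annual turnover).
TIERS = {
    "prohibited_practice": (35_000_000, 7),
    "other_obligation": (15_000_000, 3),
    "incorrect_information": (7_500_000, 1),
}


def max_fine_eur(tier: str, worldwide_turnover_eur: float, is_sme: bool = False) -> float:
    """Ceiling of the fine: the higher of the two caps, or the lower one for SMEs."""
    fixed_cap, turnover_percent = TIERS[tier]
    turnover_cap = worldwide_turnover_eur * turnover_percent / 100
    if is_sme:
        return min(fixed_cap, turnover_cap)
    return max(fixed_cap, turnover_cap)


if __name__ == "__main__":
    ceiling = max_fine_eur("prohibited_practice", 2_000_000_000)
    print(f"Ceiling: EUR {ceiling:,.0f}")
```

For a company with EUR 2 billion in worldwide turnover, the prohibited-practice ceiling is EUR 140 million, because 7% of turnover exceeds the EUR 35 million fixed cap.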

Strategic Budgeting for Defense

Proactive defense requires shifting away from purely experimental R&D budgets.

You must allocate specific, ring-fenced funds toward continuous compliance monitoring and technical legal audits.
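One way to size a ring-fenced fund is a simple expected-loss calculation: multiply each risk's estimated annual probability by its estimated cost, sum the results, and add a safety margin. Every scenario name, probability, cost, and the margin below is a made-up placeholder for illustration, not a benchmark.

```python
from dataclasses import dataclass


@dataclass
class RiskScenario:
    name: str
    annual_probability: float  # estimated chance of occurring in a year, 0.0 to 1.0
    cost: float                # estimated cost if it occurs


def contingency_reserve(scenarios: list[RiskScenario], safety_margin: float = 0.25) -> float:
    """Sum of expected losses, increased by a safety margin."""
    expected_loss = sum(s.annual_probability * s.cost for s in scenarios)
    return expected_loss * (1 + safety_margin)


# Placeholder figures for illustration only.
scenarios = [
    RiskScenario("Hallucinated forecast drives a bad decision", 0.10, 500_000),
    RiskScenario("Regulatory fine for non-compliance", 0.02, 15_000_000),
    RiskScenario("Proprietary data leaked via a public model", 0.05, 2_000_000),
]

if __name__ == "__main__":
    print(f"Suggested reserve: {contingency_reserve(scenarios):,.0f}")
```

With these placeholder inputs the expected loss is 450,000, and the 25% margin lifts the suggested reserve to roughly 562,500. The value of the exercise lies less in the number itself than in forcing explicit probability and cost estimates for each risk.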

This defensive posture pairs well with a shift to cost-per-outcome AI pricing, under which you pay only for safe, verified, and compliant business results.

Frequently Asked Questions (FAQ)

AI fiscal risk management is the strategic financial practice of identifying, quantifying, and mitigating the monetary risks associated with enterprise AI adoption.

Fines for severe non-compliance under Article 99 of the EU AI Act can reach EUR 35 million or 7% of worldwide annual turnover, whichever is higher.

The financial impact of AI hallucinations ranges from direct revenue loss due to poor automated decision-making to severe reputational damage and subsequent customer churn.

Organizations should establish a dedicated corporate contingency fund and invest heavily in specialized algorithmic malpractice insurance.

Hosting AI models in different jurisdictions can trigger complex cross-border digital service taxes and alter established transfer pricing agreements.

Trust risk is typically quantified by forecasting potential drops in customer lifetime value (CLV) and estimating the high cost of public relations recovery campaigns.

Shareholders can sue for breach of fiduciary duty if executives fail to adequately disclose material risks associated with AI deployments.

The CFO must translate broad ethical guidelines into strict financial guardrails, ensuring that inherently risky or biased AI projects do not receive operational funding.

To prevent IP leakage, fund robust data loss prevention (DLP) controls and enforce strict internal policies on what proprietary corporate data can be fed into public models.

Premiums for algorithmic malpractice insurance vary widely with the autonomy level of the AI tools covered, but enterprise-grade policies typically require six-figure annual investments.

Conclusion

Protecting your balance sheet in 2026 requires more than just cautious optimism; it requires an ironclad defensive posture.

A robust strategy for AI fiscal risk management is the only way to safely scale your digital workforce without exposing your enterprise to ruinous liabilities.

By anticipating strict regulatory fines and aggressively buffering against algorithmic errors, you ensure that your AI initiatives drive profitability rather than devastating financial loss.

Sources & References

- Internal Link: GenAI strategic planning framework

- Internal Link: Budgeting for agentic workflows

- Internal Link: Cost per outcome AI pricing

- External Reference: EU AI Act (Regulation (EU) 2024/1689), Article 99 (Penalties).

- External Reference: Gartner Research: Quantifying Algorithmic Liability and Trust Risk in the Enterprise.

- External Reference: Forrester: The CFO’s Blueprint for AI Compliance and Financial Shielding.