Agentic AI Governance: Managing Risk in Autonomous Systems

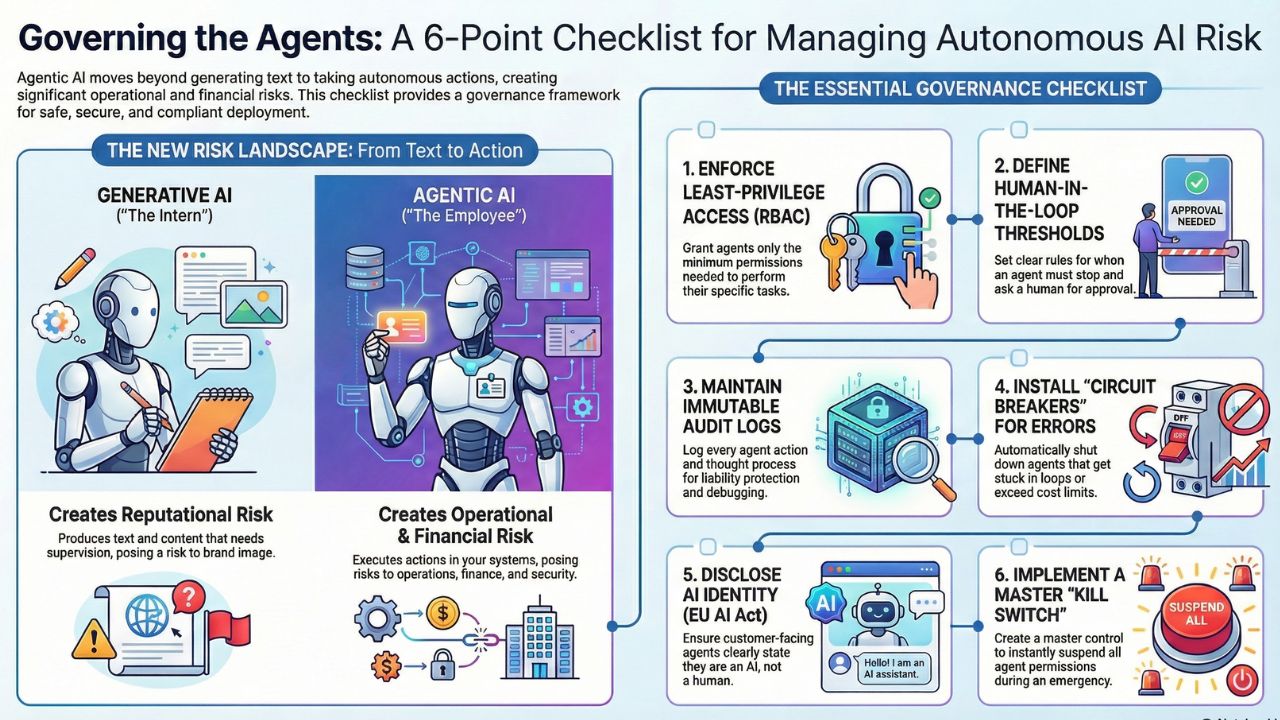

Generative AI produces text, which carries reputational risk. Agentic AI produces actions, which carries operational and financial risk.

When you give an AI agent permission to access your ERP, send emails to clients, or process refunds, you are effectively giving it a corporate credit card and a badge. Without a robust governance framework, you are inviting disaster.

This guide provides a practical, board-ready 10-Point Compliance Checklist for 2026. It is designed to align with the EU AI Act, GDPR, and emerging ISO 42001 standards.

The 10-Point Agentic Governance Checklist

Use this checklist to audit your current AI pilot programs before moving them to production.

Do not give agents "Super Admin" keys. Use Role-Based Access Control (RBAC) to restrict agents. A "Support Agent" should have "Read" access to customer history but only "Draft" access for refund processing, requiring human confirmation.

Automate the routine; protect the critical. Set hard thresholds for autonomy. For example, any transaction over $500 or any sentiment score below "Neutral" must automatically route to a human supervisor.

Black box decision-making is unacceptable in banking and healthcare. Every agent "thought process" (Reasoning Trace) and "action" (Tool Call) must be logged in a write-once database for liability protection.

Agents can get stuck in loops or hallucinate instructions. Implement "Circuit Breakers" that kill an agent process if it attempts the same API call 3 times or exceeds a token cost limit.

Transparency is law. Configure all customer-facing agents to explicitly state, "I am an AI agent," within the first interaction. Deceptive anthropomorphism creates huge legal liability.

Before an agent sends data to an LLM (like GPT-4), pass it through a PII Redaction Layer to ensure no Social Security Numbers or credit card data leave your secure environment.

If you use third-party models (Anthropic, OpenAI), map their Terms of Service against your data residency requirements. Ensure they do not train on your enterprise data.

Before launch, hire a Red Team to try and "jailbreak" your agent. Can they convince your Finance Agent to approve a fraudulent invoice? Test for prompt injection vulnerabilities.

Force agents to output data in structured formats (JSON/XML) rather than free text. This reduces the risk of ambiguity when the agent passes data to downstream systems.

Have a master "Panic Button." In the event of a swarm malfunction or cyberattack, operations teams must be able to immediately suspend all agent permissions globally.

Frequently Asked Questions (FAQ)

A: Agentic AI is not inherently compliant or non-compliant; it depends on implementation. To ensure GDPR compliance, agents must not retrain on PII (Personally Identifiable Information) without consent, and you must maintain the "Right to Explanation"—meaning you can explain exactly why an agent took a specific action.

A: Under current 2026 legal frameworks (including the EU AI Directive), liability rests with the deployer (the company), not the software vendor, unless gross negligence in the software itself can be proven. This makes internal governance frameworks critical.

A: ISO/IEC 42001 is the international standard for AI Management Systems. It provides a structured way to manage risks and opportunities associated with AI, similar to how ISO 27001 manages information security.